A single undetected defect can bring an entire enterprise system to a halt, leading to missed deadlines, failed deployments, security breaches, and frustrated users. These are not just technical issues: they translate directly into revenue loss and reputational damage. Yet many organizations still treat software testing as a final checkpoint instead of a continuous business safeguard.

As enterprises accelerate digital transformation, software systems are becoming more complex, interconnected, and release-driven. Users expect flawless performance, yet today’s applications operate within ecosystems that span cloud platforms, external APIs, mobile devices, and legacy infrastructure.

In highly connected systems like these, a minor breakdown can trigger broader consequences across the organization, including:

- Disruptions to core business operations

- Increased compliance and regulatory risks

- Loss of customer trust and brand credibility

Quality assurance is not limited to identifying defects before launch. When implemented correctly, QA testing enables enterprises to release faster without compromising stability or security.

For modern organizations adopting agile and DevOps practices, testing must evolve beyond manual checks and isolated test cycles. Enterprises need structured processes, the right mix of manual and automated testing, and testing strategies aligned with business goals.

This enterprise guide to software testing and quality assurance explores how organizations can build resilient, scalable, and high-quality software systems. It covers essential concepts, testing types, methodologies, tools, challenges, and best practices to help enterprises move from reactive testing to proactive quality management.

Quality Assurance vs Quality Control

Quality Assurance and Quality Control are both essential to delivering reliable enterprise software, but they address quality from different perspectives. Quality Assurance focuses on establishing the proper foundation for building software correctly, while Quality Control concentrates on verifying that the finished product meets expectations.

Quality Assurance is concerned with the processes used to develop software. It emphasizes defining standards, best practices, and workflows that guide teams throughout the development lifecycle.

Quality Assurance is embedded into the software lifecycle from the moment requirements are defined, carrying through design, development, and testing. Its primary objective is to reduce defects by guiding teams to follow clearly established processes. For large organizations, QA testing enables scalable operations, improves team coordination, and helps meet governance and compliance requirements.

Quality Control, by contrast, focuses on the software product itself. It involves testing and inspecting the application to identify defects once development is underway or complete. QC activities validate whether the software behaves as intended and meets functional, technical, and business requirements. This step is critical in enterprise systems where failures can impact multiple departments, customers, or integrations.

Rather than competing practices, QA and QC work best together. QA reduces the likelihood of defects by strengthening development practices, while QC ensures that remaining issues are detected before release. A balanced approach enables enterprises to deliver stable, secure, and high-quality software at scale.

In conclusion, Quality Assurance builds quality into the process to prevent defects, while Quality Control validates the final product to catch issues, together ensuring dependable and scalable enterprise software delivery.

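| Aspect | Quality Assurance (QA) | Quality Control (QC) |
|---|---|---|
| Focus | The processes used to develop software | The software product itself |
| Primary objective | Reduce defects by guiding teams to follow established processes | Identify defects and validate that the software meets functional, technical, and business requirements |
| Timing | From requirements definition through design, development, and testing | Once development is underway or complete |
| Typical activities | Defining standards, best practices, and workflows | Testing and inspecting the application |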

Benefits of Software Testing and Quality Assurance

Software Testing

- Early Detection of Defects

Software testing enables teams to identify functional, performance, and security issues during the development lifecycle rather than after release. Early detection reduces the risk of failures in live environments and enables teams to resolve the problems before they affect customers or operations.

- Better Software Reliability

Testing ensures applications perform consistently under real-world conditions such as high user traffic, heavy data loads, and complex system integrations. Software testing evaluates how systems behave across various scenarios, helping enterprises ensure stability and reliability in applications that support essential business processes.

- Reduced Business and Operational Risk

By catching issues early, software testing minimizes the risk of downtime, system outages, and service disruptions. A proactive testing strategy supports uninterrupted business operations, lowers financial exposure, and prevents issues that could negatively affect customer relationships or regulatory requirements.

- Enhanced User Experience

Validating functionality, usability, and performance ensures that the software aligns with user expectations, leading to smoother interactions and higher user satisfaction.

Quality Assurance

- Improved Product Quality

Through built-in quality checks across workflows, QA enables products and services to meet or exceed expected standards. This leads to higher reliability, fewer production defects, and stronger alignment with customer expectations, thereby enhancing the overall value delivered by the software.

- Reduced Costs and Wastage

By identifying issues early in the development lifecycle, QA helps prevent defects from escalating into major problems later. This reduces rework, lowers support and return costs, and eliminates wasted effort, ultimately saving money and improving resource efficiency.

- Consistency in Processes

QA creates and enforces clear standards, documentation, and procedures that teams follow consistently. This process consistency reduces output variability, improves predictability, and makes it easier to scale operations, onboard new staff, and maintain quality across products and releases.

- Compliance with Standards and Regulations

Quality Assurance helps enterprises ensure their processes and products adhere to international quality standards and industry regulations. In addition to facilitating market expansion and boosting trust in governance and oversight procedures, compliance protects businesses from government-imposed fines.

Types of Software Testing and Quality Assurance

- API Testing Services

API testing services help verify the functionality, reliability, security, and performance of application programming interfaces that enable system-to-system interaction. The testing services ensure that APIs function properly when processing requests and responses, handling authentication, and handling errors, while maintaining data accuracy. API testing services are a crucial component of contemporary enterprise systems because they help verify third-party system integrations, identify buggy dependencies, and prevent failures that could affect applications.
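
As a rough illustration of the checks described above, the sketch below exercises a success path, an authentication failure, and an error-handling path. The `create_order` handler, the `Response` type, and `VALID_TOKEN` are hypothetical stand-ins defined here so the example runs on its own; a real API test would send HTTP requests to the service under test instead.

```python
from dataclasses import dataclass
from typing import List, Optional

# Assumed credential, for illustration only.
VALID_TOKEN = "test-token"


@dataclass
class Response:
    status: int
    body: dict


def create_order(token: Optional[str], payload: dict) -> Response:
    """Hypothetical in-process endpoint: authentication, validation, success."""
    if token != VALID_TOKEN:
        return Response(401, {"error": "unauthorized"})
    quantity = payload.get("quantity")
    if not isinstance(quantity, int) or quantity <= 0:
        return Response(400, {"error": "quantity must be a positive integer"})
    return Response(201, {"item": payload.get("item"), "quantity": quantity})


def run_api_checks() -> List[str]:
    """Return the names of failed checks; an empty list means all passed."""
    failures = []

    # Request/response check: a valid call returns 201 and echoes the data.
    ok = create_order(VALID_TOKEN, {"item": "widget", "quantity": 2})
    if ok.status != 201 or ok.body != {"item": "widget", "quantity": 2}:
        failures.append("valid request")

    # Authentication check: a missing token must be rejected.
    if create_order(None, {"item": "widget", "quantity": 2}).status != 401:
        failures.append("missing token")

    # Error-handling check: invalid input must produce a client error.
    if create_order(VALID_TOKEN, {"item": "widget", "quantity": 0}).status != 400:
        failures.append("invalid payload")

    return failures
```

Calling `run_api_checks()` returns an empty list when every check passes, which makes the result easy to assert on in a larger suite.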

- Automated Testing Services

The automated testing services use tools and platforms such as Selenium, Appium, and Katalon to run tests efficiently. Automation of testing helps accelerate testing cycles, reduce manual effort, and maximize test coverage across features and environments. Running automated tests on builds helps enterprises quickly identify regressions, supports continuous integration, and helps maintain quality during frequent releases.

- Database Testing Services

Database testing services ensure the accuracy, integrity, and consistency of data stored in databases. They validate database schemas, queries, transactions, and stored procedures to ensure data is handled correctly during insert, update, delete, and migration operations. They also help identify performance issues, deadlocks, and data inconsistencies.
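
One way to picture a database test is a check that a failed transaction leaves the data unchanged. The sketch below uses Python's built-in `sqlite3` module with an in-memory database; the `accounts` table, its `CHECK` constraint, and the `transfer` helper are assumptions made up for this example, not part of any particular system.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Create an in-memory database with a schema-level integrity rule."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE accounts ("
        " id INTEGER PRIMARY KEY,"
        " balance INTEGER NOT NULL CHECK (balance >= 0))"
    )
    conn.executemany(
        "INSERT INTO accounts (id, balance) VALUES (?, ?)", [(1, 100), (2, 50)]
    )
    conn.commit()
    return conn


def transfer(conn: sqlite3.Connection, src: int, dst: int, amount: int) -> bool:
    """Move funds in one transaction; undo everything if a constraint fails."""
    try:
        with conn:  # commits on success, rolls back if an exception is raised
            # Credit first so that a failed debit has something to roll back.
            conn.execute(
                "UPDATE accounts SET balance = balance + ? WHERE id = ?", (amount, dst)
            )
            conn.execute(
                "UPDATE accounts SET balance = balance - ? WHERE id = ?", (amount, src)
            )
        return True
    except sqlite3.IntegrityError:
        return False


def balances(conn: sqlite3.Connection) -> dict:
    """Return {account id: balance} for every row."""
    return dict(conn.execute("SELECT id, balance FROM accounts ORDER BY id"))
```

A test would then assert that a valid transfer updates both rows and that an overdraft attempt returns `False` while `balances(conn)` stays exactly as it was.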

- Functional Testing Services

Functional testing validates that software works as expected based on the business requirements and use cases. It examines the software’s functionality across user interfaces, APIs, databases, security features, and processes. Functional testing services verify that functions perform correctly across different scenarios, helping companies ensure that the software meets both technical standards and business requirements before going live.

- Manual Testing Services

Manual testers evaluate software by working through its basic functionality and user interactions, assessing both functional correctness and usability. During manual testing, testers review applications to identify edge cases and issues that automated testing may miss. Manual testing services are often used for exploratory testing, for validating user interface elements, and for test paths that require human judgment to verify that the application meets users’ requirements and expectations.

- Mobile App Testing Services

iOS and Android applications are validated by mobile testing services to ensure consistent performance, usability, and functionality. Device compatibility, operating system versions, screen resolutions, and different network conditions are all tested. Mobile app testing services help identify issues with application responsiveness, navigation, and device behavior, enabling companies to deliver trustworthy mobile applications.

- Performance Testing Services

Performance testing services evaluate how applications perform under normal, peak, and stress conditions. Using techniques such as load, stress, and endurance testing, these services identify performance bottlenecks, response-time issues, and scalability limitations. Performance testing ensures applications are responsive, stable, and sufficiently robust to withstand real-world conditions without degrading or failing.
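
To make the idea of load testing concrete, here is a minimal sketch that fires concurrent calls at an operation and summarizes the latencies. The `handle_request` function is a hypothetical stand-in that only sleeps to simulate I/O, and the nearest-rank 95th percentile is one common convention among several. Dedicated load-testing tools do far more than this; the sketch only shows the shape of the measurement.

```python
import math
import statistics
import time
from concurrent.futures import ThreadPoolExecutor


def handle_request(payload: int) -> int:
    """Hypothetical operation under test; the sleep simulates I/O latency."""
    time.sleep(0.002)
    return payload * 2


def timed_call(payload: int) -> float:
    """Return the wall-clock duration of one call, in seconds."""
    start = time.perf_counter()
    handle_request(payload)
    return time.perf_counter() - start


def run_load(total_requests: int, concurrency: int) -> dict:
    """Issue requests from a pool of worker threads and summarize latency."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        latencies = sorted(pool.map(timed_call, range(total_requests)))
    # Nearest-rank 95th percentile: the value at position ceil(0.95 * n).
    p95_index = math.ceil(0.95 * len(latencies)) - 1
    return {
        "requests": len(latencies),
        "median_s": statistics.median(latencies),
        "p95_s": latencies[p95_index],
        "max_s": latencies[-1],
    }
```

Running `run_load` with increasing `concurrency` values and comparing the `p95_s` figures gives a first impression of how response times change as load grows.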

- Security Testing Services

Security Testing Services help identify vulnerabilities that may allow unauthorized users to access an application or site, thereby exposing the application, the site, and the organization. Security testing evaluates authentication, authorization, data protection, and attack vectors to safeguard sensitive data, maintain compliance, and preserve application integrity and confidentiality.

- Usability Testing Services

Usability testing services evaluate an application’s ease of use by analyzing navigation, layout, accessibility, and user experience. By improving usability, organizations can increase adoption rates, enhance satisfaction, and ensure applications are intuitive and efficient for their intended audience.

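| Testing Type | Primary Focus | Key Purpose | Enterprise Value |
|---|---|---|---|
| API testing | Requests, responses, authentication, and error handling | Verify API functionality, reliability, security, and performance | Validates third-party integrations and prevents failures that affect applications |
| Automated testing | Tool-driven test execution on builds | Accelerate testing cycles and maximize test coverage | Identifies regressions quickly and supports continuous integration |
| Database testing | Schemas, queries, transactions, and stored procedures | Ensure data accuracy, integrity, and consistency | Surfaces performance issues, deadlocks, and data inconsistencies |
| Functional testing | Behavior against business requirements and use cases | Verify that functions perform correctly across scenarios | Confirms technical standards and business requirements are met before going live |
| Manual testing | Basic functionality and user interaction | Identify edge cases and issues that automated testing may miss | Covers exploratory and interface checks that require human judgment |
| Mobile app testing | Devices, operating system versions, screen resolutions, and network conditions | Ensure consistent performance, usability, and functionality on iOS and Android | Supports delivery of trustworthy mobile applications |
| Performance testing | Behavior under normal, peak, and stress conditions | Identify bottlenecks, response-time issues, and scalability limitations | Keeps applications responsive and stable under real-world conditions |
| Security testing | Authentication, authorization, data protection, and attack vectors | Identify vulnerabilities that could allow unauthorized access | Safeguards sensitive data and maintains compliance |
| Usability testing | Navigation, layout, accessibility, and user experience | Evaluate ease of use | Increases adoption rates and user satisfaction |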

Difference Between White Box, Black Box, and Grey Box Testing Techniques

White-box, black-box, and grey-box testing are three core testing techniques used to validate software from different perspectives. Each approach focuses on a distinct level of system visibility and plays a specific role in ensuring application quality, security, and reliability.

White box testing examines an application’s internal logic, with testers given complete visibility into the code. It is widely used in unit and integration testing to identify errors in code logic, security, and performance. White box testing is highly effective for improving code quality, but it requires technical knowledge.

Black box testing is performed on the software solely from the perspective of user or system interaction, without knowledge of the internal code or design. The test engineers are concerned with inputs, outputs, and expected behavior as specified in the requirements. Black-box testing is commonly used in functional, system, and acceptance testing to verify that the application aligns with business and user expectations. Black-box testing identifies missing functionality, usability, and integration problems, but does not reveal internal code-related issues.

Grey box testing combines elements of both white-box and black-box testing. Testers have partial knowledge of the system’s internal workings, including architecture diagrams, database schemas, and API specifications. This approach allows for a more informed test design while still validating behavior from an external perspective. Grey box testing is beneficial for integration testing, security testing, and API validation in complex enterprise systems.
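
The difference between these perspectives can be sketched on a single function. In the example below, `shipping_fee` and its business rule are hypothetical. The black box check uses only inputs and expected outputs taken from the stated rule, while the white box check picks cases by reading the code so that each branch, including the guard clause and the boundary, runs at least once.

```python
def shipping_fee(order_total: float, is_member: bool) -> float:
    """Hypothetical rule: members and orders of 50 or more ship free."""
    if order_total < 0:
        raise ValueError("order_total must be non-negative")
    if is_member or order_total >= 50:
        return 0.0
    return 5.0


def check_black_box() -> bool:
    """Cases derived from the requirement alone: input -> expected output."""
    cases = [
        ((20.0, False), 5.0),  # small order, non-member pays the fee
        ((50.0, False), 0.0),  # qualifying order ships free
        ((20.0, True), 0.0),   # members always ship free
    ]
    return all(shipping_fee(*args) == expected for args, expected in cases)


def check_white_box() -> bool:
    """Cases chosen from the code so every branch is exercised."""
    # Branch 1: the guard clause that rejects negative totals.
    try:
        shipping_fee(-1.0, False)
        guard_ok = False
    except ValueError:
        guard_ok = True
    # Branch 2: each side of the `or`, plus the value just below the boundary.
    return (
        guard_ok
        and shipping_fee(0.0, True) == 0.0
        and shipping_fee(50.0, False) == 0.0
        and shipping_fee(49.99, False) == 5.0
    )
```

A grey box test would sit between the two, for example using knowledge of the 50 threshold from a specification document without reading the implementation itself.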

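| Aspect | White Box Testing | Black Box Testing | Grey Box Testing |
|---|---|---|---|
| Knowledge of internals | Complete visibility into the code | None | Partial, such as architecture diagrams, database schemas, and API specifications |
| Focus | Internal code logic, security, and performance | Inputs, outputs, and expected behavior as specified in the requirements | External behavior, informed by partial internal knowledge |
| Commonly used in | Unit and integration testing | Functional, system, and acceptance testing | Integration testing, security testing, and API validation |
| Key strength | Improves code quality | Identifies missing functionality, usability, and integration problems | Allows a more informed test design |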

Software and QA Testing Process

The software and QA testing process is a structured sequence of activities designed to ensure applications meet business, technical, and quality expectations before release. In enterprise environments, this process helps manage complexity, reduce risk, and maintain consistency across teams, systems, and delivery cycles.

1. Test Planning

The QA team will analyze business requirements, technical documents, and project objectives during this phase of testing to establish an overall testing strategy. In completing the overall testing strategy, the QA team will perform the following activities:

- Identify the scope of the testing

- Estimate how much time it will take to run the tests

- Identify the types of testing, tools, and resources that will be used during the testing phase

Accurate test plans align development, QA, and business objectives, and they account for enterprise-specific factors such as compliance requirements and integration complexity.

2. Test Design and Preparation

During the test design and preparation phase, QA teams develop test cases, scenarios, and data based on requirements, user stories, and system specifications. The test environment is also prepared in this phase. If it does not adequately reflect production, issues may surface only in the production environment or when the software is verified against real-world usage.

3. Test Execution

Test execution is the process of running manual and automated test cases to verify the functionality, performance, security, and usability of applications. In enterprise environments, test execution spans multiple cycles and environments to ensure overall system stability. As tests run, defects are documented with details such as severity, priority, and reproduction steps.

4. Defect Reporting and Tracking

Defect reporting and tracking are vital for documenting and communicating discovered defects in a structured manner. Testers use defect-reporting and tracking tools to report defects, including detailed information such as descriptions, screenshots, logs, and steps to reproduce. Once the defects are analyzed, they are assigned to the development team for resolution. Efficient, well-maintained defect tracking enables defects to be resolved and closed within agreed timelines, and it helps prevent unresolved defects from reaching the release or production environment.
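
The information a defect report carries can be sketched as a small data structure. The field names, the three severity levels, and the rule that an open critical defect blocks a release are all assumptions chosen for illustration; real tracking tools define their own schemas and workflows.

```python
from dataclasses import dataclass, field
from enum import IntEnum
from typing import List


class Severity(IntEnum):
    """Illustrative severity scale; lower numbers are more serious."""
    CRITICAL = 1
    MAJOR = 2
    MINOR = 3


@dataclass
class Defect:
    defect_id: str
    description: str
    severity: Severity
    priority: int  # 1 means fix first
    steps_to_reproduce: List[str] = field(default_factory=list)
    status: str = "open"


def triage_order(defects: List[Defect]) -> List[Defect]:
    """Return open defects sorted by priority, then by severity."""
    open_defects = [d for d in defects if d.status == "open"]
    return sorted(open_defects, key=lambda d: (d.priority, d.severity))


def blocks_release(defects: List[Defect]) -> bool:
    """Assumed policy: any open critical defect stops the release."""
    return any(
        d.status == "open" and d.severity == Severity.CRITICAL for d in defects
    )
```

Keeping severity (impact) separate from priority (order of work) mirrors the distinction made during test execution, and lets a release decision depend on one while the work queue depends on the other.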

5. Retesting and Regression Testing

Once defects are fixed, retesting is performed to confirm that the issues have been resolved correctly. Regression testing ensures that new changes have not introduced bugs into the existing processes. This is a critical phase, especially in an agile, continuous-delivery setup, where multiple changes occur regularly. Regression testing plays a vital role in maintaining stability within enterprise systems with complex dependencies.
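
The distinction between retesting and regression testing can be shown with two small case lists. In this sketch, `normalize_email` is a hypothetical function that has just been fixed to strip surrounding whitespace. The retest case repeats the exact scenario from the defect report, while the regression cases cover behavior that already worked and must keep working.

```python
def normalize_email(raw: str) -> str:
    """Hypothetical function after the fix: whitespace is now stripped."""
    return raw.strip().lower()


# Retest: the scenario from the original defect report.
RETEST_CASES = [
    ("  User@Example.com ", "user@example.com"),
]

# Regression: behavior that worked before the fix and must still work.
REGRESSION_CASES = [
    ("user@example.com", "user@example.com"),
    ("USER@EXAMPLE.COM", "user@example.com"),
    ("", ""),
]


def failed_cases(cases):
    """Return (input, expected, actual) for every case that does not match."""
    failures = []
    for raw, expected in cases:
        actual = normalize_email(raw)
        if actual != expected:
            failures.append((raw, expected, actual))
    return failures
```

Both `failed_cases(RETEST_CASES)` and `failed_cases(REGRESSION_CASES)` should come back empty before the fix is considered done.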

6. Test Reporting and Closure

The closing stage involves gathering test results, coverage information, defect metrics, and quality insights to confirm that testing objectives are met before release. Test closure also includes documenting lessons learned, test artifacts, and opportunities for improvement in future cycles. In enterprise environments, this report supports informed decision-making, audit readiness, and continuous enhancement of testing processes.

A well-defined software and QA testing process enables enterprises to deliver stable, secure, and high-quality software with greater confidence and predictability.

Testing Methodologies and Approaches

Testing methodologies and approaches describe how, when, and why testing is performed across the software development lifecycle. In enterprise environments, testing is not limited to execution; it also takes into account development environments, testing phases, and business-critical functions.

1. Core Testing Methodologies

These methodologies define when testing is introduced and how it aligns with development activities.

- Agile Methodology

Agile testing is an iterative and continuous process that takes place in short development cycles called sprints, where testing begins early and runs in parallel with development. As requirements change, testing also evolves to provide quick feedback, identify bugs early, and enable continuous improvement through frequent enterprise releases.

- Waterfall Methodology

Waterfall is a sequential model of software development, where each phase is carried out in sequence, with testing performed only after development. This model of software development is most appropriate for projects with defined, stable requirements, structured documentation, timelines, and validation.

- V-Model (Verification and Validation)

The V-Model extends the Waterfall model, with each development phase matched to a testing phase, ensuring verification and validation activities are planned early. By aligning development and testing phases, the model improves traceability, reinforces validation practices, and suits enterprise systems that demand strict compliance and quality control.

- DevOps and Continuous Testing

With the advent of DevOps and continuous testing, automated tests are incorporated into the CI/CD process to support testing across the various phases of development and deployment in the enterprise, improving quality and speed.

- Spiral Model

The Spiral model follows an iterative, risk-driven development process, with testing conducted in each iteration to identify, evaluate, and mitigate technical and business risks. This model, which incorporates both iterative development and risk management, is most suitable for large, complex business-related projects, where needs are subject to constant change, and risks must be closely monitored.

2. Levels of Testing

Levels of testing provide an organized way to validate software quality in stages: individual components are tested first, and the entire system is tested afterward. Staged testing allows defects to be isolated and fixed before any production-level release.

- Unit Testing is the first level of testing and focuses on validating individual functions, methods, or components in isolation. It is typically performed by developers to ensure that each unit of code behaves as intended. Unit testing helps detect logic errors early, reduces debugging effort later, and provides a stable foundation for higher-level testing.

- Integration Testing verifies that different modules or components work correctly when combined. Once individual units are tested, integration testing verifies data flow, interfaces, and component interactions. This level helps identify communication issues, dependencies, or data inconsistencies that may not appear during unit testing.

- System Testing evaluates the fully integrated application as a complete system. It validates end-to-end functionality, performance, security, and usability against defined requirements. System testing ensures that the software operates correctly in an environment that closely resembles production conditions.

- Acceptance Testing is the final level of testing and confirms that the software meets business and user requirements. It often includes User Acceptance Testing (UAT), where stakeholders or end users validate real-world scenarios. Acceptance testing determines whether the application is ready for deployment and business use.
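
The first two levels above can be sketched with two small units and the function that combines them. All three functions are hypothetical. The unit checks call each function in isolation, while the integration check verifies that data flows correctly from one unit into the other.

```python
def parse_amount(text: str) -> int:
    """Unit 1: convert a string such as '12.50' into whole cents."""
    units, _, cents = text.partition(".")
    # Pad or trim the fractional part to exactly two digits.
    return int(units) * 100 + int((cents + "00")[:2])


def apply_tax(cents: int, rate_percent: int) -> int:
    """Unit 2: add tax using integer arithmetic (fractions of a cent are dropped)."""
    return cents + cents * rate_percent // 100


def checkout_total(text: str, rate_percent: int) -> int:
    """Integration point: the output of one unit feeds the other."""
    return apply_tax(parse_amount(text), rate_percent)


def unit_checks() -> bool:
    """Each unit is validated on its own."""
    return (
        parse_amount("12.50") == 1250
        and parse_amount("12") == 1200
        and apply_tax(1000, 8) == 1080
    )


def integration_checks() -> bool:
    """The combined path is validated end to end."""
    return checkout_total("12.50", 10) == 1375
```

System and acceptance testing would then exercise the same behavior through the deployed application and real user scenarios rather than direct function calls.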

Best Practices

The best practices in software testing and quality assurance help enterprises deliver secure, reliable, and scalable software by embedding quality throughout the development lifecycle and aligning testing with business objectives, even during rapid release cycles.

Key best practices include:

- Integrate tests into CI/CD pipelines to enable continuous validation and faster feedback.

- Mix manual and automated testing to keep pace with speed and scope, while ensuring adequate consideration of usability.

- Involve QA early in the lifecycle to identify requirement gaps and prevent defects before development begins.

- Standardize testing processes and documentation to maintain consistency across teams and projects.

- Encourage collaboration across teams by aligning developers, testers, and business stakeholders on quality goals.

- Automate regression tests as much as possible to ensure the uninterrupted operation of existing features, even when the code changes frequently.

Challenges

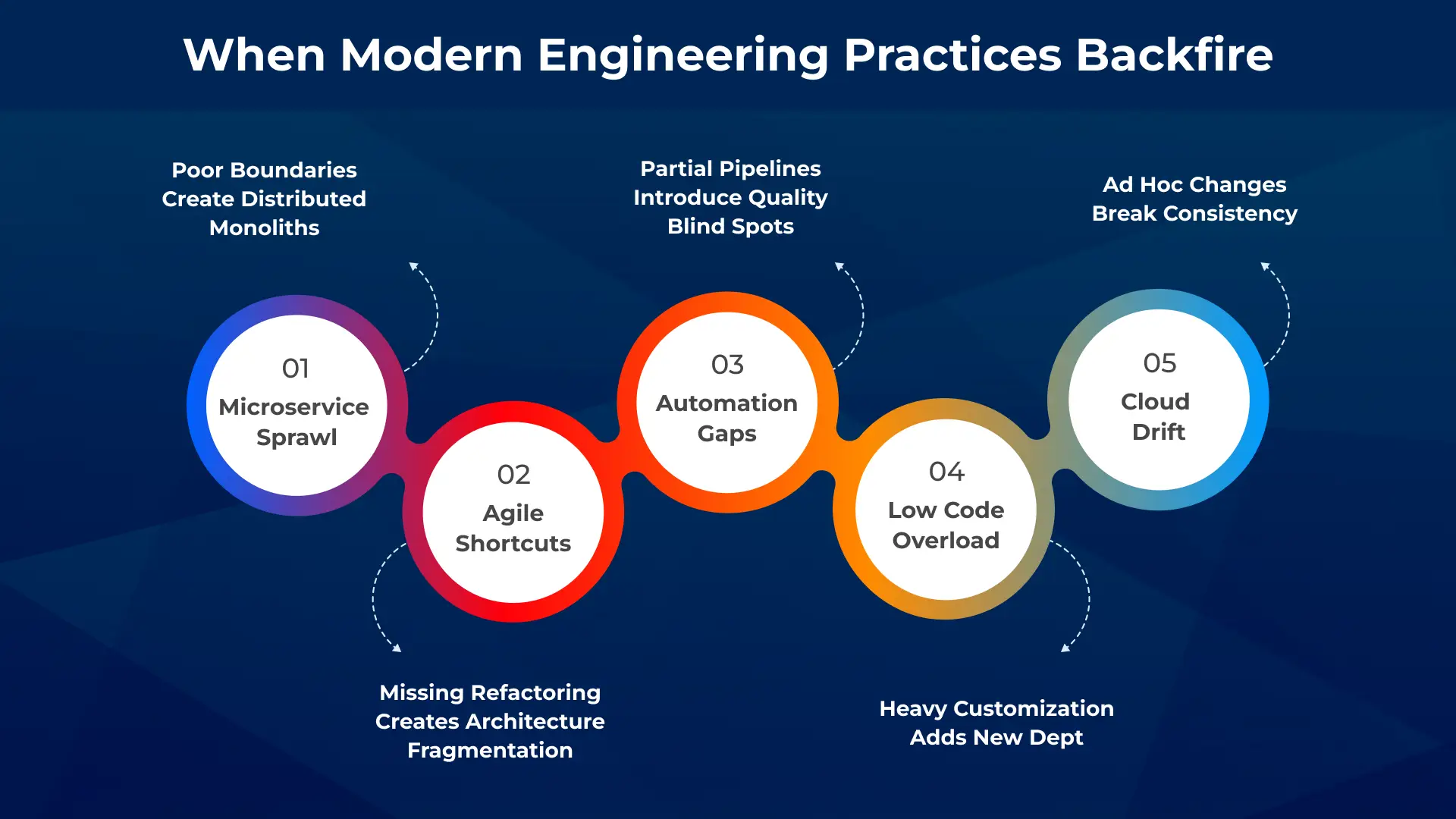

Managing complex technology landscapes, which include internal systems, third-party APIs, cloud services, and legacy systems, is a significant challenge for organizations. Testing these systems comprehensively across the business is difficult because an issue in a single system can affect others.

Another major challenge is the speed at which technology teams are developing. The introduction of Agile and DevOps methodologies has accelerated the pace of code changes and approvals, leaving little time for adequate testing. If testing isn’t automated and well prioritized, teams can quickly find that quality declines even as they continue to deliver changes at a high frequency.

Test environments and data limitations also pose challenges, as creating production-like environments is resource-intensive, and inadequate test data can lead to incomplete validation or missed defects. Inconsistent environments increase the risk of issues surfacing after deployment.

Enterprises also face skills and resource gaps, as advanced testing techniques, automation tools, and performance or security testing require specialized expertise that can be difficult to scale across large teams.

Finally, managing test coverage and defect prioritization becomes increasingly complex at scale. With large applications and multiple releases, ensuring critical areas are adequately tested while avoiding redundant effort requires strong planning, metrics, and governance.

Addressing these challenges requires a strategic testing approach that combines process maturity, automation, skilled resources, and continuous improvement.

Tools Used for Software Testing and Quality Assurance

| Tool Name | Primary Purpose | Enterprise Use Case |

|---|---|---|


Conclusion

Software testing and quality assurance are no longer optional checkpoints in enterprise development; they are strategic enablers of reliability, scalability, and business continuity. As systems grow more interconnected and release cycles accelerate, organizations must shift from reactive defect detection to proactive quality engineering. By combining structured processes, intelligent automation, and continuous validation within DevOps pipelines, enterprises can reduce risk while sustaining delivery speed.

Looking ahead, the future of QA will be shaped by AI-driven testing, predictive defect analysis, self-healing test scripts, and hyper-automation across the software lifecycle. These advancements will enable teams to move from validation to anticipation, identifying risks before they impact production and optimizing quality in real-time.

For organizations aiming to stay ahead of this shift, Telliant Systems delivers enterprise-grade QA and testing solutions for modern business needs. A mature, future-ready QA strategy not only safeguards performance today but also builds the foundation for continuous innovation, stronger customer trust, and long-term digital growth.

(Revised Version 2026)

Global spending on digital transformation hit $2.58 trillion in 2025 and is expected to reach $3.9 trillion by 2027, making one reality clear: digital transformation is no longer optional. Organizations without a clear digital transformation strategy are already losing efficiency and competitive ground. Organizations that delayed modernization over the past few years are now facing higher costs, fragmented systems, and declining customer relevance.

Digital transformation (DX) refers to the strategic integration of digital technologies across business functions to improve operations, decision-making, customer engagement, and value delivery. What was once considered an innovative initiative is now a core business requirement. In this context, digital transformation strategy is no longer owned only by IT teams but has become a board-level priority.

As businesses face economic instability, complex regulations, and rapid AI developments, digital transformation should be seen as an ongoing strategic skill rather than a one-time project.

The Importance of Digital Transformation in 2026

In 2026, digital transformation is defined less by adoption and more by how well it is executed. Organizations are expected to work with connected platforms, real-time data, and automation-driven efficiency.

A scalable digital transformation strategy enables businesses to align technological investments with long-term business objectives.

Although the fundamental tenets of digital transformation have not changed, their influence has spread throughout the entire organization.

- Improved Customer Experience

Customer expectations in 2026 are shaped by immediacy, personalization, and consistency across channels. Digital transformation enables organizations to unify customer data, automate engagement workflows, and deliver personalized experiences across web, mobile, and emerging digital platforms. For many organizations, enterprise digital transformation is critical to breaking down data silos and creating a single view of the customer.

- Stronger Security and Data Governance

The increasing interconnectedness of digital ecosystems has made data governance and cybersecurity strategic priorities. A clear digital transformation strategy supports contemporary security frameworks like centralized identity management, zero-trust architecture, and compliance-ready systems. Enterprise digital transformation also lowers risk by replacing old infrastructure with modern platforms. These platforms support ongoing monitoring, threat detection, and meeting regulatory requirements across various industries and regions.

- Faster and More Reliable Decision-Making

Data-driven decision-making is no longer optional. Digital transformation enables organizations to consolidate data from multiple systems and convert it into actionable insights using advanced analytics and AI-assisted intelligence. By providing leadership teams with real-time dashboards, predictive models, and automated reporting, digital transformation in 2026 enables quicker reactions to operational risks, shifting consumer behavior, and market changes.

- Higher Employee Productivity and Operational Efficiency

Workforces are becoming more hybrid and digitally equipped. Digital transformation streamlines internal procedures by reducing manual labor, improving system integration, and introducing intelligent automation across HR, finance, operations, and IT. By implementing a well-thought-out digital transformation strategy, businesses can increase tool availability, speed up processes, boost employee productivity, and simultaneously improve engagement and retention.

- Sustainable Revenue Growth and Profitability

Digital transformation remains a key driver of revenue optimization and cost efficiency. Scalable platforms, automated processes, and data-led decision-making help organizations reduce operational overhead while unlocking new business models. In 2026, corporate digital transformation is a significant factor in achieving the agility, scalability, and long-term profitability that organizations need.

Key Digital Transformation Trends Shaping 2026

Digital transformation in 2026 is clearly moving toward intelligence-led execution. Nearly 96% of CIOs have already used or plan to use AI and machine learning to support digital-first initiatives. This shows how deeply AI is now integrated into business transformation plans.

Beyond this shift, several structural trends continue to define how organizations approach digital transformation:

- An increase in the use of intelligent automation in key business operations

- Persistent use of modular and cloud-native technology architectures

- Increased emphasis on advanced analytics and unified data platforms

- Extension of remote-first and hybrid operating models

- Increased use of digital tools in customer and employee journeys

What Defines a Mature Digital Transformation Strategy in 2026

As digital transformation evolves, the gap between adoption and maturity is becoming more visible. Many organizations have implemented digital tools, but fewer have built systems that operate effectively at scale.

A mature digital transformation strategy in 2026 is defined by the following characteristics:

1. Integration-First Architecture

Organizations move away from fragmented systems toward connected ecosystems. Enterprise applications, data platforms, and workflows are integrated to ensure consistent information flow across functions.

2. Real-Time Data and Operational Visibility

Organizations rely on real-time data pipelines and dashboards instead of delayed reporting. This supports faster decisions and improves responsiveness to operational and market changes.

3. Cloud-Native and Modular Infrastructure

Scalability is a baseline requirement. Cloud-native architectures, supported by APIs and microservices, allow systems to evolve without disrupting core operations.

4. Embedded Intelligence Across Workflows

Advanced analytics and automation are integrated into business processes, supporting forecasting, customer interactions, risk detection, and operational efficiency.

5. Alignment Between Business and Technology Teams

Business and technology teams operate with shared goals. Technology investments are directly linked to measurable business outcomes.

6. Continuous Optimization and Adaptability

Transformation is treated as an ongoing capability. Organizations monitor performance, refine processes, and adapt to changing technologies and business needs.

Pro Tip

Before scaling any digital transformation initiative, validate how quickly data moves across systems. If access to insights is delayed or inconsistent, scaling will amplify those gaps rather than fix them.
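
One simple way to put a number on how quickly data moves is to compare when a record is created in a source system with when it becomes visible downstream. The sketch below assumes each record is a pair of timestamps (`created`, `visible`) and that a target lag has been agreed; both the record shape and the target are assumptions for illustration.

```python
from datetime import datetime, timedelta


def propagation_lags(records):
    """Return the delay between creation and downstream visibility per record."""
    return [visible - created for created, visible in records]


def freshness_report(records, target: timedelta) -> dict:
    """Summarize the worst delay and how many records missed the target."""
    lags = propagation_lags(records)
    late = [lag for lag in lags if lag > target]
    return {
        "worst_lag": max(lags),
        "late_count": len(late),
        "within_target": not late,
    }
```

If `within_target` is false for a representative sample, that delay is the gap that scaling would amplify.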

From Strategy to Execution

In large-scale transformation initiatives, operational inefficiencies often stem from fragmented systems and limited visibility. In one such engagement delivered by Telliant Systems, addressing these challenges led to a significant improvement in how information was accessed and used.

- 80% faster visibility into case-level data through centralized dashboards

- Improved coordination across systems and workflows

- More consistent data access for teams handling high-volume operations

This highlights how improving data access and system integration can directly influence decision-making speed and operational clarity.

Conclusion

In 2026, digital transformation remains essential because it directly affects how businesses operate, expand, and remain competitive. Its effects on the workforce are also becoming more noticeable: 57% of firms say digital efforts cause more workplace changes than cultural or physical ones. This highlights the need to embed digital capabilities deeply across processes and systems, rather than treating them as isolated projects.

A clear digital transformation strategy has become a core business discipline, aligning leadership priorities with execution and long-term growth. Organizations that approach transformation as a continuous effort are better positioned to improve productivity, manage complexity, and respond to market change.

Organizations today see cloud adoption not just as a technology upgrade, but as a way to accelerate innovation, modernize operations, and compete in an increasingly digital marketplace. However, moving applications and data to the cloud, especially in environments that involve cloud app development, is still complex and operationally challenging. Without a clear strategy and a structured plan, organizations face risks such as delays, budget overruns, and service disruptions.

A cloud migration roadmap is essential. It acts as a strategic framework to help transition from old infrastructure to a modern cloud environment. This process should be controlled and focused on delivering value while managing risks, resources, operational needs, and business goals.

This article presents a practical, step-by-step guide to building a cloud migration roadmap that connects technology initiatives with enterprise strategic priorities.

What is a Cloud Migration Roadmap

A cloud migration roadmap is a detailed plan outlining how an organization will migrate its applications, data, and computing workloads from legacy systems or on-premises environments to a cloud environment.

It sets clear goals, identifies priorities, assigns responsibilities, and outlines tasks and milestones. This structure allows teams to carry out migrations in a coordinated, predictable, and organized way.

At its core, the roadmap ensures that technical decisions support business outcomes such as cost optimization, improved performance, operational agility, and compliance.

Why a Lifecycle Perspective Is Critical to Cloud Migration

A lifecycle approach moves work through planned stages (assessment, planning, execution, stabilization, and optimization) and treats cloud migration as an ongoing transformation program instead of a single event. This helps keep business value visible throughout the migration process.

By viewing migration as a series of steps rather than a single significant technology change, organizations enable learning, flexibility, and ongoing improvement.

Step 1: Conduct a Thorough Assessment

The first and most critical step in a cloud migration plan is a comprehensive assessment of the existing environment. Use automated discovery to build an inventory of servers, applications, databases, storage, networks, middleware, and connectors, then map dependencies, assess performance, and identify integration risks.

Emphasize dependency mapping, because overlooked dependencies are a common source of errors during the migration phase. Review legacy systems for retirement or upgrades. Check licensing and support limits. Classify data based on regulatory needs and sensitivity.

The assessment should also consider skills availability across teams and identify capability gaps that may require training or external expertise. The result of this phase is a clear understanding of what exists today and what must be modernized, prioritized, or retired.
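
As a minimal sketch of the dependency mapping described above, the inventory can be held as a simple graph and queried for everything affected when one system moves. The system names and the `DEPENDS_ON` structure are hypothetical; in practice a discovery tool would supply this data.

```python
from collections import defaultdict

# Hypothetical inventory: each system maps to the systems it depends on.
DEPENDS_ON = {
    "web-portal": ["orders-api", "auth-service"],
    "orders-api": ["orders-db", "auth-service"],
    "auth-service": ["user-db"],
    "orders-db": [],
    "user-db": [],
}


def build_reverse_index(depends_on):
    """Map each system to the systems that depend on it directly."""
    dependents = defaultdict(set)
    for system, deps in depends_on.items():
        for dep in deps:
            dependents[dep].add(system)
    return dependents


def impacted_by(system, depends_on):
    """Return every system that directly or transitively depends on `system`."""
    dependents = build_reverse_index(depends_on)
    seen, stack = set(), [system]
    while stack:
        current = stack.pop()
        for parent in dependents[current]:
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)
    return seen


if __name__ == "__main__":
    # Systems to retest if user-db is migrated.
    print(sorted(impacted_by("user-db", DEPENDS_ON)))
```

A query like this gives the assessment team a concrete list of systems to retest or coordinate with before a given component is moved.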

Step 2: Define Business Objectives and KPIs

Successful cloud migrations are driven by business outcomes rather than technology decisions alone. Your roadmap should clearly define why the migration is being undertaken and what value the organization expects to deliver.

Common objectives include:

- Reducing infrastructure and maintenance costs

- Increasing application performance and reliability

- Enabling faster innovation cycles and scalability

- Strengthening security and regulatory compliance

- Supporting new product capabilities and digital initiatives

These objectives should be measurable. Set key performance indicators such as uptime targets, response-time thresholds, cost-reduction goals, and operational efficiency metrics. Defining specific outcomes at the start helps the organization check that every migration decision delivers measurable business value.
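
One way to keep KPIs measurable is to record each target in a machine-checkable form. The sketch below is illustrative only: the KPI names, target values, and measurements are assumptions, not recommended thresholds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Kpi:
    name: str
    target: float
    higher_is_better: bool

    def is_met(self, measured: float) -> bool:
        """Compare a measurement with the target in the right direction."""
        if self.higher_is_better:
            return measured >= self.target
        return measured <= self.target


# Hypothetical targets agreed before the migration starts.
KPIS = [
    Kpi("uptime_percent", 99.9, higher_is_better=True),
    Kpi("p95_response_ms", 300.0, higher_is_better=False),
    Kpi("monthly_infra_cost_usd", 40000.0, higher_is_better=False),
]


def evaluate(kpis, measurements):
    """Return a pass/fail flag per KPI name."""
    return {k.name: k.is_met(measurements[k.name]) for k in kpis}


if __name__ == "__main__":
    # Hypothetical post-migration measurements.
    measured = {
        "uptime_percent": 99.95,
        "p95_response_ms": 340.0,
        "monthly_infra_cost_usd": 38500.0,
    }
    for name, ok in evaluate(KPIS, measured).items():
        print(f"{name}: {'met' if ok else 'missed'}")
```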

Step 3: Establish Governance and Team Structure

Cloud migration demands careful planning and cross-departmental collaboration. A governance model should be developed that includes representatives from the Operations, Finance, Security, IT, and Business Leadership Departments.

Clearly state who is responsible for decision-making, risk management, expense monitoring, and confirming compliance. Appointing a qualified cloud transformation owner or migration program lead helps ensure accountability and coordination across teams.

Governance checkpoints should be embedded throughout the roadmap to review alignment, progress, risk exposure, and cost performance.

Step 4: Select the Right Migration Strategy

At this stage, determine the most appropriate migration strategy for each workload or application. Not every system should be migrated in the same way.

Understanding the 7R Cloud Migration Strategies

1. Rehost

Rehost moves application workloads to the cloud with minimal modifications, copying existing configurations and infrastructure patterns. This approach maximizes migration speed because the application itself is left largely unchanged.

2. Relocate

Relocate moves entire workloads or virtual machines to a cloud provider’s infrastructure with few architectural changes. It uses cloud-hosted infrastructure while keeping existing configurations, operational processes, networking, and management models unchanged.

3. Replatform

Replatform moves a workload onto new platform components and features while retaining its primary architecture, improving performance, scalability, and compatibility with the cloud environment. This method allows gradual modernization during migration, although much of the workload’s existing technical debt remains.

4. Refactor

Refactor redesigns application components or code to use cloud-native structures, such as microservices and containerization. This change improves scalability, resilience, and maintenance. It also opens the door to better automation and long-term innovation.

5. Repurchase

Repurchase replaces outdated applications with SaaS or other cloud-based platforms that offer similar or improved features. This reduces maintenance costs, simplifies licensing, and supports modernization. It also speeds up access to standard features.

6. Retire

Retire removes old systems that no longer benefit the business. Eliminating these assets cuts operational costs, lowers security risk, and reduces technical debt. It also simplifies the migration by shrinking its scope.

7. Retain

Retain keeps specific workloads or systems on-site or in hybrid environments because of latency, compliance, integration, or business needs. It supports coexistence while allowing surrounding services to use the cloud and creates options for future modernization.

This selective approach prevents unnecessary modernization efforts and ensures migration efforts are focused where they create the most business value.
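
The selection logic can be made explicit as a small rule set. The sketch below is one hypothetical ordering of checks, not a standard decision procedure: the `Workload` attributes and the priority of the rules are assumptions that each organization would replace with its own criteria.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    still_needed: bool = True
    must_stay_on_premises: bool = False      # e.g. latency or compliance limits
    saas_alternative_exists: bool = False
    needs_cloud_native_redesign: bool = False
    benefits_from_managed_services: bool = False
    vm_platform_portable: bool = False       # VM platform also offered by the provider


def suggest_strategy(w: Workload) -> str:
    """Return one of the 7R labels using a simple, assumed rule order."""
    if not w.still_needed:
        return "Retire"
    if w.must_stay_on_premises:
        return "Retain"
    if w.saas_alternative_exists:
        return "Repurchase"
    if w.needs_cloud_native_redesign:
        return "Refactor"
    if w.benefits_from_managed_services:
        return "Replatform"
    if w.vm_platform_portable:
        return "Relocate"
    return "Rehost"


if __name__ == "__main__":
    portfolio = [
        Workload("legacy-fax-gateway", still_needed=False),
        Workload("plant-floor-controller", must_stay_on_premises=True),
        Workload("email-server", saas_alternative_exists=True),
        Workload("file-share"),
    ]
    for w in portfolio:
        print(w.name, "->", suggest_strategy(w))
```

Writing the rules down, even in a simplified form like this, makes the reasoning behind each workload's strategy reviewable at governance checkpoints.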

Step 5: Plan Migration Phases and Prioritization

Instead of one big move, cloud migration should occur in planned stages. Workloads should be grouped into migration waves based on their dependencies, complexity, and importance. This approach lowers risk while keeping services operating as intended.

A phased plan typically includes:

- Pilot program to test procedures and technologies on low-risk workloads.

- Core migration phase that moves significant applications in sequenced waves.

- Stabilization phase to monitor performance and fix any issues after migration.

- Optimization phase to improve cost, scalability, and operations.

Every stage should specify acceptance standards, testing checkpoints, rollback protocols, and entry and exit criteria. This reduces downtime and allows workloads to be moved in a predictable, orderly manner that aligns with business goals.
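
As an illustration of dependency-based wave planning, the sketch below groups workloads so that each wave contains only workloads whose dependencies were moved in earlier waves. Migrating dependencies first is an assumption made for this example, and the workload names are hypothetical. It uses `graphlib` from the Python standard library (Python 3.9 or later).

```python
from graphlib import TopologicalSorter

# Hypothetical workloads mapped to the workloads they depend on.
WORKLOADS = {
    "reporting": ["warehouse"],
    "warehouse": ["crm-db"],
    "crm": ["crm-db"],
    "crm-db": [],
}


def plan_waves(depends_on):
    """Group workloads into ordered waves based on their dependencies."""
    sorter = TopologicalSorter(depends_on)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # everything unblocked right now
        waves.append(ready)
        sorter.done(*ready)
    return waves


if __name__ == "__main__":
    for number, wave in enumerate(plan_waves(WORKLOADS), start=1):
        print(f"Wave {number}: {', '.join(wave)}")
```

Complexity and business importance would still need to be weighed by the migration team; this only enforces the dependency ordering.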

Step 6: Select Proper Tools and Automation

The right tools improve migration speed, reproducibility, and robustness by enabling consistent execution and reducing manual effort. These tools should support dependency discovery and mapping, environment replication, data synchronization, Infrastructure-as-Code, configuration management, CI/CD pipelines, process orchestration, automated and performance testing, rollback validation, monitoring, and logging.

Automated configuration management, pipeline-based deployments, and Infrastructure-as-Code minimize manual intervention and keep environments consistent. They also support rollback, validation, and quick fixes when unforeseen issues occur during migration.

Step 7: Integrate Security and Compliance

Security and compliance need to be part of the roadmap from the start; they should not be added later.

Key security activities include:

- Classifying data and mapping regulatory obligations.

- Defining encryption for data in transit and at rest.

- Implementing identity and access management policies.

- Establishing logging, monitoring, and incident response workflows.

Integrating security early reduces rework, minimizes operational risk, and strengthens organizational trust in the migration process.

Step 8: Execute Migration and Validate

Once the planning and governance structure is in place, migration execution begins in accordance with the migration phase plan. Each migrated workload should be validated before it is accepted.

Key validation activities include:

- Testing application functions in the operational environment.

- Benchmarking performance against pre-migration baselines.

- Verifying security and access controls.

- Conducting user acceptance testing and operational readiness reviews.

Rollback plans must be prepared for every migration to maintain business continuity in the face of unexpected failures.
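
Benchmarking against a pre-migration baseline can be reduced to a simple, repeatable check. The sketch below compares 95th-percentile latency before and after migration; the 10% tolerance and the sample values are assumptions chosen for illustration.

```python
from statistics import quantiles


def p95(samples):
    """95th percentile: the last of the 19 cut points for n=20."""
    return quantiles(samples, n=20)[-1]


def within_baseline(baseline_ms, migrated_ms, tolerance=0.10):
    """True if migrated p95 latency is no more than `tolerance` above baseline."""
    return p95(migrated_ms) <= p95(baseline_ms) * (1 + tolerance)


if __name__ == "__main__":
    # Hypothetical latency samples in milliseconds.
    baseline = [110, 120, 115, 130, 125, 118, 122, 140, 135, 128]
    migrated = [105, 118, 112, 126, 121, 117, 119, 138, 131, 124]
    print("baseline p95:", round(p95(baseline), 1))
    print("migrated p95:", round(p95(migrated), 1))
    print("acceptable:", within_baseline(baseline, migrated))
```

A failed check of this kind is one possible trigger for the rollback plan.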

Step 9: Post-Migration Optimization and Continuous Improvement

Post-migration optimization helps workloads run efficiently, securely, and cost-effectively in the cloud. After stabilization, organizations should review performance metrics, resource use, and workload behavior. This review identifies opportunities for improvement, such as adjusting instance sizes, fine-tuning autoscaling policies, increasing storage efficiency, and modifying network setups.

Cost governance should be reinforced through monitoring, budgeting controls, and usage analysis. Security controls, access policies, and compliance requirements must be reassessed in the new environment.

Continuous improvement enables teams to modernize further, adopt cloud-native services, strengthen cloud technology capabilities, and enhance reliability and long-term business value.

From Planning to Execution: Realizing the True Value of Cloud Migration

A cloud migration roadmap is an essential strategic instrument that guides an organization through a complex transformation journey. By using a straightforward, lifecycle-driven method, organizations can reduce risk, manage costs, maintain operational continuity, and gain ongoing business value from cloud adoption.

At Telliant Systems, we help enterprises architect, plan, and execute cloud migration programs that align technology modernization with business outcomes. Our consulting and engineering teams work closely with organizations to design migration roadmaps that are practical, measurable, and tailored to their strategic priorities.

Digital platforms have become an essential part of everyday life, allowing people to access services, information, education, healthcare, and financial systems online. However, many websites are still difficult to use for individuals with visual, auditory, cognitive, or motor impairments. When websites are not developed with accessibility in mind, users with assistive technologies, such as screen readers, face difficulties accessing the content.

This is why many organizations are working toward WCAG compliance so that their platforms are accessible to and usable by all. WCAG is a well-known set of website accessibility guidelines that helps organizations create websites that are usable across different platforms and technologies. Improving web accessibility compliance benefits people with disabilities and enhances usability for all.

1. What Is WCAG?

The Web Content Accessibility Guidelines (WCAG) are standards for accessible digital products and services, developed by the World Wide Web Consortium (W3C), specifically through its Web Accessibility Initiative (WAI). Organizations seeking WCAG conformance adhere to these WCAG accessibility guidelines to make digital products and services accessible to users with different physical, mental, and sensory limitations.

The WCAG accessibility standards are generally applicable to a range of digital spaces and technology infrastructures.

They are relevant for:

- Websites: Public portals and e-commerce websites must adhere to WCAG guidelines to ensure that users with disabilities can access and use their services.

- Web Applications: Interactive applications such as dashboards, SaaS platforms, and enterprise software must follow WCAG accessibility guidelines to ensure accessibility across complex user interfaces and dynamic components.

- Mobile Applications: Mobile applications on devices such as smartphones and tablets need to be WCAG-compliant so that the user interface and content are easily usable with screen readers and accessibility settings.

- Digital Platforms: Government portals, learning platforms, and digital service ecosystems often enforce WCAG compliance requirements as a baseline requirement for inclusive user experiences.

2. Understanding WCAG Versions

Driven by advances in technology and accessibility research, WCAG continues to introduce updates that support organizations in improving accessibility and managing new digital accessibility challenges. The evolution of accessibility guidelines is important to ensure that various digital platforms and complex user interfaces are accessible across various devices and assistive technologies.

The major versions of WCAG include the following:

- WCAG 1.0 (1999): The first official version of the guidelines introduced foundational accessibility concepts for early web environments. WCAG 1.0 primarily focused on static HTML content and specified checkpoints to enhance accessibility for screen readers and assistive technologies available at the time. Although this version is historically important, it has become obsolete with the introduction of modern web applications and content frameworks.

- WCAG 2.0 (2008): WCAG 2.0 provided a technology-independent model designed to accommodate evolving web technologies and interactive digital media. It defined the fundamental accessibility principles of Perceivable, Operable, Understandable, and Robust, which still underpin modern WCAG guidelines. Many international accessibility regulations still reference WCAG 2.0 as a baseline framework for website accessibility standards.

- WCAG 2.1 (2018): The release of WCAG 2.1 expanded the existing framework to address accessibility challenges associated with mobile devices, touch interfaces, and low vision users. Organizations that want to follow the WCAG guidelines can use the WCAG 2.1 criteria covering mobile devices, keyboards, and assistive technologies.

- WCAG 2.2 (2023): The latest update adds guidance to improve the accessibility of digital content for users with cognitive disabilities and for complex interaction patterns. WCAG 2.2 introduces new requirements for navigation, authentication, and interactive interface components, making its compliance requirements stricter than those of earlier versions.

3. Benefits of WCAG Compliance

Organizations that attain WCAG compliance on their digital platforms benefit from improved user interfaces, better user experience, and higher customer satisfaction. Organizations that adopt website accessibility standards can reach a wider audience than those that do not, as individuals with disabilities can interact with digital content more effectively.

Organizations are also focusing on web accessibility compliance because improving accessibility has been shown to positively impact mobile usability, search engine optimization, and the overall clarity and consistency of the user interface. In addition, meeting established WCAG compliance requirements helps organizations reduce legal risks associated with accessibility lawsuits and regulatory violations in jurisdictions that require accessible digital services.

4. The Four Core WCAG Principles

The entire WCAG model is based on four basic principles for accessible design for users with disabilities.

- Perceivable: Digital content must be made available to users through the senses they can perceive. For example, images should include alternative text, videos should include captions, and text should have good color contrast so users with low vision can easily read it. Following these practices supports inclusive design and helps organizations meet modern WCAG accessibility standards.

- Operable: The interfaces should enable the user to interact with navigation elements and functionality using various input methods, such as the keyboard, voice commands, and assistive technology. All interactive elements, such as menus, forms, and buttons, should be accessible via the keyboard, enabling users to use the website effectively without a mouse and ensuring WCAG compliance.

- Understandable: Information and user interface behavior must be predictable, readable, and logically structured so that users can easily understand digital interactions. Users should be able to complete tasks easily through clear form instructions, predictable interface behavior, and meaningful error messages, which align with established WCAG accessibility guidelines.

- Robust: The digital content must be compatible with assistive technologies and new web browsers, allowing the user to access the information on various devices and environments. Using appropriate HTML structure, accessible coding, and screen reader components will ensure long-term accessibility and usability of the content and its alignment with the WCAG accessibility guidelines.
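
The color contrast requirement mentioned under Perceivable can be checked numerically. The sketch below follows the relative luminance and contrast ratio definitions published in WCAG 2.x and compares the result with the Level AA thresholds of 4.5:1 for normal text and 3:1 for large text. The function names are illustrative, not part of any library.

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to its linear value per the WCAG 2.x definition."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb):
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground, background):
    """Contrast ratio between two (R, G, B) colors, from 1.0 to 21.0."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground, background, large_text=False):
    threshold = 3.0 if large_text else 4.5
    return contrast_ratio(foreground, background) >= threshold


if __name__ == "__main__":
    white = (255, 255, 255)
    for name, color in [("black", (0, 0, 0)), ("mid gray", (119, 119, 119))]:
        ratio = contrast_ratio(color, white)
        print(f"{name} on white: {ratio:.2f}:1, AA normal text: {passes_aa(color, white)}")
```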

5. WCAG Conformance Levels

WCAG defines three conformance levels that indicate the degree to which a website satisfies accessibility success criteria defined in the guidelines.

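| Conformance Level | Description | Accessibility Impact |
|---|---|---|
| Level A | Minimum level of conformance, covering the most basic success criteria | Removes the most severe barriers, but many users may still face difficulties |
| Level AA | Includes all Level A criteria plus additional criteria, such as minimum color contrast | Addresses the most common barriers and is the level most often referenced by regulations |
| Level AAA | Includes all Level A and AA criteria plus the strictest criteria | Provides the highest level of accessibility, but is not always achievable for all content |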

Most organizations aim for Level AA because it satisfies widely accepted website accessibility standards while maintaining practical development feasibility.

6. Common WCAG Compliance Issues

- Images that lack alternative text cannot be described by screen readers to users with visual impairments.

- Poor contrast between text and background makes it difficult for users with low vision to read the content.

- Navigation elements that cannot be accessed through keyboard input create barriers for users who rely on assistive devices.

- An improper heading structure makes it difficult for screen readers to interpret the page hierarchy correctly.

- Forms without clear labels or instructions prevent users from completing tasks effectively.

- Buttons and links without descriptive labels confuse screen readers and reduce accessibility.
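
Some of these issues can be detected with very little code. As a minimal sketch, the checker below uses Python's standard `html.parser` module to list images that have no `alt` attribute at all. It treats an empty `alt=""` as acceptable, since that is the usual way to mark decorative images, and it is not a substitute for a full accessibility scanner.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Collect the src of every <img> tag that has no alt attribute."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "img" and "alt" not in attributes:
            self.missing.append(attributes.get("src", "<no src>"))


def images_missing_alt(html):
    finder = MissingAltFinder()
    finder.feed(html)
    return finder.missing


if __name__ == "__main__":
    # Hypothetical page fragment.
    page = """
    <img src="logo.png" alt="Company logo">
    <img src="chart.png">
    <img src="divider.png" alt="">
    """
    print(images_missing_alt(page))
```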

Pro Tip:

To maintain compliance with the Web Content Accessibility Guidelines, organizations should integrate accessibility testing into their regular development workflow. Teams can use tools such as axe DevTools, WAVE, and Lighthouse to identify accessibility issues early and ensure that websites remain aligned with WCAG requirements as new features and updates are introduced.

7. How to Test a Website for WCAG Compliance

Testing accessibility requires a combination of automated tools, manual testing procedures, and assistive technology validation methods. Automated accessibility scanners help identify structural issues such as missing alt text, color contrast violations, and invalid HTML attributes that may affect WCAG compliance. However, automated testing alone cannot identify every accessibility problem because some usability issues require human evaluation.

Manual testing plays a critical role in validating navigation, interactive components, and user workflows across different accessibility scenarios. Accessibility specialists often test keyboard navigation, screen reader compatibility, and focus management to ensure full compliance with web accessibility standards.

Combining automated scanning, usability evaluation, and accessibility audits helps organizations keep pace with changing WCAG guidelines and requirements.

8. How to Achieve WCAG Compliance

- A comprehensive accessibility audit must be conducted to assess the website’s compliance with WCAG accessibility standards.

- The accessibility issues must be identified, and the remediation activities for the website’s design and development components must be prioritized.

- Color contrast, navigation, forms, and keyboard accessibility should be improved wherever the audit finds gaps.

- A structured WCAG compliance checklist should be followed to facilitate accessibility implementation across all pages and features of the website.

- Accessibility testing should be conducted to ensure compliance with all WCAG guidelines.

- Accessibility practices should be incorporated into design and development processes to ensure long-term compliance with web accessibility guidelines.

9. WCAG Compliance and Legal Regulations

Across global accessibility regulations, WCAG is widely referenced as the primary guideline, and government agencies and courts often require organizations to demonstrate compliance when evaluating websites. In the United States, accessibility lawsuits often reference WCAG success criteria when interpreting requirements under the Americans with Disabilities Act.

Similarly, the European Union Web Accessibility Directive requires public sector websites to adhere to internationally accepted accessibility standards, such as those set out in the WCAG guidelines. Many jurisdictions require compliance with WCAG 2.1 or WCAG 2.2 as a component of their digital accessibility regulations and policies. Following these internationally accepted WCAG accessibility guidelines helps organizations reduce legal risk while ensuring accessible digital services.

10. Best Practices for Accessible Website Design

- Incorporate inclusive design principles early in the product development cycle to achieve better accessibility outcomes.

- Use clear typography, proper color contrast, and a consistent layout to facilitate accessible navigation.

- Make sure that all forms, menus, and buttons have descriptive labels and provide focus indicators.

- Organize headings properly so that assistive technologies can understand the page hierarchy.

- Include captions and transcripts of multimedia content to facilitate users with hearing disabilities.

- Adhere to contemporary accessibility guidelines from WCAG to ensure continued compliance with accessibility standards.
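
The heading-structure practice above lends itself to a simple automated check. The sketch below, again using the standard `html.parser` module, reports places where a page jumps over a heading level (for example from `h2` straight to `h4`). Treating a page that does not start at `h1` as a problem is an assumption of this example.

```python
import re
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Record heading levels (1 to 6) in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if re.fullmatch(r"h[1-6]", tag):
            self.levels.append(int(tag[1]))


def skipped_levels(html):
    """Return (previous_level, level) pairs where a heading level was skipped."""
    parser = HeadingOutline()
    parser.feed(html)
    problems = []
    previous = 0  # 0 means "no heading seen yet"
    for level in parser.levels:
        if level > previous + 1:
            problems.append((previous, level))
        previous = level
    return problems


if __name__ == "__main__":
    page = "<h1>Guide</h1><h2>Setup</h2><h4>Details</h4><h2>Usage</h2>"
    print(skipped_levels(page))
```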

11. Maintaining WCAG Compliance Over Time

Accessibility should be considered an ongoing operational activity rather than just a one-time compliance activity. As websites are updated and new features are added, along with design and content updates, accessibility problems may creep back in without proper governance mechanisms in place. It is recommended that periodic audits of websites be conducted to ensure compliance with updated accessibility guidelines and to prevent problems that have been resolved earlier from creeping back into the websites.

Continuous monitoring tools can be used to identify accessibility issues and support ongoing WCAG compliance. It is also important to use accessibility testing to ensure that new code does not break WCAG compliance requirements during continuous integration. Maintaining alignment with evolving standards, such as WCAG 2.2 compliance, ensures that digital platforms remain inclusive as technology and accessibility research continue to advance.

12. Conclusion

Accessibility is no longer a niche technical concern but a fundamental aspect of inclusive digital experiences. Organizations that adopt a structured accessibility approach aligned with WCAG can build platforms that remain accessible to a broader audience. By working toward WCAG compliance, organizations can improve usability, reduce legal risk, and align with international website accessibility standards.

Businesses that integrate accessibility into design systems, development workflows, and quality assurance processes achieve stronger long-term web accessibility compliance. As digital ecosystems continue evolving, following established WCAG accessibility guidelines and meeting defined WCAG compliance requirements will remain essential for organizations committed to equitable and inclusive digital access.

While the adoption of digital technology has made data more accessible, it has also led to data fragmentation across systems that lack standardized communication protocols. This creates significant barriers to seamless healthcare data exchange across clinical, administrative, and patient engagement platforms.

Healthcare Interoperability enables clinical and non-clinical data to move securely across applications, systems, and institutional boundaries without compromising context, accuracy, or compliance requirements. In the absence of an effective data integration framework, healthcare providers are likely to face delayed diagnoses, repeated procedures, incomplete patient records, and inefficient administrative processes, all of which impact patient outcomes and organizational performance.

Levels of Interoperability in Healthcare Integration

Healthcare Interoperability is structured across multiple operational levels that determine how healthcare data exchange occurs between systems and how effectively that data is interpreted and utilized across integrated digital health environments.

- Foundational Interoperability: This level establishes the basic connectivity needed for one system to send data and another to receive it. It ensures connectivity between the integrated platforms, but it does not guarantee that the receiving system can process or use the data.

- Structural Interoperability: This level standardizes the format, syntax, and organization of exchanged data so that information fields remain consistent and properly aligned during transmission between systems.

- Semantic Interoperability: This level maintains the meaning and context of the transmitted data, enabling the receiving application to correctly interpret medical terminology, diagnoses, and treatments without requiring manual interpretation.

- Organizational Interoperability: This level incorporates governance policies, regulatory frameworks, and workflow alignment to support coordinated Healthcare Integration across institutions, payer networks, and care-delivery environments.

Each interoperability level strengthens Data Integration by supporting accurate, compliant, and scalable healthcare data exchange across interconnected healthcare systems.

Evolution of Healthcare Data Exchange Standards

As healthcare technology adoption increased, the need for standardized messaging protocols became essential to support interoperability across heterogeneous environments. Health Level Seven (HL7) was introduced as a set of international standards for the structured exchange of clinical data between healthcare applications. HL7 Version 2 supports standardized messaging for transmitting patient admission data, laboratory results, discharge summaries, and billing information, while HL7 Version 3 improves semantic interoperability through a more structured data modelling approach.

Clinical Document Architecture is an HL7 standard that enables the representation of electronic clinical documents using a common framework. Traditional HL7 implementations relied on message-based integration, which required custom interface engines and manual data mapping to maintain interoperability across systems.

HL7 for Healthcare Data Integration

HL7 continues to play a foundational role in Healthcare Interoperability by enabling message-driven communication between clinical systems that operate within hospital networks and enterprise healthcare environments. The HL7 architecture is based on structured message segments that represent patient demographics, diagnostic results, medication orders, treatment plans, and administrative information.

Healthcare organizations frequently use HL7 messaging for integrating electronic health record systems with laboratory platforms, radiology imaging systems, pharmacy databases, and billing applications.

Common HL7 message types that support healthcare data exchange include:

- Admission Discharge Transfer messages

- Order Entry communications

- Observation Reporting messages

Maintaining consistent data integration across evolving healthcare infrastructures often requires dedicated interface management strategies that ensure message integrity and semantic consistency.
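
To make the segment structure concrete, the sketch below parses a small HL7 Version 2 style message using plain string handling. The sample message, application names, and identifiers are hypothetical, and a production interface would normally rely on an interface engine or a dedicated HL7 library that also handles escape sequences and repetitions.

```python
# Hypothetical ADT^A01 (admission) message. HL7 v2 separates segments with
# carriage returns and fields with the "|" character.
SAMPLE_MESSAGE = "\r".join([
    "MSH|^~\\&|ADT_APP|GENERAL_HOSPITAL|EHR|GENERAL_HOSPITAL|202401150830||ADT^A01|MSG00001|P|2.5",
    "PID|1||123456^^^GENERAL_HOSPITAL^MR||DOE^JANE||19800215|F",
    "PV1|1|I|WARD1^101^A",
])


def parse_segments(message):
    """Return a dict mapping segment IDs (MSH, PID, ...) to lists of field lists."""
    segments = {}
    for line in filter(None, message.split("\r")):
        fields = line.split("|")
        segments.setdefault(fields[0], []).append(fields)
    return segments


def message_type(segments):
    """Return (message code, trigger event) from MSH-9."""
    msh = segments["MSH"][0]
    # MSH-1 is the field separator itself, so MSH-9 sits at list index 8.
    code, _, trigger = msh[8].partition("^")
    return code, trigger


def patient_name(segments):
    """Return (family, given) from PID-5; PID fields match list indexes."""
    family, _, rest = segments["PID"][0][5].partition("^")
    return family, rest.split("^")[0]


if __name__ == "__main__":
    parsed = parse_segments(SAMPLE_MESSAGE)
    print(message_type(parsed))
    print(patient_name(parsed))
```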

FHIR for Modern Healthcare Integration

Fast Healthcare Interoperability Resources (FHIR) represents a significant advancement in healthcare integration by enabling API-driven healthcare data exchange through a resource-based architecture that aligns with modern web standards. Unlike message-oriented communication, FHIR breaks down clinical data into modular resources, such as patients, medications, diagnostic results, procedures, and care plans, that can be retrieved via RESTful APIs.

FHIR also supports common data formats, including JSON and XML, which allow healthcare developers to more easily build bridges between clinical systems, mobile health apps, cloud platforms, analytics systems, and remote monitoring devices. This approach enhances interoperability across distributed healthcare ecosystems by enabling real-time data retrieval and scalable deployment across enterprise environments.
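
As a brief illustration of the resource model, the sketch below reads a FHIR Patient resource in JSON and builds the URL for a RESTful read, which follows the pattern `[base]/[type]/[id]`. The patient data and the base URL are hypothetical, and no network request is made.

```python
import json

# Hypothetical Patient resource as a server might return it.
PATIENT_JSON = """
{
  "resourceType": "Patient",
  "id": "example-123",
  "name": [{"family": "Doe", "given": ["Jane"]}],
  "gender": "female",
  "birthDate": "1980-02-15"
}
"""


def summarize_patient(raw):
    """Extract a few commonly used fields from a Patient resource."""
    resource = json.loads(raw)
    if resource.get("resourceType") != "Patient":
        raise ValueError("expected a Patient resource")
    names = resource.get("name") or [{}]
    first = names[0]
    full_name = " ".join(first.get("given", []) + [first.get("family", "")]).strip()
    return {
        "id": resource.get("id"),
        "name": full_name,
        "birth_date": resource.get("birthDate"),
    }


def read_url(base_url, resource_type, resource_id):
    """Build the URL for a FHIR read interaction: GET [base]/[type]/[id]."""
    return f"{base_url.rstrip('/')}/{resource_type}/{resource_id}"


if __name__ == "__main__":
    print(summarize_patient(PATIENT_JSON))
    # The base URL below is a placeholder, not a real endpoint.
    print(read_url("https://fhir.example.org/r4/", "Patient", "example-123"))
```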

Healthcare organizations implementing FHIR-based data integration can improve patient engagement initiatives, streamline care coordination workflows, and support advanced analytics capabilities that rely on consistent clinical datasets.

HL7 vs FHIR: Comparative Analysis

Both HL7 and FHIR contribute to Healthcare Interoperability by addressing different integration requirements across healthcare environments. HL7 remains suitable for internal enterprise messaging, while FHIR supports modern application-level integration across cloud-based ecosystems and patient-facing platforms.

Healthcare Data Integration Architecture in Practice

Healthcare Interoperability in the enterprise world relies on a structured Data Integration architecture that supports standardized healthcare data exchange between legacy and new healthcare systems. Interface engines or integration middleware facilitate HL7 message routing, transformation, and connectivity between electronic health records, lab systems, radiology systems, and billing systems.

For FHIR-based integration, API gateways provide secure access to clinical resources from mobile apps, cloud analytics platforms, and remote monitoring devices. Data transformation layers keep FHIR resources and HL7 messaging formats compatible, and Master Patient Index systems resolve patient identities across systems.
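A data transformation layer of this kind can be sketched as a function that maps an HL7 v2 PID segment to a FHIR Patient resource. The example below is a simplified illustration, not a complete mapping: it assumes the identifier is in PID-3, the name in PID-5, the date of birth in PID-7 (as YYYYMMDD), and the administrative sex in PID-8. The sample segment and the function name are hypothetical.

```python
from datetime import datetime

# HL7 v2 administrative sex codes mapped to FHIR gender values.
GENDER_MAP = {"M": "male", "F": "female", "O": "other", "U": "unknown"}


def pid_to_patient(pid_segment: str) -> dict:
    """Map a pipe-delimited PID segment to a minimal FHIR Patient dict."""
    fields = pid_segment.split("|")
    # After splitting, index n holds PID-n (index 0 is the segment ID "PID").
    identifier = fields[3].split("^")[0]
    family, given = (fields[5].split("^") + [""])[:2]
    # FHIR dates use the YYYY-MM-DD form.
    birth_date = datetime.strptime(fields[7], "%Y%m%d").date().isoformat()
    return {
        "resourceType": "Patient",
        "identifier": [{"value": identifier}],
        "name": [{"family": family, "given": [given]}],
        "gender": GENDER_MAP.get(fields[8], "unknown"),
        "birthDate": birth_date,
    }


# Illustrative segment; the identifier and demographics are made up.
PID = "PID|1||123456^^^MAIN_HOSPITAL^MR||DOE^JANE||19800101|F"
print(pid_to_patient(PID))
```

Real mappings also need to handle missing fields, multiple identifiers, and site-specific conventions, which is where semantic inconsistencies tend to surface.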

Implementation Challenges in Healthcare Interoperability

Healthcare organizations pursuing enterprise-wide healthcare integration often encounter technical and operational barriers that affect data integration outcomes. Legacy clinical systems may lack support for modern interoperability standards, requiring additional middleware or interface engines to enable healthcare data exchange across applications.

Semantic inconsistencies between data models may introduce interpretation errors, while regulatory requirements for data privacy and security mean that governance policies must be enforced wherever data is exchanged. Scalability may also become an issue as healthcare organizations expand their digital health services across multiple facilities.

Addressing these issues requires implementation strategies that align technical architecture with compliance and organizational goals.

Best Practices for Scalable Healthcare Data Exchange

Healthcare providers seeking to implement sustainable Healthcare Interoperability initiatives should consider the following best practices to ensure scalable and secure healthcare data exchange.

- Adopt standards-based Healthcare Integration strategies that emphasize scalability, data management, and compatibility.

- Use middleware tools to enable message processing and routing in a multi-vendor environment.

- Implement API gateways to facilitate secure interactions between FHIR-enabled applications.

- Invest in semantic data normalization infrastructure to guarantee a common understanding of clinical data by integrated systems.

- Continuously evaluate interoperability performance and manage version updates of integration standards.

- Foster collaboration among stakeholders in IT and clinical domains to facilitate collective data integration efforts.

By integrating HL7 messaging with FHIR APIs, healthcare organizations can create an interoperability framework that is adaptable and innovative.

Conclusion

Healthcare Interoperability is a crucial requirement for secure, scalable data exchange across current healthcare and administrative use cases. HL7 and FHIR enable healthcare integration by supporting both legacy messaging requirements and API-based data integration. HL7 facilitates structured communication between enterprise applications, while FHIR allows real-time interoperability between cloud-hosted applications, analytics systems, and other healthcare applications. By adopting a standardized integration approach based on HL7 and FHIR, organizations can optimize their operations, meet regulatory compliance requirements, encourage greater patient involvement in their own care, and create a sustainable data exchange.

The New Reality of Healthcare Software

Healthcare software development has moved far beyond basic digitization of patient records and administrative processes. Modern healthcare organizations use application software to store large volumes of patient information; this software enables providers to make decisions and collaborate with other organizations in real time. As healthcare providers deliver care to patients through electronic and physical channels, software also plays a significant role in patient safety, operational efficiency, and regulatory compliance.

The growing reliance on digital systems has also heightened the risks to healthcare data security. Cyberattacks frequently target healthcare organizations because of the sensitive nature of protected health information and its long-term value. Secure healthcare application development must therefore extend beyond perimeter-based security to cover the infrastructure, application logic, data, storage, and integration layers.

At the same time, healthcare interoperability has become a foundational requirement rather than a future goal. The effectiveness of clinical outcomes, care coordination, and patient experience now depends on how accurately and efficiently systems exchange data across organizational and technical boundaries.

Several industry-wide shifts are transforming the design and deployment of modern healthcare software platforms.

- The rapid expansion of digital health platforms, telemedicine solutions, and mobile applications requires secure healthcare application development to ensure safe access to patient data across multiple environments and devices.

- The industry-wide push for standardized data exchange via FHIR and HL7 integration enables consistent communication among electronic health records, laboratories, imaging systems, and external partners.

- The growing complexity of healthcare system integration occurs as organizations connect cloud-native platforms with legacy clinical systems, medical devices, and health information exchanges.

Changing industry demands have altered expectations for healthcare software platforms, making it essential for performance, scalability, and usability to operate alongside strong security, compliance, and interoperability standards.

Software teams can no longer afford to prioritize speed or functionality over data protection or integration readiness. In the current healthcare landscape, software must be designed around a security-first architecture and interoperability to support resilient, compliant, and adaptable healthcare systems.

Core Pillars of Modern Healthcare Software Architecture

Modern healthcare software architecture must support far more than functional requirements. To balance functionality with these broader demands, successful healthcare platforms are built on a set of foundational architectural pillars that guide design decisions across every layer of the system.

Such architectural pillars serve as interconnected principles that shape how healthcare software development addresses security, interoperability, scalability, performance, and compliance.

1. Security-First Architecture

A security-first architecture places healthcare data protection at the center of system design rather than treating it as an afterthought. Given the sensitive nature of clinical and patient data, healthcare data security must be embedded into infrastructure, application logic, and data workflows from the earliest stages of development.

Security-first healthcare software architectures emphasize strong identity controls, secure data storage, encrypted communication channels, and continuous monitoring across all system components. This approach ensures that patient information remains protected as it moves between users, applications, and integrated systems, while also reducing the risk of breaches, unauthorized access, and operational disruptions.

2. Interoperable Design

Interoperable design enables healthcare systems to exchange data accurately, consistently, and in real time across internal and external platforms. To support coordinated care delivery and complete visibility into patient care, healthcare software platforms must let different systems communicate easily with one another, including electronic health records, laboratories, imaging, pharmacies, and other vendors and partners.

Standardizing data exchange with other software vendors through FHIR and HL7 enables healthcare organizations to break free from data silos and the limitations of legacy systems. An interoperable design ensures that healthcare system integration efforts remain scalable and future-ready, enabling the addition of new systems and partners without extensive reengineering.

3. Scalable Data Pipelines

Healthcare platforms generate and consume massive volumes of structured and unstructured data, including clinical notes, diagnostic results, device telemetry, and patient-generated health data. Scalable data pipelines are essential for managing this growth while maintaining data integrity, availability, and performance.

Scalable ingestion, processing, and storage mechanisms that can handle varying workloads without degrading service are essential to modern healthcare software architectures. These pipelines ensure secure data flows between linked systems and applications while supporting analytics, reporting, and clinical insights.

4. High Performance and Reliability

Performance is crucial for all healthcare systems, as patient care outcomes can be affected by service interruptions or instability. Healthcare software must stay responsive, scale to support many concurrent users, and remain available during periods of high utilization.

Performance-focused designs prioritize efficient data access, optimized APIs, fault tolerance, and robust infrastructure design. This guarantees that, as system complexity and usage continue to rise, clinicians, administrators, and patients can rely on healthcare applications for prompt access to vital information.

5. Compliance by Design

Instead of addressing regulatory requirements through manual workflows or post-deployment audits, healthcare software teams that adopt a compliance-by-design approach integrate them directly into the architecture. For healthcare platforms, this includes building HIPAA-compliant software that enforces privacy, access control, auditability, and data protection across all components.

Healthcare software developers can reduce risk and make compliance easier by integrating compliance into their products’ workflows, access methods, and database management processes. With a compliance-by-design approach, these products can continue to evolve with changes in compliance requirements without requiring substantial architectural changes.

A Security-First Approach to Healthcare Software Development

Ransomware attacks, credential abuse, and data leakage across interconnected systems are just a few of the many security risks that healthcare organizations must address. Healthcare software must follow a well-organized, defense-in-depth strategy to safeguard patient data and maintain regulatory compliance. The ten steps that follow outline a workable approach for strengthening healthcare data security while promoting interoperability and operational resilience.

1. Apply HIPAA Technical Safeguards by Design

To comply with the HIPAA Technical Safeguards, modern healthcare platforms should build access control, authentication, system activity logging, and transmission protection directly into their system architecture. These protections then apply consistently across all applications and integration components, without requiring manual intervention for each one.

2. Encrypt Data at Rest and in Transit

To protect sensitive health information, robust encryption must be applied consistently. To ensure confidentiality and integrity, data stored in databases, file systems, and backups should be encrypted at rest using AES-256, and healthcare data transmitted between systems should be protected in transit using TLS.
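For the in-transit half of this step, a client-side TLS configuration might look like the minimal sketch below, which uses Python's standard `ssl` module. The TLS 1.2 minimum is an assumption chosen for illustration, since the text above does not specify a version, and the function name is hypothetical. Encryption at rest with AES-256 is not shown because it typically relies on a database feature or a third-party cryptography library.

```python
import ssl


def make_client_tls_context() -> ssl.SSLContext:
    """Create a client TLS context that verifies certificates and hostnames.

    The TLS 1.2 floor is an assumption for this sketch; an organization's
    own security policy determines the minimum version actually required.
    """
    context = ssl.create_default_context()
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = make_client_tls_context()
print(context.minimum_version, context.verify_mode, context.check_hostname)
```

Starting from `ssl.create_default_context()` keeps certificate and hostname verification enabled, so the sketch only tightens the defaults rather than replacing them.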

3. Implement Role-Based Access Control

Role-based access control ensures that users can access only the data required for their responsibilities. Clinical staff, administrators, and support teams should have clearly defined permissions that enforce the principle of least privilege across all healthcare systems.
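A deny-by-default permission check illustrates the idea. The following is a minimal sketch in which the role names and permission strings are hypothetical examples, not a recommended permission model.

```python
# Hypothetical roles and permissions, chosen only to illustrate least privilege.
ROLE_PERMISSIONS = {
    "clinician": {"read_clinical_record", "write_clinical_note"},
    "billing_admin": {"read_billing_record", "update_billing_record"},
    "support": {"read_system_status"},
}


def is_allowed(role: str, permission: str) -> bool:
    """Deny by default: unknown roles and unlisted permissions return False."""
    return permission in ROLE_PERMISSIONS.get(role, set())


print(is_allowed("clinician", "read_clinical_record"))  # granted to this role
print(is_allowed("support", "read_clinical_record"))    # not granted to this role
```

Keeping the mapping in one place makes it easier to review who can do what, and the default of an empty permission set means a misconfigured or unknown role gets no access.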

4. Enforce Multi-Factor Authentication

Multi-factor authentication (MFA) helps confirm a user’s identity by requiring more than just a password. It significantly reduces the likelihood of unauthorized access across distributed healthcare systems and applications, even if passwords are stolen.
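One widely used second factor is a time-based one-time password (TOTP), as generated by authenticator apps. The sketch below implements the standard HOTP and TOTP calculations (RFC 4226 and RFC 6238) with HMAC-SHA-1 using only the Python standard library. It is an illustration of the mechanism and an assumption about which factor is used, since the text above does not name one. The function names are hypothetical, and a real deployment would normally rely on a vetted identity provider.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) using HMAC-SHA-1."""
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation offset
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, at_time: float, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over a time-step counter."""
    return hotp(secret, int(at_time) // step, digits)


def verify_second_factor(secret: bytes, submitted: str,
                         at_time: Optional[float] = None) -> bool:
    """Check a submitted code against the current time step only."""
    now = time.time() if at_time is None else at_time
    expected = totp(secret, now)
    # Constant-time comparison avoids leaking how many digits matched.
    return hmac.compare_digest(expected.encode(), submitted.encode())
```

This sketch accepts only the code for the current 30-second step. Production systems usually tolerate a small amount of clock drift and rate-limit attempts, both of which are omitted here.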

5. Centralize Identity and Access Management

Identity and access management (IAM) systems unify authentication, authorization, and user lifecycle management across healthcare platforms. IAM ensures that users, applications, and devices are granted the right level of access at the right time and for the right purpose.

6. Maintain Audit Trails and Activity Logging