For many organizations, the imperative for modernization arises when legacy enterprise apps become rigid, making incremental changes high-effort and error-prone. These systems often operate as tightly coupled, monolithic units where shared codebases and data stores stifle the agility required for rapid feature deployment or granular scaling.

As the product footprint expands, this architecture creates bottlenecks: release velocity slows, regression risk rises, and teams can neither isolate failures nor scale resources precisely to match real-world demand. To address this, organizations are adopting a structured technical migration strategy centered on microservices. This involves systematically decomposing the application into smaller, independently deployable services that align with business domains.

This strategic shift brings greater predictability to system evolution and enhances operational resilience. Ultimately, it establishes a modernized delivery model where teams can continuously evolve and scale the platform independently, effectively moving away from the constraints of large, high-risk release cycles.

Monolith vs Microservices: What Changes Technically

In a monolithic architecture, all parts of the application live in a single codebase and run on the same database and deployment pipeline. This works well in the beginning because development feels straightforward and easier to coordinate. Still, as the application grows and more features are added, the tight coupling and shared dependencies start to reduce flexibility and make changes harder to manage at scale.

With microservices, the application is divided into smaller, domain-aligned services that run independently and interact through APIs or messaging channels. This allows teams to scale specific components, release changes more frequently, and manage functionality in a more modular way. However, it also adds new operational and architectural responsibilities that must be handled through stronger governance and DevOps maturity.

Monolith vs Microservices: Technical Differences

| Dimension | Monolithic Architecture | Microservices Architecture |
|---|---|---|

When Migration Makes Business and Engineering Sense

This stage is not about adopting microservices for trend or technology alignment; rather, it is about recognizing the point at which a monolithic architecture begins to restrict both engineering productivity and business growth.

The necessity for a technical migration becomes apparent when the monolith starts hindering delivery speed and operational stability. At this juncture, the legacy structure makes independent scaling difficult and transforms routine operations—such as feature releases or incident management—into slow, high-risk processes that require a more decoupled, service-oriented approach.

- Performance and scalability constraints

  - Specific workloads are challenging to scale independently

  - Infrastructure costs rise because the entire application must scale together

  - Latency or reliability issues increase as usage grows

- Slow and risky release cycles

  - Every change requires broad regression testing

  - Deployment cycles become harder to coordinate across teams

  - A single failure can impact the entire application

Recommended Migration Approach for Legacy Enterprise Apps

A successful move from a monolithic legacy enterprise application to microservices is best achieved through a gradual, careful, and risk-conscious delivery approach. This methodology is significantly more effective than attempting a high-risk, “big bang” one-time transformation.

In most enterprise environments, this migration is carried out using a staged approach. This strategy allows modernization to progress incrementally, ensuring the core legacy enterprise application remains stable and continues to operate reliably throughout the entire transition.

- Strangler Fig Pattern (Primary Approach)

  To reduce migration risk and maintain stability, shrink the monolith gradually: route traffic through a gateway during the transition and extract modules into separate services one at a time.

- Modular Monolith as an Interim Step

  Before extracting services, the monolith is first divided into separate domains with clear ownership boundaries. This simplifies the codebase, reduces dependency overlap, and makes the decomposition process more organized and easier to manage.

- When Full Rebuild is Justified

  A complete rebuild allows the architecture to be redesigned from the ground up. However, it also increases costs, delivery risks, and dependence on specific skill sets, which is why it is usually considered only when the system is very outdated or tightly coupled.
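The gateway routing behind the strangler fig approach can be illustrated with a minimal sketch. It assumes a gateway that picks an upstream by path prefix; the class name `StranglerRouter`, the paths, and the internal URLs are hypothetical and stand in for whatever gateway product is actually used.

```python
from dataclasses import dataclass, field


@dataclass
class StranglerRouter:
    """Routes request paths to an extracted service or falls back to the monolith."""

    monolith_url: str
    # Maps a path prefix to the base URL of the service that now owns it.
    extracted: dict[str, str] = field(default_factory=dict)

    def extract(self, prefix: str, service_url: str) -> None:
        """Record that requests under `prefix` are now served by `service_url`."""
        self.extracted[prefix] = service_url

    def resolve(self, path: str) -> str:
        """Return the upstream base URL for a request path (longest prefix wins)."""
        matches = [
            p for p in self.extracted
            if path == p or path.startswith(p.rstrip("/") + "/")
        ]
        if not matches:
            return self.monolith_url
        return self.extracted[max(matches, key=len)]


# Hypothetical hosts, for illustration only.
router = StranglerRouter(monolith_url="http://monolith.internal")
router.extract("/invoices", "http://invoice-service.internal")

print(router.resolve("/invoices/42"))  # handled by the extracted service
print(router.resolve("/orders/7"))     # still handled by the monolith
```

Each newly extracted module adds one entry to the routing table, so the monolith keeps serving everything that has not yet been moved.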

Technical Migration Roadmap

A successful shift from monolith to microservices requires a clear, step-by-step plan that introduces changes gradually, keeping the application stable and the user experience intact. The aim is not just to break apart services, but also to ensure that each stage of this process is technically sound and operationally safe.

- Step 1: Discovery and Dependency Mapping

  The process begins with a close look at the codebase, database structures, and external integrations. This helps identify how modules are connected, where dependencies overlap, and whether any hidden interactions might affect the migration.

- Step 2: Identify the First Extractable Domain

  The initial service is selected from a domain with high isolation and low business risk, allowing the migration approach to be validated without disrupting core system operations.

- Step 3: Build the Independent Service and API Layer

  The selected feature is detached from the monolith and rebuilt as a separate service with its own API. Traffic is then gradually switched to the new service, preserving backward compatibility and keeping the user experience (UX) unchanged during the transition.

- Step 4: Gradual Traffic Shift and Validation

  Traffic is moved to the new service in stages, with monitoring, logging, and error handling in place to track performance and stability at each stage.

- Step 5: Phase Out Monolith Components Safely

  Once the service runs reliably in production, the related functionality is removed from the monolith. The process continues iteratively across other domains, steadily shrinking the monolith.

This structured roadmap ensures that modernization progresses incrementally, reducing operational risk while enabling the platform to evolve in a predictable, controlled manner.
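The staged traffic shift in Step 4 can be sketched as follows. This is one possible approach, not a prescribed implementation: it assumes each request carries a user ID, assigns users to stable buckets by hashing, and advances or rolls back the rollout based on an observed error rate. The stage sizes and the 1% error threshold are hypothetical.

```python
import hashlib


def bucket(user_id: str) -> int:
    """Map a user ID to a stable bucket in the range 0-99."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def use_new_service(user_id: str, rollout_percent: int) -> bool:
    """Return True if this user falls inside the current rollout percentage."""
    return bucket(user_id) < rollout_percent


def next_rollout(current_percent: int, error_rate: float,
                 max_error_rate: float = 0.01) -> int:
    """Advance the rollout one stage if healthy, otherwise roll back to 0."""
    stages = [0, 5, 25, 50, 100]  # hypothetical stage sizes
    if error_rate > max_error_rate:
        return 0
    later = [s for s in stages if s > current_percent]
    return later[0] if later else 100


percent = next_rollout(current_percent=5, error_rate=0.002)
print(percent, use_new_service("user-123", percent))
```

Because the bucket is derived from the user ID, a user who has been moved to the new service stays there as the percentage grows, which keeps the experience consistent during the transition.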

Common Migration Challenges and How to Avoid Them

During application modernization, one of the most frequent problems is over-decomposition: splitting the system into too many services, which fragments it and increases management complexity. Furthermore, latency and integration overhead can escalate if services rely on frequent synchronous calls or poorly designed interfaces.

Beyond these technical hurdles, concerns often arise regarding skill gaps and operational complexity, as microservices demand significantly higher DevOps maturity, automation, and experience with distributed systems. Finally, there is the persistent risk of governance and architectural drift, which can occur when different teams implement services variably or inadvertently duplicate functionality across domains.
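The cost of long synchronous call chains can be estimated with simple arithmetic. The sketch below assumes that services fail independently and that calls run strictly one after another; both are simplifications, and the figures used are illustrative rather than measured.

```python
def chain_availability(per_service: float, hops: int) -> float:
    """Availability of a request that needs every service in the chain to succeed."""
    return per_service ** hops


def chain_latency_ms(per_hop_ms: float, hops: int) -> float:
    """Total latency when each hop waits for the next one to finish."""
    return per_hop_ms * hops


# Illustrative inputs: five services at 99.9% availability, 20 ms per hop.
print(f"{chain_availability(0.999, 5):.4f}")  # roughly 0.995
print(chain_latency_ms(20, 5))
```

Under these assumptions, a request that passes through five services is noticeably less available and slower than a single in-process call, which is one reason to keep service boundaries coarse and to prefer asynchronous messaging where it fits.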

Why Modern Enterprises Outgrow Monolithic Architectures

Updating an old monolithic application is not just a technical job. It is also a strategic step toward a more scalable, resilient, and flexible architecture. A gradual migration to a microservices architecture can reduce delivery risk, improve deployment capability, and align IT investments with the business’s changing needs.

Telliant Systems assists businesses throughout the migration process, from architecture assessment to breaking down services into phases and providing implementation support. With the proper roadmap and governance discipline, organizations can modernize confidently while maintaining platform stability and performance.

Many companies today work with external software development partners to speed up product delivery and strengthen their engineering capacity. But in practice, not every partnership works smoothly. Teams often face budget overruns, slow progress, and misaligned expectations because the partner they chose was not the right fit for their product or way of working.

Are you evaluating potential vendors or preparing to work with a product development partner for your next project? Use this article as a decision guide to assess technical skills, delivery readiness, governance practices, and collaboration ability so you can choose a partner who improves product quality and supports long-term business growth.

Why the Right Partner Matters

Choosing a software product development company is more than a business choice. It influences the quality of what you build, how smoothly the work moves forward, and how confidently your team delivers. The right partner is more than a team that writes code or completes tasks. They help you define the product vision, make better technical decisions, eliminate delivery obstacles, and boost your team’s confidence in taking an idea from concept to launch with greater ease and consistency.

The wrong partner can slow progress and increase risk, stretching budgets beyond the original plan. Timelines slip, communication becomes unclear, and teams spend more time fixing problems than developing valuable features. Rework increases, technical issues accumulate over time, and managing future product growth becomes more difficult.

This is why a transparent, organized evaluation process is crucial. When you choose a partner based on alignment, skill, and readiness to deliver, you build a stronger foundation for your product’s growth.

Step 1: Define Your Project and Business Requirements

The selection process should begin with precise internal alignment on objectives and expectations.

Organizations should document:

- Business goals and success metrics

- Target users and value propositions

- Core features and functional scope

- Budget, schedule, and risk constraints

- Compliance or regulatory considerations

When the scope is clear, it becomes easier to evaluate vendors, and both sides share the same understanding of what needs to be done during discussions, proposals, and planning.

Step 2: Evaluate Technical Competence and Relevant Experience

A good development partner offers more than just technical skills. They know how products are built and tested in actual project environments. When looking for vendors, consider how well they fit with your technology stack, whether they have experience with similar products, and how they have handled performance, scalability, and security in real-world use cases.

It is also helpful to look at the kind of complexity and scale they have handled in past projects, since it gives a clearer idea of how well they can manage complex or demanding delivery situations.

Industry experience matters as well, especially in regulated or data-sensitive fields. Teams that already understand these constraints are better prepared to design systems that are reliable, compliant, and scalable over time.

Step 3: Look at What They Have Delivered Before

When choosing a development partner, it is not enough to look at their tech stack or skill set. What really makes a difference is seeing how they have performed in real projects, how their work holds up in production, and whether they have experience solving problems similar to yours.

A capable partner understands your technology stack, has experience working on similar solutions, and knows how to build for performance, scalability, and security.

Key things to check:

- Relevant tech stack fit

- Similar product experience

- Performance and scalability approach

- Security implementation practice

- Past project complexity

A partner with real-world delivery experience and substantial technical depth is more likely to handle challenges smoothly, reduce execution risks, and add real value to the product rather than just completing assigned development tasks.

Step 4: Define the Right Engagement Model for Your Product

Understanding the engagement model and collaboration structure helps you see how the partnership will actually function in day-to-day work. It clarifies ownership, decision-making responsibilities, communication flow, and how both teams coordinate across planning, development, and delivery activities. This ensures expectations are aligned before the project begins.

The most common approaches include:

- End-to-end project delivery

- Dedicated product teams operating in long-term engagement

- Skill-based augmentation to extend internal capabilities

The appropriate model depends on internal engineering maturity, roadmap scope, and expectations regarding control, autonomy, and delivery accountability.

Step 5: Evaluate Communication and Working Culture

When evaluating a partner, assess their responsiveness, communication clarity, and consistency in sharing progress updates and project status. Their approach to planning work, conducting reviews, and coordinating with different teams is also a good sign of future collaboration success. Cultural alignment is essential as well.

When both teams have similar working styles and collaboration practices, it is easier to prevent friction and coordination issues during delivery. Clear and regular communication reduces the risk of rework, improves team collaboration, and enables quicker, more confident decision-making among stakeholders.

Step 6: Evaluate Testing Quality, Security, and Controls

A reliable development partner follows strict engineering, testing, and security practices throughout the product lifecycle. By reviewing their QA, security, and governance strategies, you can see how they ensure product reliability, operational stability, and risk management throughout development and delivery.

Key areas to review include:

- How is testing planned and automated?

- How securely is the product developed, and how is data protected?

- How are releases and deployments managed?

- How are intellectual property and confidential information safeguarded?

Strong practices in these areas ensure consistent delivery across releases and reduce operational risks and security issues.

Step 7: Review Pricing Model and Contract Terms

Before signing an agreement with a development partner, evaluate the pricing structure and legal terms that define the engagement. A clear definition of scope, costs, and accountability helps create a predictable working relationship.

Gaining this clarity ahead of time improves cost accountability and lowers delivery risk during execution. When pricing is unclear or deliverables are not well-defined, it can lead to conflicts later, cost overruns, and confusion about ownership and responsibility.

Step 8: Validate Post-Launch Support and Product Evolution Capability

A product does not end at launch. It requires maintenance, performance monitoring, and ongoing enhancements as user needs and business priorities evolve. While evaluating a partner, assess their ability to support updates, handle issues, collaborate on roadmap improvements, and maintain continuity across future releases and product iterations.

Red Flags That May Increase Delivery Risk

- Experience

  A partner with limited or hard-to-verify project history may lack sufficient real-world delivery experience to handle complex product requirements.

- Communication

  Slow responses, unclear updates, or irregular coordination can create gaps in understanding and eventually slow down execution.

- Pricing

  Unclear pricing models and loosely defined scope often result in unexpected costs, disagreements, and budget stretch during the project.

- Quality

  Weak or informal QA and testing practices increase the chances of defects, rework, and unstable builds reaching production.

- Security

  Poor security awareness or unclear data handling practices can lead to compliance issues and increase operational risk.

- Accountability

  Hesitation to provide references, ownership clarity, or delivery commitments may signal reliability and trust issues.

Moving Forward with the Right Development Partner

Selecting the right software product development partner is a strategic decision that affects delivery reliability, engineering quality, and long-term product growth. The decision involves much more than comparing price quotes or checking which tools a team works with. What matters is whether a partner has a proven delivery track record, a compatible way of working, strong communication habits, and a disciplined approach to quality, security, and governance.

Evaluating partners with this level of careful assessment helps reduce delivery risks and leads to more consistent outcomes. Teams that value capability, transparency, and collaboration are better prepared to build scalable, evolving products.

This is where a partner like Telliant Systems can add meaningful value through disciplined engineering practices, strong delivery governance, and a long-term product partnership mindset. With the right partner in place, software development becomes more than a service relationship. It becomes an enabler of sustainable growth and innovation.

Choosing the right mobile app development framework is essential because it affects your app’s performance, development speed, and future maintenance. Statista reports that 46 percent of software developers worldwide used Flutter in 2023, making it the most popular cross-platform framework. In comparison, React Native was used by 35 percent of developers globally, showing its ongoing significance in mobile development.

Native development remains the preferred choice for performance-critical, deeply integrated applications because it provides direct access to platform capabilities.

Cross-platform frameworks like Flutter and React Native offer clear benefits in time-to-market and code reuse. This blog will help you decide when to choose Native, Flutter, or React Native based on your project needs, performance goals, and long-term plans.

Understanding the Three Mobile Development Approaches

- Native Development

  Native development involves building separate apps for Android and iOS using platform-specific languages such as Kotlin or Java for Android and Swift for iOS. It offers the best performance, full access to device hardware and APIs, and highly optimized platform-specific user experiences. However, it requires two codebases, larger teams, and higher development and maintenance effort.

- Flutter

  Flutter is a cross-platform framework developed by Google that uses a single Dart-based codebase to build apps for both Android and iOS. It compiles to native machine code and uses a widget-driven UI model, enabling fast performance and consistent design across platforms. Flutter accelerates development but requires teams to work within its framework-driven UI ecosystem.

- React Native

  React Native is a JavaScript framework that lets teams build mobile apps using React concepts while rendering native UI components. It is an excellent fit for organizations with existing web or JavaScript development teams, since shared skills and code allow faster prototyping and quicker feature rollout. Performance depends on how frequently the app interacts with native modules and device features.

| Criteria | Native | Flutter | React Native |
|---|---|---|---|

Key Evaluation Criteria for Selecting the Right Framework

- Application Performance and Responsiveness

  Performance is an essential factor when looking at mobile frameworks. Native apps usually offer the best performance because they work directly with platform APIs and hardware. This makes them ideal for applications that need complex animations, real-time data processing, streaming, graphics rendering, or features that require significant hardware support.

  Flutter offers performance that is close to Native in most scenarios because it compiles to native ARM code and does not rely on web views. React Native works well for regular UI interactions.

  Performance may slow down when an app frequently switches between JavaScript and native modules, so teams should assess performance needs based on the workload complexity and how users are expected to interact with the app.

- Speed and Release Velocity

  The more code that can be reused across platforms, the higher the overall delivery efficiency. Faster development cycles are made possible by Flutter and React Native’s shared codebases, hot reload, and reusable user interface elements. They are therefore helpful for MVPs, iterative feature releases, and apps that must be released concurrently on iOS and Android.

  Native development generally involves longer timelines because both platforms require independent engineering effort. It works well in scenarios that need greater control over system behavior, platform optimization, and long-term stability. Teams should decide whether better platform control or a quicker time-to-market adds more strategic value to the product.

- Design Consistency and Interaction Experience

  User experience expectations also shape the choice of framework. Native apps offer the most genuine platform behavior, uniform gestures, and compliance with operating system design standards.

  Flutter enables a visually consistent UI across platforms while offering great customization flexibility, which is valuable for branded digital experiences. React Native delivers a near-native feel but may rely on third-party libraries for advanced UI components.

  The decision should take into account the app’s design goals, the kind of brand experience you want to deliver, and how closely the UX needs to align with each platform’s native behavior.

- Device Interfaces and System Interoperability

  Some applications depend heavily on device hardware or system-level integrations. Native frameworks provide the most direct access to sensors, Bluetooth, GPS, biometric authentication, camera processing, and offline storage.

  Although Flutter and React Native support native API integration, certain advanced features may require creating new platform code or using third-party plugins.

  Interacting with device functionality in specialized ways increases the app’s complexity. Mobile app development teams should carefully examine integration depth, dependency risk, and future extensibility before deciding on a final framework.

- Maintainability, Scalability, and Long-Term Sustainability

  Initial development speed is essential, but so is long-term effort. The lifecycle costs of native projects may rise due to the need for distinct maintenance cycles and platform-specific expertise. Although teams must stay up to date with framework releases, Flutter uses a single codebase to reduce maintenance costs.

  React Native benefits from a large developer ecosystem, though dependency management and version compatibility must be monitored closely. Technical debt can be decreased by selecting a framework that supports long-term product growth.

When to Choose Each Framework

- Use Native for

  Best for applications that need high performance, strong security, deep hardware access, and platform-specific optimization with long-term scalability.

- Use Flutter for

  Ideal for fast cross-platform development, visually rich and consistent UI, frequent feature updates, and accelerated release cycles using a single codebase.

- Use React Native for

  Best suited for rapid minimum viable products (MVPs), faster iteration cycles, teams that already work with JavaScript, and products that need a quicker launch instead of deep platform-specific customization.

Long-Term Cost and Maintenance Implications

There is much more to choosing a mobile app development framework than how fast the app can be built or how much the first release costs. The real impact becomes clear in the long run through maintenance needs, scalability challenges, team workload, and the smoothness of the product’s growth. The goal is to choose an approach that stays manageable as the application expands.

What Really Drives Long-Term Cost

- Most costs arise after launching because of upgrades, improvements, security fixes, and compatibility updates.

- Native apps need separate maintenance for Android and iOS. This increases long-term work but provides better control over the platform.

- Flutter and React Native reduce maintenance by using a shared codebase, but you might need to handle framework updates and plugin compatibility.

- The best option depends on whether the focus is on tighter platform control or less ongoing maintenance work.

Decision Framework – How to Evaluate Your Use Case

Before finalizing a mobile framework, teams should assess the application’s performance requirements. They need to decide whether to prioritize a quicker launch or a more stable release. Additionally, they should determine how much control over the platform the product needs.

It is also essential to consider whether the UI should feel like it fits the platform or remain consistent across devices. Assess whether the current team has the necessary skills or if new hires are needed. Also, think about how often new features and updates will be released after launch and how much long-term maintenance the team can realistically handle.
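One way to make these trade-offs explicit is a weighted decision matrix. The sketch below is only an illustration: the criteria names, weights, and scores are placeholders that a team would replace with its own assessment, not measurements of the frameworks.

```python
def rank_options(weights: dict[str, float],
                 scores: dict[str, dict[str, float]]) -> list[tuple[str, float]]:
    """Rank options by weighted average score, highest first."""
    total_weight = sum(weights.values())
    ranked = []
    for option, option_scores in scores.items():
        weighted = sum(weights[c] * option_scores[c] for c in weights) / total_weight
        ranked.append((option, round(weighted, 2)))
    return sorted(ranked, key=lambda item: item[1], reverse=True)


# Placeholder weights (importance) and scores (1-5); replace with your own.
weights = {"performance": 3, "time_to_market": 2, "team_skills": 2}
scores = {
    "Native": {"performance": 5, "time_to_market": 2, "team_skills": 2},
    "Flutter": {"performance": 4, "time_to_market": 4, "team_skills": 3},
    "React Native": {"performance": 3, "time_to_market": 4, "team_skills": 5},
}

for option, score in rank_options(weights, scores):
    print(option, score)
```

The ranking changes as soon as the weights change, which is the point: the matrix records why a framework was chosen for a given product rather than declaring a universal winner.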

Making the Right Framework Decision for Your App

There isn’t just one “best” framework for mobile development. Performance requirements, release priorities, integration depth, and long-term scalability all influence the best option.

Applications that need excellent performance, robust security, and hardware control are best suited for native development. Flutter suits products that need regular updates from a single codebase, visually polished interfaces, and fast cross-platform delivery. React Native can be a strong choice for JavaScript-savvy teams that want to build MVPs and ship products quickly.

Instead of choosing a framework based on popularity or developer preferences, teams need to figure out their use case, plans, maintenance, and resources. A well-aligned technology choice not only improves delivery efficiency but also ensures that the application remains scalable, maintainable, and future-ready as it grows.

Many enterprises continue to rely on legacy applications developed years or even decades ago to support essential business operations such as finance, supply chain management, customer service, and internal workflows. These systems were originally built using monolithic architectures and older technology stacks that were suitable at the time but are now difficult to maintain, scale, and integrate with modern digital platforms.

As organizations adopt technologies such as cloud computing, artificial intelligence, automation, and advanced analytics, legacy systems often become barriers to innovation. The limited flexibility and integration capabilities of the systems hinder the development process and the introduction of new digital services.

Additionally, the technical debt accumulated over the years, including patches and modifications, makes the system expensive and risky to operate. For these reasons, legacy application modernization services have become a strategic priority for enterprises seeking scalable systems, improved agility, and stronger digital capabilities.

What Legacy Application Modernization Services Include

Legacy application modernization services span a range of engineering and consulting activities aimed at updating legacy applications. They cover application architecture, infrastructure, data management, security, and integration.

A typical legacy application modernization company provides the following capabilities.

- Legacy System Assessment and Technical Debt Analysis

  Modernization begins with evaluating legacy applications, including architecture, code quality, dependencies, and integrations. This assessment identifies technical debt, security risks, and performance issues, helping organizations prioritize systems that require immediate modernization.

- Application Replatforming

  Application replatforming is the migration of legacy applications from their current environment to another, such as the cloud or containers. This migration delivers scalability and operational benefits with minimal code changes.

- Application Refactoring

  Application refactoring is the restructuring of legacy code to enhance maintainability, modularity, and performance. The code is optimized, APIs are introduced, and automated testing and integration are implemented without changing the application’s functional logic.

- Application Reengineering

  Application reengineering is the redesign of existing applications using modern technologies such as microservices and service-oriented architecture. It involves redeveloping code to build scalable applications.

- Data Modernization

  Data modernization updates existing databases through data migration, improved database structure, and enhanced data quality. It enables integration with new data platforms, analytics systems, and cloud computing systems, and supports advanced reporting and analysis.

- API Enablement and Integration

  API enablement is the implementation of standardized interfaces that allow existing applications to communicate with modern applications. The use of APIs allows for integration with cloud platforms, mobile applications, and other systems.
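API enablement often starts with a thin facade in front of an existing routine. The sketch below assumes a legacy function that returns a fixed-width text record; the function names, record layout, and status codes are hypothetical and only illustrate the idea of exposing legacy data in a standardized JSON format.

```python
import json


def legacy_lookup_customer(customer_id: str) -> str:
    """Stand-in for a legacy routine that returns a fixed-width record."""
    # Hypothetical layout: id (6 chars), name (20 chars), status (1 char).
    return f"{customer_id:<6}{'Jane Example':<20}A"


def get_customer(customer_id: str) -> str:
    """Facade: call the legacy routine and return the result as a JSON document."""
    record = legacy_lookup_customer(customer_id)
    status_codes = {"A": "active", "I": "inactive"}  # hypothetical codes
    payload = {
        "id": record[0:6].strip(),
        "name": record[6:26].strip(),
        "status": status_codes.get(record[26:27], "unknown"),
    }
    return json.dumps(payload)


print(get_customer("C001"))
```

The legacy routine stays untouched; only the facade knows about the record layout, so cloud or mobile clients can depend on the JSON contract while the underlying system is modernized at its own pace.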

Common Legacy Modernization Approaches

Enterprises typically adopt structured modernization strategies depending on the condition of their legacy systems, the importance of the application, and long-term digital transformation objectives. Different approaches require varying levels of development effort and present different levels of operational risk.

The following table outlines the most commonly used modernization strategies.

Comparison of Legacy Application Modernization Approaches

| Modernization Approach | Description | Level of Code Change | Business Impact |
|---|---|---|---|

Selecting the appropriate modernization strategy depends on factors such as application complexity, business criticality, modernization budget, and long-term technology roadmaps.

Key Factors Enterprises Should Consider When Choosing a Partner

Choosing a reliable legacy application modernization partner requires careful evaluation of technical capabilities, modernization methodologies, and industry experience.

- Expertise in Legacy Technologies

  Experience with legacy languages and platforms, including COBOL, mainframes, early Java, and legacy .NET systems.

- Cloud and Architecture Expertise

  Ability to design cloud architectures, implement microservices, and use containers to modernize monolithic applications.

- Structured Modernization Methodologies

  Use of proven frameworks that guide modernization from assessment to deployment with standardized engineering processes.

- Data Migration and Integration Capabilities

  Expertise in securely migrating legacy data, validating accuracy, and integrating modernized systems with enterprise platforms.

- Security and Compliance Expertise

  Ability to implement secure development practices and ensure modernization meets industry security and regulatory compliance standards.

Questions Enterprises Should Ask Modernization Vendors

When evaluating a software modernization partner, enterprises should ask targeted questions to understand the vendor’s technical expertise and modernization approach. Key questions include:

- What methodology do you use to assess legacy systems and define modernization priorities?

- What experience do you have with similar legacy technologies, architectures, or industry environments?

- How do you minimize operational disruption during the modernization process?

- What approach do you follow for data migration, validation, and system integration?

- How do you measure the success and business outcomes of modernization initiatives?

These questions help enterprises identify vendors that have the technical expertise and structured processes required to manage complex legacy modernization programs.

Top Companies Providing Legacy Application Modernization Services

Several technology firms provide legacy application modernization services to help enterprises transform outdated systems, reduce technical debt, and adopt modern cloud and microservices architectures. These companies combine consulting expertise with engineering capabilities to support complex modernization initiatives.

Telliant Systems

- Services: Telliant Systems provides legacy application modernization, application refactoring, platform migration, API enablement, and system integration services to help enterprises modernize critical legacy systems and improve scalability.

- Strengths: Telliant has strong engineering expertise in transforming legacy applications while maintaining business continuity and improving performance, scalability, and integration with modern enterprise platforms.

- Highlights: Telliant focuses on structured modernization strategies that enable organizations to transition legacy systems to modern architectures with minimal disruption and improved operational efficiency.

Accenture

Accenture provides enterprise application modernization services that help organizations migrate legacy systems to cloud platforms and adopt modern architectures. Its services include mainframe modernization, application reengineering, and integration of legacy systems with modern digital platforms.

IBM Consulting

IBM Consulting specializes in modernizing legacy and mainframe systems using hybrid cloud architectures. The company uses advanced tools for code analysis, workload migration, and application transformation to help enterprises improve system flexibility and performance.

Cognizant

Cognizant helps clients modernize their legacy systems through refactoring, cloud migration, and system integration. The company focuses on transforming legacy systems into scalable digital platforms for modern enterprise operating models.

Capgemini

Capgemini helps enterprises modernize legacy systems by implementing cloud migration, API integration, and DevOps practices. Its modernization services focus on improving application performance, scalability, and integration with modern platforms.

Infosys

Infosys provides application modernization services that help organizations transform legacy systems into cloud-enabled platforms. The company uses modernization frameworks and cloud technologies to improve system efficiency and support digital transformation initiatives.

Tata Consultancy Services (TCS)

TCS provides large-scale legacy modernization services, including application refactoring, platform transformation, and cloud migration. The company helps enterprises improve operational efficiency as they transition legacy systems to modern architectures.

| Company | Key Modernization Focus | Notable Capabilities |
|---|---|---|

How Modernization Impacts Business Outcomes

Legacy system modernization delivers quantifiable benefits, including increased operational efficiency, greater technology flexibility, and improved business performance. A modernized system enables faster software development because the engineering team can implement continuous integration and deployment.

Modernization also makes systems more reliable and scalable: cloud-native technology lets them scale automatically with demand, and integration capabilities improve. Modernized applications expose standardized APIs that allow organizations to connect internal systems, partner platforms, and customer-facing applications more effectively.

Reducing technical debt also improves developer productivity. Engineering teams spend less time maintaining outdated codebases and more time developing innovative products and services.

Why Enterprises Choose Specialized Modernization Partners

Many enterprises initially attempt to modernize legacy systems internally, but the complexity of legacy architectures, dependencies, and data structures often makes these initiatives difficult to manage without specialized expertise.

Working with a dedicated legacy app modernization company gives organizations access to experience from diverse transformation projects across industries, helping them spot issues early and apply proven transformation strategies. Through comprehensive legacy app modernization services, these partners also use automation tools and migration accelerators that reduce development effort and shorten project timelines.

These partners also supply engineers experienced in both legacy and current technologies, which is essential for precise system transformation. This helps organizations carry out modernization efficiently and keep it aligned with long-term digital transformation strategies.

Conclusion: Choosing the Right Partner for Legacy Modernization

Legacy system modernization has emerged as a key business strategy for organizations to ensure competitiveness in highly dynamic technological environments. Modernizing legacy applications helps organizations reduce technical debt, improve scalability, and enable integration with modern digital platforms. However, modernization initiatives involve complex system dependencies, large volumes of data, and mission-critical business processes that require careful planning and technical expertise.

Organizations must assess their vendors’ experience in legacy systems, cloud technology, data migration, and modernization methodologies. With the right partner, organizations can transform legacy systems into scalable digital platforms that support innovation, operational efficiency, and long-term business growth.

Healthcare organizations manage large amounts of clinical data, yet they face inconsistent diagnoses across departments, unstructured problem lists, and reporting systems that use codes not designed for clinical use. Closing this gap requires an effective SNOMED CT implementation that modernizes healthcare terminology.

With the structured integration of SNOMED CT, organizations can capture exact, computable clinical meaning, enabling better EHR interoperability, enhanced healthcare data standardization, and scalable analytics. As a result, the implementation of SNOMED CT provides a strategic foundation for modern, interoperable digital healthcare systems.

1. The Terminology Crisis in Modern Healthcare

Healthcare organizations operate in a complex environment of clinical terminology standards. Laboratory databases, analytics tools, billing systems, and electronic health records (EHRs) commonly use separate vocabularies created for different reasons. Although each system may work well on its own, their vocabularies rarely align conceptually.

Several systemic issues contribute to this crisis:

- Local or proprietary code sets embedded within EHR platforms

- Manual cross-mapping between terminology systems

- Inconsistent adoption of standardized vocabulary across departments

A lack of semantic consistency in healthcare data results in decreased interoperability of EHRs, inaccuracies in reporting, increased costs of normalization, and reduced automation.

2. What Is SNOMED CT?

SNOMED CT is one of the most comprehensive clinical terminology systems in global healthcare. It is maintained by SNOMED International and is designed to represent clinical meaning with precision. Unlike statistical classifications, which group diseases for reporting purposes, SNOMED CT is an ontology-based terminology that allows the structured representation of clinical concepts.

The architecture is composed of three main pillars:

- Concepts that stand for distinct clinical meanings

- Descriptions that give each concept its human-readable terms

- Relationships that establish logical links between related clinical concepts

This poly-hierarchical structure allows a single concept to exist under multiple parent categories. For example, a chronic condition may be categorized under both metabolic disorders and long-term diseases, enabling semantic reasoning and machine-readable logic across clinical systems.
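The poly-hierarchy described above can be sketched as a small graph in which a concept holds more than one parent. This is a minimal illustration, not SNOMED CT's actual release format: the `Concept` class, the `ancestors` and `is_a` helpers, and all identifiers are hypothetical placeholders rather than real SNOMED CT codes.

```python
from dataclasses import dataclass, field


@dataclass
class Concept:
    """One clinical meaning with its human-readable terms and parent links."""
    concept_id: str
    descriptions: list[str]
    parents: set[str] = field(default_factory=set)  # "is a" relationships


def ancestors(concept_id: str, concepts: dict[str, Concept]) -> set[str]:
    """Collect every concept reachable by following "is a" links upward."""
    found: set[str] = set()
    stack = list(concepts[concept_id].parents)
    while stack:
        current = stack.pop()
        if current not in found:
            found.add(current)
            stack.extend(concepts[current].parents)
    return found


def is_a(child_id: str, parent_id: str, concepts: dict[str, Concept]) -> bool:
    """True when parent_id is the concept itself or one of its ancestors."""
    return child_id == parent_id or parent_id in ancestors(child_id, concepts)


# Placeholder identifiers for illustration only; these are not SNOMED CT codes.
concepts = {
    "disease": Concept("disease", ["Disease"]),
    "metabolic": Concept("metabolic", ["Metabolic disorder"], {"disease"}),
    "long_term": Concept("long_term", ["Long-term disease"], {"disease"}),
    "chronic_x": Concept(
        "chronic_x", ["Chronic condition X"], {"metabolic", "long_term"}
    ),
}

# The same concept is found under both parent categories.
print(is_a("chronic_x", "metabolic", concepts))
print(is_a("chronic_x", "long_term", concepts))
```

Because the subsumption check walks every parent path, a cohort query for "metabolic disorders" and one for "long-term diseases" would both include the same patient record without any duplicate coding.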

Cohort identification, decision-support engines, organized clinical documentation, advanced analytics, and uniform terminology alignment across EHR systems are all enabled by SNOMED CT. The integration of SNOMED CT is intended for clinical, not billing purposes, and has enhanced healthcare data standardization.

3. SNOMED CT vs ICD-10: Understanding the Difference

Healthcare administrators may consider comparing ICD-10 vs SNOMED CT when analyzing modernization options. Even though both are structured coding systems, they have different applications in the healthcare setting.

ICD-10 is a statistical classification system intended for morbidity surveillance, claims processing, and public health reporting; it groups diseases into structured categories for billing and epidemiologic analysis. SNOMED CT, by contrast, is intended to represent clinical meaning in patient care.

The structural differences are significant:

| Dimension | SNOMED CT | ICD-10 |
|---|---|---|

Modern healthcare software typically captures precise clinical data in SNOMED CT and then translates it into ICD-10 codes for billing, so understanding the differences between the two systems is essential to integrating SNOMED CT effectively.
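The capture-then-translate flow can be sketched as a lookup from clinical codes to billing codes. This is a simplified illustration under stated assumptions: the `SNOMED_TO_ICD10` table and the `translate` helper are hypothetical, the two code pairs are examples that should be verified against an official, clinically validated map, and real maps can be one-to-many with rule-based selection.

```python
# Illustrative crosswalk only. A production map comes from a governed,
# clinically validated source and is not a simple one-to-one dictionary.
SNOMED_TO_ICD10 = {
    "44054006": "E11.9",  # Diabetes mellitus type 2 -> without complications
    "38341003": "I10",    # Hypertensive disorder -> essential hypertension
}


def translate(problem_list: list[str]) -> tuple[dict[str, str], list[str]]:
    """Split SNOMED CT codes into mapped billing codes and unmapped codes.

    Unmapped codes are returned separately so they can be routed to a
    human reviewer instead of being silently dropped.
    """
    mapped: dict[str, str] = {}
    unmapped: list[str] = []
    for code in problem_list:
        if code in SNOMED_TO_ICD10:
            mapped[code] = SNOMED_TO_ICD10[code]
        else:
            unmapped.append(code)
    return mapped, unmapped


# "00000000" is a made-up code used to show the unmapped path.
mapped, unmapped = translate(["44054006", "00000000"])
print(mapped)
print(unmapped)
```

Keeping the unmapped list explicit reflects the governance point: gaps in the crosswalk should surface for clinical review rather than default to a guess.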

4. Why SNOMED CT Matters for EHR Modernization

Integration with SNOMED CT is a key enabler of EHR interoperability, ensuring consistent identifiers for clinical concepts across systems. Adopting FHIR APIs together with SNOMED CT supports structured terminology binding, tying APIs and clinical workflows to the same coded concepts for data exchange. True EHR interoperability requires shared meaning, not just data transport.
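As a rough sketch of what shared meaning looks like on the wire, the snippet below builds a simplified FHIR `Condition` resource as a plain dictionary, with the diagnosis coded in SNOMED CT. The `make_condition` and `same_meaning` helpers and the patient identifiers are hypothetical, and the resource omits fields a real server profile may require.

```python
import json

# Code system URI that FHIR uses to identify SNOMED CT.
SNOMED_SYSTEM = "http://snomed.info/sct"


def make_condition(patient_id: str, code: str, display: str) -> dict:
    """Build a minimal, simplified FHIR Condition coded in SNOMED CT."""
    return {
        "resourceType": "Condition",
        "subject": {"reference": f"Patient/{patient_id}"},
        "code": {
            "coding": [
                {"system": SNOMED_SYSTEM, "code": code, "display": display}
            ],
            "text": display,
        },
    }


def same_meaning(a: dict, b: dict) -> bool:
    """Two conditions share meaning if any (system, code) pair matches."""
    def pairs(resource: dict) -> set[tuple[str, str]]:
        return {(c["system"], c["code"]) for c in resource["code"]["coding"]}
    return bool(pairs(a) & pairs(b))


# Two systems describe the diagnosis with different display text,
# but the coded concept is identical.
from_ehr = make_condition("example-1", "44054006", "Diabetes mellitus type 2")
from_lab = make_condition("example-2", "44054006", "Type 2 diabetes")

print(json.dumps(from_ehr, indent=2))
print(same_meaning(from_ehr, from_lab))
```

The comparison ignores the free-text display and relies only on the coded pair, which is the practical difference between transporting data and sharing meaning.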

From an analytics standpoint, SNOMED CT implementation enhances predictive modeling, population stratification, quality reporting, and the development of artificial intelligence models. The inclusion of structured SNOMED CT in EHRs changes the EHR from a passive documentation tool to a strategic infrastructure that is analytics-driven and supports scalable EHR interoperability.

5. Benefits of Terminology Modernization

Healthcare terminology modernization offers measurable enterprise value. The adoption of SNOMED CT improves the semantic basis necessary for a successful digital transformation.

| Category | Benefit |
|---|---|

SNOMED CT integration ensures that clinical terminology standards support innovation. When documentation is structured and computable, healthcare organizations benefit from automation, clarity, and efficiency.

6. Common Implementation Challenges

- The limitations of legacy systems often necessitate architectural redesign to support SNOMED CT terminology services. Many older EHR platforms lack native support for structured terminology servers, scalable APIs, and standards-based interoperability frameworks.

- Mapping complexity is another common challenge when implementing SNOMED CT. Aligning SNOMED CT with ICD-10 requires strict governance, validation, and domain expertise; crosswalk tools accelerate mapping, but their output still requires clinical validation to maintain semantic integrity.

- Clinician workflow adoption is an important factor in the long-term success of SNOMED CT integration. A poorly designed implementation can slow down documentation and even lead to provider resistance.

- Governance and version control require ongoing organizational focus and well-understood ownership models. There are version alignment issues, extension modules, mapping changes, and structured change management processes to coordinate.

7. Best Practices for SNOMED CT Integration

- For the successful implementation of SNOMED CT, a roadmap is needed that covers governance, architecture, usability, EHR interoperability, healthcare data standardization, scalability, and sustainability.

- Terminology governance should be led by a cross-functional team that includes clinical informaticists, health information management professionals, data architects, compliance leaders, and executive sponsors.

- Establish an enterprise-wide terminology service to support the integration of FHIR and SNOMED CT within a scalable and standards-compliant infrastructure.

- Broaden the use of the terminology service beyond problem lists and clinical findings to encompass the entire organization, in phases.

- Closely track mappings between SNOMED CT and ICD-10 to ensure billing alignment, reporting accuracy, and regulatory compliance.

Conclusion

For healthcare organizations to fully realize digital transformation, the challenge of terminology gaps must be resolved. Inconsistent terminology and documentation have been a barrier to the interoperability, analytics, and standardization of healthcare data in EHR systems. The implementation of SNOMED CT provides a systematic approach to addressing terminology gaps in the healthcare industry by translating clinical documentation into accurate, computable data.

SNOMED CT provides a common clinical vocabulary across the healthcare industry to ensure alignment of clinical semantics and improve governance. SNOMED CT plays a critical role in supporting digital transformation as healthcare advances toward value-based care and the adoption of artificial intelligence.

A client of mine recently shared a telling story. Their development team had built a state-of-the-art patient management system. However, complicated form layouts, slow page loads, and awkward interface components caused the nursing staff to spend extra minutes on each patient's data entry. Those minutes were multiplied across hundreds of patients every day. The features were all there, but the user experience was creating silent productivity drains.

This is the reality of managing UX debt. You can build functionalities, but the cumulative effect of minor frustrations can create a tax on your users’ time and patience.

Now comes the question: should you keep adding features to your program, or should you tackle the increasing UX debt that is reducing its value? This isn’t just a matter of aesthetics; it’s a strategic choice that will affect how happy your users are and how well your business runs.

Let’s examine what UX debt really means and how to manage it effectively through technical UX improvement strategies.

What Exactly Is UX Debt?

UX debt is the accumulated cost of all user experience compromises, shortcuts, and delayed improvements in your software. Like technical debt, it accrues interest. On their own, individual compromises don't seem like much, but together they disrupt the user experience, causing frustration and reduced productivity. The impact can be measured: an often-cited industry estimate is that every dollar invested in user experience returns $100 in value.

You may be working with cutting-edge technologies like Vue.js frameworks and React components, but without clear standards, you’re introducing friction at every interaction. As a result, improving software’s user experience is no longer a one-and-done task, but rather a continual discipline.

UX debt manifests in several key areas

- Inconsistent interface patterns: Interface elements without consistent patterns create visual inconsistencies. Screens have varied button colors and padding, text is unorganized, and icons send conflicting messages. Users spend mental effort deciphering the interface rather than completing their tasks.

- Performance degradation: Performance degradation shows up when page loads slow down, animations stutter, or forms take longer to submit. According to Google research, as page load time increases from 1 second to 3 seconds, the probability of a bounce increases by 32%. This isn't just about user patience; it's about lost productivity.

- Accessibility gaps: Accessibility gaps are among the most important forms of UX debt. Software that doesn't follow WCAG standards shuts out people who rely on keyboard navigation, screen readers, or other assistive technology. Many organizations fail to prioritize accessibility, even though the Web Content Accessibility Guidelines offer explicit guidance.

How to Identify and Measure Your UX Debt

You can’t manage what you can’t measure. The first step in UX debt management is establishing clear metrics that reveal where friction exists.

- Start with performance tracking: Tools that measure front-end performance metrics provide objective user experience data. Track Largest Contentful Paint (LCP) and aim for primary content to load within 2.5 seconds. Monitor First Input Delay (FID) to keep interface response under 100 ms. Guard against unexpected layout movement during loading by monitoring Cumulative Layout Shift (CLS). These metrics translate directly to user frustration or satisfaction.

- Use session replay tools: Session replay tools show you exactly how users interact with your software. You'll see moments of hesitation, multiple clicks on non-interactive elements, and confusing form fields. This qualitative data reveals what the numbers can't. It shows the human experience behind the metrics.

- Review user feedback channels: User feedback channels provide crucial context. Support ticket analysis often reveals patterns: certain features generate disproportionate complaints, and users find some everyday tasks confusing. When the same issues recur, you've identified high-priority UX debt that requires technical UX improvement strategies.
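The performance tracking step can be sketched as a simple threshold check. This is a minimal example, not a monitoring tool: the `THRESHOLDS` table and `failing_metrics` function are hypothetical names, the LCP and FID limits come from the text above, and the CLS limit of 0.1 is an assumption taken from Google's published Core Web Vitals guidance.

```python
# LCP and FID limits come from the text; the CLS limit of 0.1 is an
# assumption based on Google's Core Web Vitals guidance.
THRESHOLDS = {"lcp_seconds": 2.5, "fid_ms": 100.0, "cls": 0.1}


def failing_metrics(measured: dict[str, float]) -> list[str]:
    """Return the names of measured metrics that exceed their limit.

    Metrics missing from the measurement are skipped, not treated as passing
    or failing.
    """
    return [
        name
        for name, limit in THRESHOLDS.items()
        if name in measured and measured[name] > limit
    ]


# Hypothetical field measurements for one page.
sample = {"lcp_seconds": 3.1, "fid_ms": 80.0, "cls": 0.25}
print(failing_metrics(sample))  # ['lcp_seconds', 'cls']
```

Running a check like this per page or per release gives a short, comparable list of where friction exists, which is easier to prioritize than raw timing data.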

Practical Strategies for Reducing and Preventing UX Debt

UX debt management requires both addressing existing problems and preventing new ones from emerging. Here are proven approaches that deliver results for user experience optimization for software:

- Use a design system as a single source of truth: More than a component library, a design system is a living set of guidelines for component behavior, color scheme, typography, and spacing. By using the system's predefined components, developers avoid starting from scratch and introducing inconsistencies. The design system is your defense against visual debt.

- Integrate accessibility compliance into your development process rather than treating it as an afterthought: Enable keyboard navigation and ARIA labels in your main components. Add automated UI/UX testing tools to your continuous integration pipeline. When accessibility is mandatory rather than optional, one major kind of UX debt is eliminated before it reaches users.

- Establish performance budgets and automatically enforce them: Set clear limits for bundle sizes, image sizes, and API response times to ensure optimal performance. Use tools like Lighthouse CI to block code changes that degrade front-end performance metrics. This shifts performance from a reactive concern to a proactive standard.

- Ensure UX debt is prioritized alongside feature work by creating a dedicated queue for it: To pay off UX debt, many teams set aside 15–20% of each development cycle. Regular investment keeps minor problems from growing into major ones.
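The performance budget idea from the list above can be sketched as a small gate script that a CI job might run. This is an illustration under stated assumptions: it is not Lighthouse CI's configuration format, and the `BUDGET` values, metric names, and helper functions are all hypothetical.

```python
# Hypothetical budget; real limits depend on your product and users.
BUDGET = {"bundle_kb": 250.0, "largest_image_kb": 200.0, "api_p95_ms": 300.0}


def over_budget(
    measured: dict[str, float], budget: dict[str, float]
) -> dict[str, tuple[float, float]]:
    """Map each violated metric to its (measured, allowed) pair."""
    return {
        name: (measured[name], limit)
        for name, limit in budget.items()
        if name in measured and measured[name] > limit
    }


def exit_code(measured: dict[str, float], budget: dict[str, float]) -> int:
    """Return 1 so a CI step fails when any budget is exceeded, else 0."""
    violations = over_budget(measured, budget)
    for name, (value, limit) in violations.items():
        print(f"{name}: {value} exceeds budget of {limit}")
    return 1 if violations else 0


# Hypothetical measurements from a build.
build = {"bundle_kb": 310.0, "largest_image_kb": 120.0, "api_p95_ms": 300.0}
status = exit_code(build, BUDGET)
print("blocked" if status else "ok")
```

In a pipeline, the returned value would be passed to `sys.exit` so that a regression fails the build instead of being discovered after release.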

When to Choose Different Management Approaches

Your approach to UX debt management should match your organization’s maturity and constraints.

Focus on foundational consistency if:

- You are early in software product development and still setting patterns.

- Design resources are scarce on your team.

- You need to maintain coherence as you grow rapidly.

Implementing a basic design framework and setting performance benchmarks is particularly valuable here.

Prioritize accessibility remediation if:

- You serve public-sector or educational institutions with compliance requirements.

- Your user base includes people with a wide range of abilities.

- Enterprise sales that necessitate accessibility compliance audits are on your horizon.

In many cases, investing in WCAG compliance also surfaces and resolves other UX problems.

Adopt comprehensive monitoring and automation if:

- You have an established product with significant existing debt.

- Your development team has platform maturity.

- You're scaling across multiple teams or products.

Automated UI/UX testing tools and continuous monitoring help maintain quality at scale.

Building a Sustainable UX Debt Management Practice

Technical solutions only work when supported by organizational commitment. The most effective UX debt management strategies combine tools with team culture.

Establish clear ownership of user experience quality. Whether this responsibility lies with product managers, engineering leads, or dedicated UX roles, someone needs authority to prioritize debt reduction alongside feature development.

Create visibility into UX metrics. Share performance scores, accessibility compliance status, and user satisfaction metrics regularly with leadership. When UX quality becomes a reported metric, it receives appropriate attention and resources.

Foster collaboration between product design and development. Regular design reviews, shared component libraries, and common quality standards help prevent debt from accumulating. When designers and developers share vocabulary and goals, they create better experiences together.

The systematic approach to UX debt management taken by Telliant Systems has helped clients reduce user task completion times by up to 40% through targeted friction reduction using technical UX improvement strategies.

Your Path Forward

The goal isn’t eliminating all UX debt. That’s unrealistic for any actively developed software. The goal is to manage it effectively, so it doesn’t undermine your user experience goals.

- Start by assessing your current state: Identify one high-friction area using analytics and user feedback. Measure the baseline front-end performance metrics and user satisfaction scores. Implement targeted improvements and measure the impact.

You don’t need to solve everything at once. Sometimes, fixing the five most common user frustrations delivers 80% of the value. Sometimes, laying the groundwork for design system implementation prevents debt. The best approach depends on your users’ needs, your team’s capabilities, and your business goals. UX debt correction should be a purposeful, ongoing exercise, not an occasional cleanup.

If you’re evaluating how to address UX debt in your organization, our team at Telliant Systems has extensive experience helping companies establish sustainable practices. Explore our software product development services to understand how we approach these challenges, or learn more about our user experience design process to see how we build quality into every interaction.

Businesses initially considered Blockchain as the technology that enabled Bitcoin, not as a platform for developing secure, scalable enterprise software solutions. At present, blockchain-based business software plays a central role in enterprise-grade innovation by enabling secure, transparent, and verifiable systems for complex digital operations.

What started as a decentralized ledger for cryptocurrency has matured into a strategic foundation for trust-driven business software and enterprise blockchain applications. In their pursuit of secure automation, reliable data management, and digital transformation, companies no longer view blockchain as a disruptive experiment but as a viable technology.

The value of Blockchain for business software does not come from market excitement, but from how effectively it strengthens modern software ecosystems.

Key Benefits of Blockchain for Business Software

Blockchain’s value in business software depends on operational security and dependability rather than speculation.

Several fundamental advantages can explain its increasing use.

1. Data Immutability and Auditability

Once data is recorded on a blockchain, it cannot be changed without the agreement of the network. This creates tamper-resistant records that support compliance, dispute resolution, and regulatory audits.
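The mechanism behind this property can be sketched as a hash chain, where each record stores the hash of the one before it. This is a single-process illustration only: it has no network, consensus, or signatures, and the `block_hash`, `append`, and `is_valid` helpers are hypothetical names.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # Placeholder "previous hash" for the first block.


def block_hash(index: int, data: dict, prev_hash: str) -> str:
    """Hash a block's contents together with the previous block's hash."""
    payload = json.dumps(
        {"index": index, "data": data, "prev_hash": prev_hash}, sort_keys=True
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(chain: list[dict], data: dict) -> None:
    """Add a block that is linked to the current end of the chain."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    index = len(chain)
    chain.append(
        {
            "index": index,
            "data": data,
            "prev_hash": prev_hash,
            "hash": block_hash(index, data, prev_hash),
        }
    )


def is_valid(chain: list[dict]) -> bool:
    """Recompute every hash and check that each link points to its parent."""
    prev_hash = GENESIS_HASH
    for position, block in enumerate(chain):
        if block["index"] != position or block["prev_hash"] != prev_hash:
            return False
        expected = block_hash(block["index"], block["data"], block["prev_hash"])
        if block["hash"] != expected:
            return False
        prev_hash = block["hash"]
    return True


ledger: list[dict] = []
append(ledger, {"invoice": "A-1", "amount": 100})
append(ledger, {"invoice": "A-2", "amount": 250})
print(is_valid(ledger))  # True

ledger[0]["data"]["amount"] = 999  # Retroactive edit of an old record.
print(is_valid(ledger))  # False
```

An edit to any earlier record changes its hash and breaks every later link, which is why tampering is detectable. In a real network, many nodes hold copies and must agree before a change is accepted.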

2. Decentralized Trust

Businesses lessen their reliance on centralized databases, which are open to insider threats, outages, and breaches. System resilience is strengthened by the distribution of trust among several nodes.

3. Process Automation Through Smart Contracts

Smart contracts automate business rules and initiate operations when the criteria are satisfied. This reduces manual intervention, accelerates workflows, and minimizes operational risk.
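The rule-triggered behavior can be illustrated with a plain Python simulation of an escrow that releases payment once agreed conditions are recorded. This is not on-chain code or any specific platform's contract language; the `DeliveryEscrow` class and its event names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class DeliveryEscrow:
    """Holds a payment and releases it once both conditions are met."""
    amount: float
    delivered: bool = False
    inspected: bool = False
    released: bool = False
    log: list[str] = field(default_factory=list)

    def record(self, event: str) -> None:
        """Record a business event, then apply the release rule."""
        if event == "delivered":
            self.delivered = True
        elif event == "inspected":
            self.inspected = True
        else:
            raise ValueError(f"unknown event: {event}")
        self.log.append(event)
        self._settle()

    def _settle(self) -> None:
        """Release funds automatically; no manual approval step exists."""
        if self.delivered and self.inspected and not self.released:
            self.released = True
            self.log.append(f"released {self.amount}")


escrow = DeliveryEscrow(amount=1000.0)
escrow.record("delivered")
print(escrow.released)  # False
escrow.record("inspected")
print(escrow.released)  # True
```

On a blockchain, the equivalent logic would run on every participating node, so no single party could skip the rule or release funds early.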

4. Enhanced Data Transparency

Stakeholders can see shared transactions in real time without disclosing private company information.

5. Security at the Architecture Level

Blockchain software architecture is designed to provide cryptographic security for transaction validation, access control, and data exchange layers.

Together, these capabilities make blockchain applications in enterprises particularly effective for multi-party business environments where trust, verification, and automation are critical.

Real-World Applications Across Industries

Blockchain is gradually moving out of the realm of pure experiments and test scenarios and is being implemented by companies in their production environments with visible results.

Supply Chain

Blockchain enables complete traceability in production, retail distribution, logistics, and procurement. Every transaction is recorded permanently and cannot be altered retroactively. This ensures product authenticity, reduces counterfeiting, improves recall management, and strengthens supplier accountability.

Healthcare

Reliable sharing of medical data is one of the most significant uses of blockchain technology in healthcare. Healthcare providers can share patient records, lab reports, and diagnostic images securely without compromising privacy. Unchangeable audit trails also support compliance and help detect unauthorized access to data.

Finance

Insurance claims, trade settlements, loan approvals, and compliance checks are all automated via smart contracts. Blockchain improves transaction integrity in financial operations, speeds up verification, and removes reconciliation errors.

IoT

Billions of connected devices generate data all the time. Blockchain allows secure communication between machines by validating device identities, securing firmware updates, and ensuring the integrity of sensor data.

Identity

Decentralized identity solutions place users in control of their credentials. Instead of multiple centralized identity providers storing sensitive data, Blockchain enables secure identity verification without permanently exposing personal information.

Key Enterprise Use Cases at a Glance

| Industry | Primary Use Case | Business Impact |
|---|---|---|

Integrating Blockchain into Existing Software Systems

One of the most common misconceptions is that Blockchain requires a complete system replacement. In reality, successful blockchain integrations follow a layered and modular approach.

Usually, Blockchain is introduced as a secure transaction layer alongside databases, enterprise systems, and existing applications. Blockchain networks are linked to analytics tools, CRM platforms, and ERP systems via APIs. Smart contracts manage automated business logic, while traditional interfaces handle user experience.

A well-designed Blockchain software architecture includes:

- Hybrid or permissioned networks for business compliance and security

- Integration of legacy authentication systems with identity and access management

- Layers of interoperability for data synchronization with current platforms

- Infrastructure for high-availability nodes with disaster recovery plans

The key to successful software integration is to move away from the idea of switching to new systems and instead enhance existing systems with features such as verifiable trust, automation, and tamper-resistance enabled by blockchain technology.

When Blockchain Makes Sense and When It Does Not

Despite its strength, Blockchain is not appropriate for every workflow or system. Whether its qualities align with particular corporate goals and technical specifications determines its value.

Blockchain is a strong fit when:

- Shared access to reliable and consistent data is necessary for numerous stakeholders.

- Compliance or governance requires data integrity, traceability, and auditability.

- Smart contracts are essential for the automated, rules-based execution of business workflows across organizations.

- Instead of being controlled by a single authority or database owner, trust needs to be distributed.

Blockchain may not be appropriate when:

- One organization owns and controls all system data, making decentralization unnecessary.

- The system must support very high transaction throughput with extremely low latency.

- Data needs frequent updates, deletions, or retroactive changes that conflict with immutability.

- Existing database technologies already meet the required security, compliance, and performance needs.

Conclusion

Blockchain has evolved from supporting cryptocurrency into a key enterprise technology that drives automation, security, and trust across digital environments and modern software ecosystems.

Blockchain is no longer an emerging trend for executives managing complex corporate systems. It has developed into an impressive architectural tool for creating secure, transparent, and resilient corporate software. Blockchain for business software offers long-term competitive advantages, operational trust, and regulatory confidence when used precisely and purposefully.

As blockchain applications in enterprises continue to mature, organizations that adopt it strategically will define the next era of secure, automated, and trust-driven digital infrastructure.

What is Custom Software Development?

As digital demands grow, many organizations require software that supports their specific workflows and system environments. This need has increased the focus on custom software development as a strategic solution. These solutions are developed to align with business objectives, automate domain-specific processes, and function as a strategic digital asset that evolves with the organization.

Definition of Custom Software

Custom software applications match a customer’s specific expectations. Unlike off-the-shelf products, developers do not create them for general use but tailor them for a particular business or user group.

Key Characteristics

- Tailored: Designed to match specific workflows and business logic.

- Integrated: Works efficiently with both current and legacy systems.

- Scalable: Expands as the number of users, features, and system demands grows.

Why Companies Choose Custom Applications Over Off-the-Shelf Software

When commercially available software cannot support their specific operational requirements, integration landscape, or long-term technology strategy, companies turn to custom applications.

Custom applications allow organizations to design systems that align with their business logic, integrate with existing and legacy systems, scale with future demand, and maintain complete control over features, security, and data.

Custom-Built Apps vs. Off-the-Shelf Apps

| Feature | Custom-Built Apps | Off-the-Shelf Apps |

|---|---|---|


Benefits of Custom Software

Custom software lets leaders build systems that extend current architectures, integrate with legacy systems, and support future modernization. It also strengthens the organization’s security, governance, and control over its technology landscape.

Who Benefits from Custom Software?

- CTOs and CIOs: With custom software, leaders can create systems that not only expand current architectures but also easily integrate with legacy systems and facilitate future modernization.

- CEOs and Business Executives: By improving business operations, custom software development supports strategic initiatives and digital transformation.

High-Level Benefits of Custom Software

- Scalability: Custom software can expand as the organization grows, supporting higher user volumes, additional features, increased data loads, and evolving architectural needs.

- Flexibility: Because custom software is designed around specific workflows and business logic, it can be modified or extended as requirements change. Organizations can adapt the system at any stage without being constrained by vendor roadmaps.

- Automation: Custom software can streamline even complex business processes by reducing manual work, improving process accuracy, and increasing operational efficiency across departments.

- Innovation: Custom-built systems give companies the freedom to add new features, differentiate their products, and explore the latest technologies.

Industry challenges and trends

Modern firms increasingly depend on software for operations, consumer interactions, compliance, and innovation. However, traditional off-the-shelf solutions struggle to keep up with changing market dynamics, growing data volumes, and frequent shifts in corporate strategy.

Why Companies Struggle with Off-the-Shelf Solutions

- Integration and legacy system challenges: Most firms run their own internal platforms and rely on legacy systems. Off-the-shelf software often cannot fully integrate with such environments, so teams have to change their processes to fit the tool, which adds manual tasks and system complexity.

- Rising long-term cost: Initial expenses may be small, but costs increase as the business expands because of license fees, user-based pricing, and paid add-ons.

- Scaling Issues and Operational Challenges: As businesses grow, off-the-shelf solutions often struggle to handle increasing user numbers, higher system load, and complex data requirements. As a result, there are issues with performance, reliability, and user experience.
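The cost point above can be made concrete with a little arithmetic. The sketch below projects yearly spend under per-user pricing as headcount grows. Every figure in it (user counts, growth rate, seat price, add-on fee) and both function names are hypothetical placeholders chosen for illustration, not vendor data.

```python
def annual_license_cost(users: int, price_per_user_month: float,
                        addons_per_year: float = 0.0) -> float:
    """Yearly cost of a per-seat subscription plus flat paid add-ons."""
    return users * price_per_user_month * 12 + addons_per_year


def projected_costs(start_users: int, yearly_growth: float, years: int,
                    price_per_user_month: float,
                    addons_per_year: float = 0.0) -> list[float]:
    """Project the yearly license cost while the user base grows each year."""
    costs = []
    users = start_users
    for _ in range(years):
        costs.append(annual_license_cost(users, price_per_user_month, addons_per_year))
        # Assumption: headcount grows by a fixed percentage every year.
        users = round(users * (1 + yearly_growth))
    return costs


# Hypothetical scenario: 100 users, 20% yearly growth, 25 per seat per month,
# and 5,000 per year in paid add-ons.
three_year_costs = projected_costs(100, 0.20, 3, 25.0, 5000.0)
```

Under these assumed inputs, the yearly bill rises with every increase in headcount even though the price per seat never changes, which is the effect described in the cost bullet.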

Rapid Change in Feature Requirements

Modern business environments require software to evolve continuously. Customer expectations change quickly, regulatory requirements are frequently updated, and emerging technologies constantly reshape digital operations. Without architectural flexibility, companies are limited in their ability to try out new ideas, change their workflows, and launch different capabilities. This reduces an organization’s agility, weakens its competitive position, and limits long-term technology viability.

Software Development Lifecycle

What is SDLC?

SDLC stands for Software Development Lifecycle. It is the name given to the stages of building and shipping a piece of software – whether that piece of software is a complete flagship product, or just a minor patch. The software development lifecycle comprises much more than just writing and deploying code. It also encompasses user analytics, assessing your existing software, planning, testing, maintenance, and gathering data once the code has shipped to determine where to focus your efforts next.

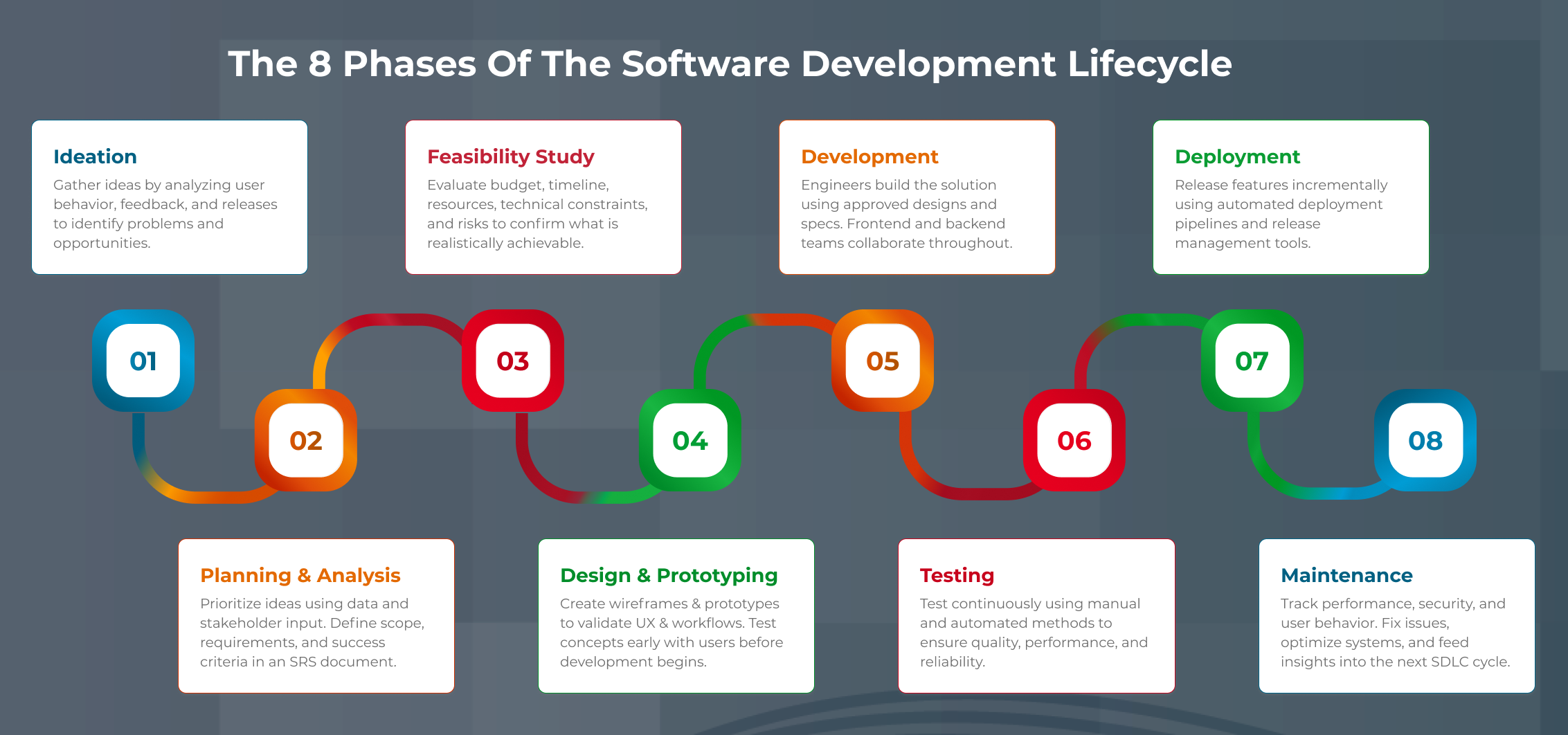

The 8 phases of the Software Development Lifecycle

1. Ideation

Although this is listed as step one, this isn’t actually where the process begins. SDLC is a cycle, and the process really begins at the end of the previous cycle, when you’re monitoring the last piece of software you launched. Observing the way users engage with the software you already have is a big part of figuring out what to build next and is a key component of the ideation step.

This is the part of the process for blue-sky thinking. The ideas you come up with may not be concrete or executable, but they should all be considered valid at this stage. Write everything down. This is a brainstorm. The time for whittling things down comes later.

2. Planning and Analysis

Once you’ve generated all your ideas, it’s time to start narrowing them down. This is done by analyzing your current system and prioritizing your needs. Data that you’ve gathered from previous cycles, or from tracking user behavior, should guide your decisions. Involve all relevant stakeholders in the decision-making process.

At this point the SRS (Software Requirements Specification) document should be generated.

3. Feasibility Study

This is part of the planning process – specifically the part where resource and budget constraints are taken into consideration. You need to consider economic factors, legal concerns, logistics, time frames, and so on. Use the SRS document you generated in the previous step to support this analysis.

4. Design and Prototype

The design and prototyping phase typically skews heavily toward front-end design and user experience. You may want to build some wireframes and put them in front of real users to see if your ideas work. If you don’t have the ability to wireframe, gather data through surveys, or put designs in front of users to get their reactions.

This step is crucial, because it allows you to build a simple interactive version of your product and see how users actually interact with it before you spend time coding, testing, and deploying.

5. Development

Developers and engineers will be given any specs, designs, wireframes, and documents you generated in previous steps and will begin coding the actual software. Ideally, engineers will have been involved in the process long before this point to provide insight into the limitations and feasibility of the designs.

Do not wait until this step to involve engineers! Yes, this is the step where they are most utilized, but their feedback and opinions should be solicited long before they are given specifications to code. This includes both front-end and back-end engineers.

6. Testing

Testing should really be considered part of the development step, as you shouldn’t wait until development is complete to begin testing. Engineers should be writing unit tests for every module of code they build, and if possible, manual testing should be happening throughout the development process. As soon as something is usable enough to test, start testing it!

Much of the testing process should be automated, and the approach to automated testing (e.g. which automated testing tools to use) should be discussed as part of the planning and design process.
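As a minimal sketch of what a unit test for a single module can look like, the example below uses Python's built-in `unittest` framework. The `apply_discount` function is a hypothetical stand-in for whatever module your engineers are building, and the tool choice is only an assumption for illustration; your team may well settle on a different framework during planning.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical module under test: reduce a price by a percentage."""
    if price < 0:
        raise ValueError("price must be non-negative")
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTest(unittest.TestCase):
    """One small test case per behavior the module promises."""

    def test_typical_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_zero_percent_leaves_price_unchanged(self):
        self.assertEqual(apply_discount(80.0, 0), 80.0)

    def test_invalid_percent_is_rejected(self):
        with self.assertRaises(ValueError):
            apply_discount(80.0, 120)
```

Saved to a file, tests like these can be run with `python -m unittest` on every commit, which is one simple way to make testing part of development rather than a separate step at the end.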

7. Deployment and Delivery

As with testing, deployment doesn’t just happen at the end of the process. Features should be deployed incrementally throughout the SDLC, using a suite of ARA (Application Release Automation) tools. The types of ARA tools to use should be discussed during the planning process and should be continuously evaluated for their efficiency.

8. Monitoring and Maintenance

Work isn’t complete once the code is deployed. Monitoring and maintenance are an ongoing process that never ends as long as a piece of software is live. The good news is that there are plenty of automated tools to aid in this step of the process too.

Monitoring and maintenance involve not just monitoring for breaks and deploying bug fixes, but also tracking user behavior and analyzing data, monitoring performance and security, and using the data gathered from this step to plan the next launch.

Software Requirements

Best Practices for Analyzing Your Software Requirements

Before you can begin building your next piece of software, you’ll need to make a thorough audit of your current system and analyze your needs. You’ll also need to think about reconciling the needs of users with the needs of your business. This is an important part of the planning process, and there are several things you can do to set yourself up for success down the road.

Define business objectives

First of all, figure out what it is you’re hoping to achieve with this software. This sounds obvious, but funnily enough, it’s a step many businesses seem to forget about. Why are you building this software? What concrete KPIs and results do you hope to see? Will it drive higher engagement from your existing users? Attract new users? Generate income? Determining this ahead of time will have a large impact on the direction your development goes.

What do my users need to do with my application?

The next step is aligning your business goals with the needs of your users. The best way to do this is to talk to your users! That can mean literally talking to them, gathering data through surveys, or analyzing user behavior with the data you’re already collecting through your existing software.

Software Development Methodology

What is the best software development methodology to choose, and what are the differences?

Words like Agile, Lean, Scrum, and RAD sound like buzzwords (and these days, they kind of are), but they are also useful strategies for building software. In this article, we’re not going to dive into specifics of each type of approach but rather give a broad overview of what a software development methodology is and why it is useful.

What is software development methodology?

A software development methodology is simply an approach to building software. Most popular methodologies today closely follow the SDLC. In fact, the terms for methodologies are sometimes used interchangeably with the software development lifecycle, because methodologies like Agile were actually partially responsible for defining the SDLC as we know it today.

What are the most popular methodologies?

These days, the most popular software development methodologies are:

- Agile Software Development: Agile software development is an iterative process in which work is broken into short sprints, typically one to four weeks long, with continuous testing and regular user feedback. Instead of working on one large project for weeks or months, Agile teams break work into smaller features, release them quickly, and test them with users to validate direction.

- Lean Software Development: Lean development emphasizes waste reduction, openness, and communication. Engineers are encouraged not to commit too early to specific ideas and to identify bottlenecks as soon as possible. Teams remain open-minded throughout the cycle, considering all factors before finalizing decisions.

- Scrum Development: Scrum is built on Agile and is considered one of the most flexible methodologies. Key roles include the Product Owner, Scrum Master, and Development Team. The Product Owner ensures the work aligns with client expectations, the Scrum Master guides the team through the Scrum process, and the Development Team executes the work. Development happens in fixed-length sprints of up to four weeks, promoting rapid iteration and frequent testing.

Do I have to choose a methodology?

While it’s not necessary to choose a specific methodology, it can be helpful to name your process so that everyone on the team is on the same page about how things will go. Choosing a popular methodology also means you’ll be part of a community that shares the methodology, and you’ll find lots of resources to answer any questions you may have about how to proceed with each stage of development.

Technology Stack

What is a software development tech stack?