Where should we begin on the journey toward transforming patient care? Generally, people are aware of patient portals, mobile prescriptions, and perhaps even the latest generation of imaging diagnostics. However, these are just the beginning of what healthcare technology can do. In fact, the potential of technology to change lives and improve the patient care experience is substantial. Technology integration in healthcare plays a pivotal role in this process, connecting various systems, streamlining workflows, and enabling seamless data sharing, which enhances the overall quality of care.

Redefining Patient Care with Technology Integration

Technology integration in healthcare has the potential to completely transform how we give and receive care. Healthcare software development is critical to creating innovative solutions that improve patient outcomes, increase productivity, and promote seamless communication across the healthcare system. Let’s look at how these advancements lead to a healthier future and better patient care:

- Imagine the impact of early cancer detection—identifying stage-one cancer cells when they are still highly treatable.

- Consider a patient with a chronic disease whose glucose or blood pressure monitor alerts them to a potentially life-threatening issue before it escalates and sends them to the hospital or, in the worst case, proves fatal.

- Give physicians a comprehensive picture of their patients’ health by providing access to all the resources they need through a single platform that seamlessly integrates medical records, test results, and diagnostic insights.

- Empower clinicians to make faster, better-informed decisions; this degree of technology integration redefines the standard of care and saves lives.

Why are MedTech Integrations So Important?

Traditional healthcare faces many challenges, from data that is highly fragmented to systemic inefficiencies with administrative workflows, communication interruptions, scheduling, reactive vs. preventative care, supply chain, and workforce burnout, to name just a few. These hinder the delivery of high-quality, timely, and cost-effective care. MedTech Integrations address these and many more inefficiencies.

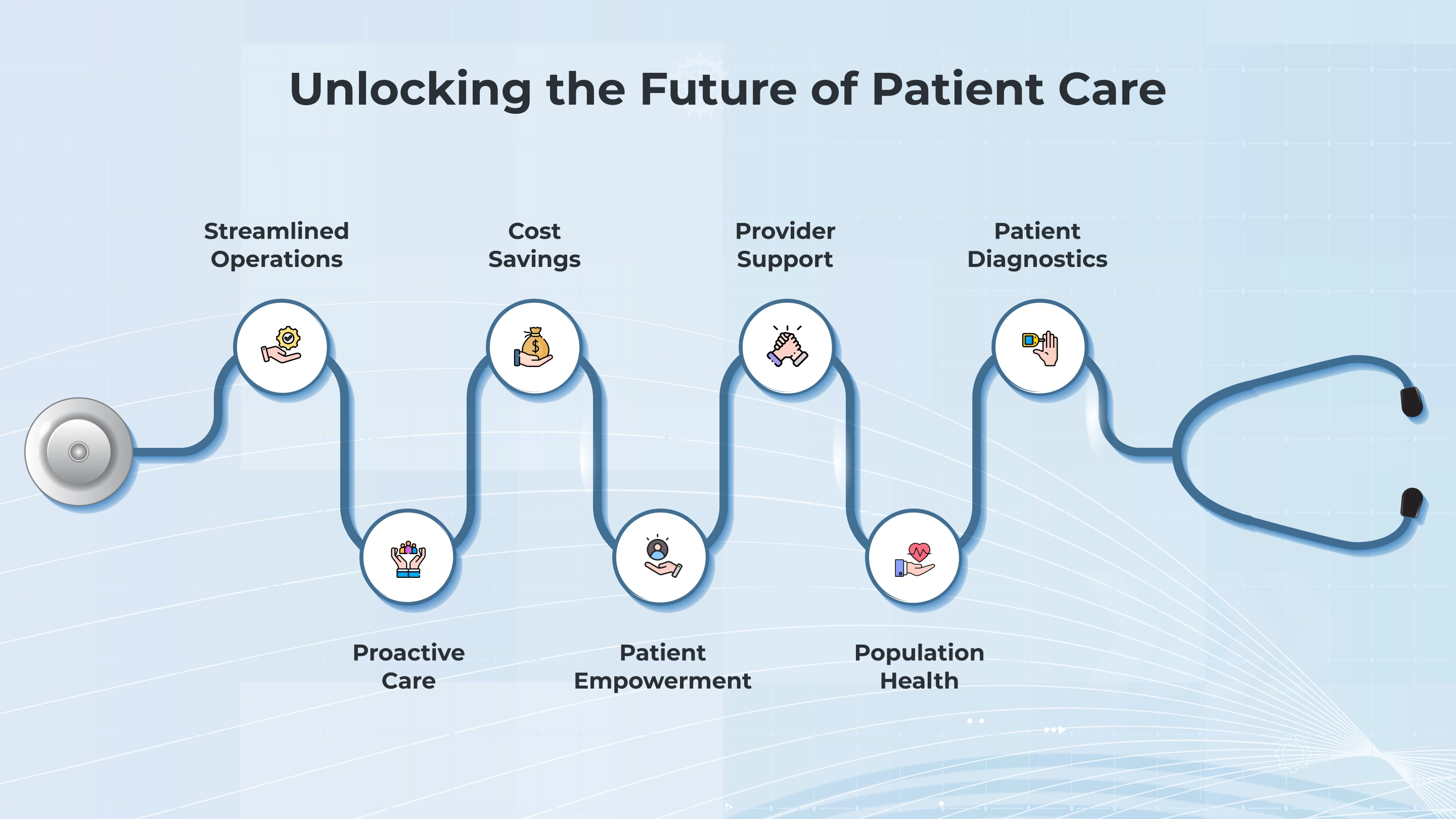

Automating processes, providing real-time insights, and enabling interoperability help to create an efficient, connected, patient-centered, sustainable system. Key benefits include:

- Streamlined Operations: Automation and interoperability reduce manual paperwork with tools like automated billing, digital scheduling, and e-prescriptions.

- Patient-Centered Diagnostics and Treatment: Real-time access to data and AI-driven tools improves accuracy and reduces delays in starting treatment.

- Proactive Care: Remote Patient Monitoring (RPM), combined with data integrations and predictive analytics, helps identify and treat issues quickly.

- Cost Savings: Sharing data across health systems reduces waste from redundant systems, and inventory tracking optimizes the supply chain so that resources are used wisely.

- Patient Empowerment: Connected devices and apps engage patients and enable them to manage their health.

- Provider Support: Workflows are an organization’s lifeblood; improving them with enhanced automation saves clinicians time and reduces burnout.

- Population Health: The large volume of data now being collected can be used to help both individual patients and the wider community.

Significant Technology Integrations to Improve Patient Care

1. AI-driven diagnostics and Imaging

AI-driven diagnostics and imaging are in their infancy. Still, two cases showcase how AI can enhance diagnostic accuracy and speed, ultimately improving patient care and supporting healthcare professionals in their decision-making:

- Overview: Google Health has built an advanced AI model for accurately detecting breast cancer in mammograms. Google used an extensive collection of mammogram images to find patterns associated with cancerous tissue.

- Impact: In clinical evaluations, this AI system performed well when compared with human radiologists in both sensitivity (accurately identifying cancer) and specificity (reducing false positives). It also reduced radiologists’ workloads by identifying cases that require further examination, thereby enhancing overall diagnostic efficiency.

- Overview: Zebra Medical Vision provides AI-powered tools for analyzing medical imaging data (such as CT scans, MRIs, and X-rays). The company’s platform is designed to detect various diseases, including cardiovascular issues, lung diseases, and cancers.

- Impact: Zebra’s AI algorithms have been integrated into several healthcare systems to assist radiologists in diagnosing and prioritizing cases. Its technology helps detect abnormalities in medical images more quickly and accurately, leading to earlier diagnosis and better patient outcomes.

2. IoMT, RPM and Wearables

IoMT, RPM, and wearables improve care by enabling continuous monitoring and timely, highly personalized interventions. One case shows the promise of these technologies: the Dexcom Continuous Glucose Monitoring (CGM) System, used with Remote Patient Monitoring (RPM), improves care in several ways:

- Better Management of Diabetes: By monitoring their blood sugar levels in real time, patients can immediately adjust their diet, exercise, or insulin use and reduce the risk of harmful glucose spikes or drops.

- Early Intervention: By setting up alerts for abnormal glucose levels, healthcare professionals can take action before a patient has a crisis (such as hypoglycemia or hyperglycemia), which lowers the number of hospitalizations and emergencies.

- Improved Patient Engagement: With continuous access to their health information and remote assistance from their care team, patients feel more capable of managing their condition.
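The alerting idea described above can be sketched in a few lines of Python. This is only an illustration and not Dexcom’s API: the `GlucoseReading` type, the helper functions, and the 70 and 180 mg/dL limits are assumptions made for the example, and real alert limits are set for each patient by their care team.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class GlucoseReading:
    """One CGM sample (hypothetical structure for this sketch)."""
    minutes: int   # minutes since monitoring started
    mg_dl: float   # glucose concentration in mg/dL


# Illustrative limits only; actual limits are set per patient by clinicians.
LOW_MG_DL = 70.0
HIGH_MG_DL = 180.0


def classify_reading(reading: GlucoseReading) -> Optional[str]:
    """Return "low" or "high" for an out-of-range reading, else None."""
    if reading.mg_dl < LOW_MG_DL:
        return "low"
    if reading.mg_dl > HIGH_MG_DL:
        return "high"
    return None


def alerts_for(readings: List[GlucoseReading]) -> List[str]:
    """Build one human-readable alert per out-of-range reading."""
    messages = []
    for reading in readings:
        label = classify_reading(reading)
        if label is not None:
            messages.append(
                f"t={reading.minutes} min: {label} glucose ({reading.mg_dl:.0f} mg/dL)"
            )
    return messages


if __name__ == "__main__":
    sample = [GlucoseReading(0, 110), GlucoseReading(5, 65), GlucoseReading(10, 190)]
    for message in alerts_for(sample):
        print(message)
```

A production system would also consider the rate of change and sensor gaps, and would route alerts to the care team rather than print them.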

IoMT has many benefits, but one with especially significant impact is fewer hospital visits. By facilitating proactive, ongoing, and remote care, IoMT holds promise for improving the management of medical conditions outside of conventional clinical settings. By transforming care from reactive to proactive, IoMT improves patient outcomes, lowers hospital admissions, and eases the strain on healthcare systems.

3. Remote Patient Monitoring

Remote patient monitoring is fast becoming a necessary tool for managing chronic illness. AI is being used to detect health problems such as heart attacks early so that medical professionals can act before conditions worsen. The main advantages are:

- Preventative care and customized alerts

- Integration of Telemedicine After Surgery and After Discharge

- Improved Adherence and Patient Engagement

- Improved Care of the Elderly and Vulnerable Groups

The Future is Bright and Connected

AI is poised to turn healthcare into a proactive rather than reactive ecosystem, offering transformative benefits across the industry. From enhancing interoperability and securing health data to advancing diagnostics and streamlining administrative tasks and workflows, AI and the wider set of MedTech integrations have the potential to create a seamlessly connected healthcare ecosystem. By optimizing efficiency and reducing operational burdens, AI lets providers focus on delivering high-quality patient care while improving overall system performance.

Patient-centered care is a big challenge, but if we succeed, many areas of the healthcare sector will benefit. Improved access, ongoing coordination, and individualized care are the foundations of a patient-centered healthcare ecosystem. AI-driven insights can enable customized therapies, early detection and intervention, better patient outcomes, and fewer hospitalizations. Interoperable patient health records contribute to coordinated care while lowering errors and duplication. Meanwhile, empowering patients to use telehealth and remote monitoring, with real-time insights and stronger provider connections, fosters engagement and proactive health management.

It’s Only the Beginning!

We all know that MedTech integrations can have a big impact on healthcare, but their success depends on how safe, effective, and efficient they are. To enhance patient care and community health, it is critical to promote the growth of these technologies among the many stakeholders in the healthcare ecosystem. Technology integration facilitates interoperability, and data security plays a key role in this development by keeping health information both safe and available.

As the industry advances, healthcare technology providers must prioritize robust safeguards while enabling seamless data exchange to build a more connected, secure, and patient-centric healthcare system. This is just the beginning of a transformative journey, with countless opportunities to unlock value.

Software releases are frequent, competition is strong, and user expectations for flawless experiences are higher than ever. A minor defect in a mission-critical application can lead to millions in lost revenue, reputational damage, and even regulatory penalties.

This is why QA testing is no longer a “final step” in software delivery; it’s a crucial part of the development lifecycle, from concept to production. According to MRFR, the outsourced software testing market, valued at USD 26.33 billion in 2024, is expected to reach USD 99.37 billion by 2034, growing at a 14.20% CAGR.
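As a quick sanity check, the market figures quoted above can be related through the standard compound annual growth rate formula. The sketch below assumes ten compounding periods between 2024 and 2034; the function names are illustrative.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by a start and end value."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding `rate` annually for `years` periods."""
    return start_value * (1 + rate) ** years


if __name__ == "__main__":
    # Figures from the text: USD 26.33 billion (2024), USD 99.37 billion (2034).
    implied = cagr(26.33, 99.37, 10)
    print(f"Implied CAGR: {implied:.2%}")
    print(f"26.33 compounded at 14.20% for 10 years: {project(26.33, 0.142, 10):.2f}")
```

Under that assumption the implied rate comes out at roughly 14.2%, so the quoted start value, end value, and growth rate agree with each other to within rounding.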

Forward-thinking companies are not just looking for a QA testing company to “execute scripts.” They need a partner who can:

- Embed automation testing into CI/CD workflows.

- Apply domain-specific test strategies that meet stringent compliance needs.

- Provide performance testing that scales with user growth.

- Deliver measurable ROI via reduced MTTR and improved release predictability.

What Makes a Great QA Testing Company?

For technical decision-makers, the differentiators go beyond cost and headcount. A strong QA partner offers:

- Comprehensive Testing Coverage: A great partner provides end-to-end QA testing services, including functional testing, automation testing, performance testing, API testing, mobile app testing, security testing, and usability validation.

- Domain Expertise: QA without context leads to missed edge cases. Partners with sector-specific knowledge, like HIPAA compliance in healthcare or PCI-DSS in fintech, can design tests that address real-world business and regulatory needs.

- Agile & DevOps Alignment: Modern QA teams integrate seamlessly into Agile and DevOps workflows, running tests in CI/CD pipelines, enabling shift-left practices, and collaborating with developers on defect prevention, not just detection.

- Scalable Resourcing: Release cycles aren’t stable; the right QA testing company can scale teams up during product launches or down during maintenance periods without compromising delivery quality.

- Advanced Tooling & Frameworks: Expertise in leading tools such as Selenium, Cypress, JMeter, Postman, and Appium is a must.

- Proven Metrics & Reporting: Defect density, test coverage, mean time to detect (MTTD), and mean time to repair (MTTR) are critical benchmarks.
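To make the reporting metrics concrete, here is a minimal sketch of how they are commonly computed. The definitions used are assumptions for illustration (defects per thousand lines of code, time from introduction to detection, time from detection to resolution); teams often define these slightly differently, and the `DefectRecord` type is hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Sequence


@dataclass
class DefectRecord:
    """Timestamps for one defect (hypothetical structure for this sketch)."""
    introduced_at: datetime
    detected_at: datetime
    resolved_at: datetime


def defect_density(defect_count: int, size_kloc: float) -> float:
    """Defects per thousand lines of code (KLOC)."""
    if size_kloc <= 0:
        raise ValueError("size_kloc must be positive")
    return defect_count / size_kloc


def _mean_delta(deltas: Sequence[timedelta]) -> timedelta:
    """Arithmetic mean of a non-empty sequence of durations."""
    if not deltas:
        raise ValueError("at least one defect record is required")
    return sum(deltas, timedelta()) / len(deltas)


def mean_time_to_detect(defects: Sequence[DefectRecord]) -> timedelta:
    """Average time between a defect being introduced and being detected."""
    return _mean_delta([d.detected_at - d.introduced_at for d in defects])


def mean_time_to_repair(defects: Sequence[DefectRecord]) -> timedelta:
    """Average time between a defect being detected and being resolved."""
    return _mean_delta([d.resolved_at - d.detected_at for d in defects])


if __name__ == "__main__":
    records = [
        DefectRecord(datetime(2024, 1, 1, 0), datetime(2024, 1, 1, 4), datetime(2024, 1, 1, 10)),
        DefectRecord(datetime(2024, 1, 2, 0), datetime(2024, 1, 2, 8), datetime(2024, 1, 2, 10)),
    ]
    print("MTTD:", mean_time_to_detect(records))
    print("MTTR:", mean_time_to_repair(records))
    print("Defect density:", defect_density(12, 48.0), "per KLOC")
```

Tracking these values per release, rather than as one-off numbers, is what makes them useful as benchmarks.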

Top 10 QA Testing Companies in the USA

While the USA has a thriving QA outsourcing market, some companies stand out for their technical capability, industry focus, and ability to deliver quality at scale.

- Telliant Systems

  Services: Full-spectrum QA testing services, including automation testing, performance testing, regression testing, API testing, mobile app testing, functional testing, and security testing.

  Strengths: Telliant incorporates QA directly into Agile delivery pipelines, ensuring faster feedback cycles and higher defect detection rates. With domain expertise in healthcare, fintech, logistics, and SaaS, Telliant combines industry knowledge with technical precision.

  Highlight: For a healthcare SaaS provider, Telliant reduced release cycle time while maintaining strict HIPAA compliance, resulting in a faster go-to-market and fewer defects. Holding a 4.9 Clutch rating, the team is praised by clients for its responsiveness and project management, with frequent mentions of budget alignment and clear communication.

- QASource: A small to mid-sized QA testing company that specialises in automation testing and API testing for startups and SMBs. Their offshore delivery model focuses on cost efficiency without sacrificing coverage, especially for web and mobile applications.

- TestMatick: Focused on functional testing, usability, and mobile app testing, TestMatick is well suited to creating custom test plans for niche SaaS products. They’re a good fit for early-stage companies that need manual testing.

- Mindful QA: Mindful QA is entirely US-based and operates remotely, offering both manual and automated testing. Their flexible engagement model suits organizations that need an on-demand QA testing service without long-term contracts.

- DeviQA: Specialists in building automation frameworks, DeviQA is often chosen by smaller software vendors for its ability to ramp up testing coverage quickly during critical release phases.

- QualityLogic: QualityLogic is best known for performance testing and interoperability validation for IoT and smart device manufacturers. They also offer automation testing for connected platforms.

- BugRaptors: BugRaptors offers strong capabilities in security testing and compliance validation, primarily for fintech and healthcare clients, with a focus on penetration testing and vulnerability scanning.

- XBOSoft: Specialising in functional and usability testing, XBOSoft serves healthcare startups and digital health platforms, making sure that regulatory compliance is met alongside quality assurance.

- TestFort: TestFort provides API testing, cross-browser compatibility testing, and automation for small to mid-sized software development teams, and is known for fast turnaround on short projects.

- Abstracta: Abstracta is a boutique performance engineering and QA company offering load, stress, and scalability testing services for web and mobile applications, with a focus on improving user experience under peak load.

Why a Quality Assurance (QA) Tester Is Essential for a Software Development Team

A QA tester’s role is more than bug hunting. Teams can now deploy new features every week, or even daily, but without testing, every release is a gamble. That’s why a quality assurance tester steps in, acting as the last safeguard between code and customer. QA testers strengthen test cases by simulating real user journeys, which helps ensure smooth functionality that meets end-user expectations.

Here’s why they are indispensable:

- Defect Prevention Over Defect Detection: QA testers are brought in early in the software development life cycle, reviewing requirements and prototypes to catch flaws before the code is even completed.

- Safeguarding User Experience: Bugs are not just technical inconveniences; they erode user trust. QA testers verify usability, accessibility, and responsiveness, ensuring a smooth experience across all devices and platforms.

- Compatibility and Cross-Platform Testing: With users accessing products on Android, iOS, Windows, and macOS, checking compatibility is essential. Testers ensure smooth and consistent functionality regardless of the environment.

- Security Validation: QA teams perform vulnerability scanning, penetration testing, and compliance checks against standards such as ISO 27001, HIPAA, and GDPR, which protects businesses from massive costs.

- Performance Benchmarking: Stress, load, and scalability tests prevent the embarrassment of downtime during peak usage. QA testers simulate thousands of concurrent users to confirm resilience.

- Supporting Continuous Integration/Continuous Deployment (CI/CD): Automated regression testing ensures that frequent code pushes don’t unintentionally break existing functionality, enabling rapid yet stable releases.

Why Telliant Systems Could Be Your Ideal QA Partner

When you need a QA testing company that delivers both speed and precision, Telliant offers the balance of technical depth, industry knowledge, and operational flexibility that modern enterprises demand. Clutch.co notes they offer good value for cost, while AmbitionBox rates their workplace 4.5/5, suggesting a positive environment that likely supports strong retention, though exact rates aren’t publicly disclosed.

Telliant QA teams aren’t just a group of people who test; they’re strategic quality advocates embedded throughout the software development lifecycle.

- Full-Cycle QA Capabilities: Covering functional testing, security testing, performance testing, automation testing, API testing, and mobile app testing.

- Deep Domain Expertise: Proven track record in healthcare (HIPAA), fintech (PCI-DSS), and other regulated sectors ensures compliance and quality go hand in hand.

- Flexible Engagement Models: Onshore, offshore, and hybrid models to match client budgets and timelines.

- Technology Stack Compatibility: Proficient in Selenium, Appium, Postman, Cypress, JMeter, and fully integrated with enterprise CI/CD pipelines.

- Agile and DevOps Integration: QA is embedded from sprint planning through production monitoring, enabling continuous improvement.

- Scalability on Demand: Ability to double or triple QA capacity during peak release cycles without impacting delivery timelines.

Schedule a QA consultation or request a test strategy assessment.

How to Choose the Right QA Partner for Your Business?

Selecting a QA partner is a strategic decision. Here are the four key considerations to look at:

- Service fit: Make sure that the provider covers all necessary testing types, including automation testing, performance testing, security testing, API testing, and functional testing.

- Industry experience: A QA partner with relevant domain experience can anticipate industry-specific risks and compliance needs.

- Transparent reporting: Look for clear, frequent updates with actionable insights rather than generic status reports.

- Scalability: Your partner should be able to handle product launches, seasonal demand, or sudden scaling needs without putting quality at risk.

Choosing the Right QA Partner

At the decision-making stage, businesses look for vendors with demonstrated strengths, not just generic service descriptions. Below is a quick comparison table.

| Feature / Company | Telliant Systems | QASource | TestMatick | Mindful QA |
|---|---|---|---|---|

Conclusion

Among the QA testing companies in the US market, Telliant Systems stands out for its full-spectrum testing services, domain expertise, and ability to deliver quality at scale. So, if you are looking for end-to-end QA integration or specialised testing expertise, getting in touch with Telliant can help make your releases faster, safer, and ready to meet industry standards.

Choosing the right enterprise software development company is a consequential decision. Enterprise systems power ERP and CRM workflows, analytics, and compliance. The wrong partner risks brittle integrations, security gaps, runaway cloud spend, and platforms that don’t scale.

A meaningful comparison between providers is not just about the cost; it’s a long-term assessment of operational performance, security posture, and ability to evolve with your roadmap. That’s why evaluating the enterprise software development firms in terms of key benchmarks is crucial before committing to a multi-year engagement.

What to Look for in an Enterprise Software Development Partner?

In enterprise projects, the margin for error is thin. Beyond meeting functional needs, the partner must ensure a stable system, compliance readiness, and seamless integration with existing infrastructure. Based on our experience leading software transformation programs, here are the six core qualities of a high-calibre development firm.

1. Technical Expertise Across Enterprise Stacks

The right partner must have a broad skill set in Java, .NET, Python, and emerging technologies, along with architectural proficiency in microservices, event-driven systems, and cloud-oriented deployment. For instance, enterprises modernizing legacy monoliths often need API-first redesigns with container orchestration, for example using Kubernetes.

2. Scalability and Resource Flexibility

Enterprise needs can change drastically, from adding specialised DevOps engineers mid-project to expanding QA teams for compliance testing. A capable firm can reallocate resources without disrupting delivery velocity, thanks to distributed teams and modular delivery models.

3. Proven Track Record in Complex Integrations

Enterprise software rarely exists in isolation. The ideal partner has a strong portfolio that showcases successful SAP, Salesforce, and Oracle integrations, backed by strong MTTR and low defect density metrics.

4. Security and Compliance by Design

Leading firms incorporate security into the SDLC, applying OWASP standards, zero-trust architecture, and encryption best practices. Compliance readiness for frameworks such as HIPAA, SOC 2, GDPR, or ISO 27001 is mandatory in regulated industries.

5. Transparent Communication and Governance

Executives expect real-time visibility into the project. Strong partners use DevOps dashboards, automated sprint reports, and Jira tracking to provide accountability and build trust.

6. Innovation and Emerging Tech Adoption

The best firms aren’t just implementers; they’re innovators. They can leverage AI/ML for predictive analytics, IoT for more connected ecosystems, or blockchain for secure transaction systems, helping ensure that solutions are future-ready.

Top 5 Enterprise Software Development Companies

Benchmarking the top providers helps organizations understand the competitive landscape. While each of them excels in certain areas, Telliant Systems sets the bar high for technical depth, domain expertise, and client-oriented delivery.

1. Telliant Systems

Telliant is an enterprise software development company that has a proven track record in building scalable, compliance-ready solutions across fintech, healthcare, media, SaaS, and other domains. Known for an API-first architecture approach and CI/CD-driven delivery, Telliant offers flexible onshore, offshore, and hybrid models. The company has deep expertise in HIPAA-compliant healthcare systems, multi-cloud deployments (AWS, Azure, Google Cloud), and microservices replatforming.

2. Cognizant

Cognizant is a renowned leader with strong digital transformation capabilities, especially in integrating enterprise AI and automation into ERP ecosystems. Their core competency is in managed services and large-scale systems for Fortune 500 companies.

3. ScienceSoft

ScienceSoft has been in the industry for more than thirty years, delivering enterprise-grade solutions across manufacturing, banking, healthcare, and retail. They specialise in CRM customization, data analysis, and cloud-native application development.

4. EPAM Systems

EPAM specialises in cloud-native engineering and data-driven solutions. They are proficient in Kubernetes-based platforms, big data analytics solutions, and the deployment of custom AI models for enterprise-scale use cases.

5. Thoughtworks

Thoughtworks focuses on continuous delivery, digital transformation, and organizational change, with a strong command of modern architecture patterns and DevSecOps practices.

How Telliant Systems Stands Out and Why Choose Us

Several enterprise software development firms deliver quality work. Telliant Systems stands out by consistently blending technical excellence with strategic alignment to the client’s objectives.

- Domain Expertise in Regulated Industries: Telliant has delivered systems for highly regulated industries, like healthcare, finance, and logistics, that meet HIPAA, PCI-DSS, and GDPR requirements. This includes securing patient data (tokenization), keeping audit logs, and managing sensitive financial information.

- Accelerated Delivery Through Engineering Excellence: Proprietary frameworks, pre-built components, and a mature DevOps pipeline reduce delivery times compared with industry averages. Clients benefit from faster ROI without compromising quality.

- Multi-Cloud and Hybrid Architecture Mastery: With certified AWS, Azure, and Google Cloud teams, Telliant can design cloud-native systems or hybrid models that optimize cost, performance, and resilience.

- Security-First Development: Security is embedded from design to deployment. Telliant uses automated vulnerability scans, code reviews, and compliance audits, manages secrets securely, and follows recognized standards like NIST and OWASP to protect systems.

- Innovation at the Core: Whether it’s integrating AI-driven analytics, enabling IoT connectivity, or implementing blockchain for a secure audit trail, Telliant delivers solutions designed to protect the integrity of the enterprise.

Conclusion

Choosing the right enterprise software development company is a strategic decision that directly affects scalability, security, and competitive advantage.

There are many capable providers. Telliant Systems offers a rare combination of technical depth, compliance expertise, and innovation-driven delivery.

For enterprises seeking a partner that understands engineering solutions while meeting critical demands, Telliant stands out as a clear leader.

Today’s manufacturing processes rely on a complex network of machinery. From CNCs to assembly line robotics, these systems are the lifeblood of manufacturing – and they need to run reliably. Studies show that unplanned downtime can regularly cost $125,000 per hour, and sometimes as much as $2 million per hour.

Needless to say, anything that prevents machines from experiencing downtime is a highly valuable resource. Predictive maintenance, governed by AI, might just be that resource. Artificial Intelligence (AI) is the key enabler of next-gen predictive maintenance. Let’s explore why it’s a game changer, and how businesses are already leveraging it.

What is Predictive Maintenance?

Predictive Maintenance (PdM) uses real-time data to monitor machines and anticipate equipment failures before they happen. Rather than answering the question “Has this machine failed?” or even “Will this machine fail?” PdM seeks to answer the question “When will this machine fail?”

PdM is different from reactive and preventative maintenance in that it responds to real-time data, rather than an incident, and requires no regular scheduling.

- Reactive Maintenance: Fix the problem after it happens

- Preventative Maintenance: Schedule maintenance at regular intervals

- Predictive Maintenance: Anticipate problems and fix them before they happen
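One simple way to approach the “When will this machine fail?” question is to fit a trend to a degradation signal and extrapolate to a failure limit. The sketch below is a deliberately simplified illustration, assuming a roughly linear trend and a known threshold; the function names, the vibration values, and the limit are hypothetical, and production systems typically use richer models.

```python
from typing import Optional, Sequence, Tuple


def fit_line(times: Sequence[float], values: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(times)
    if n < 2 or n != len(values):
        raise ValueError("need at least two paired samples")
    mean_t = sum(times) / n
    mean_v = sum(values) / n
    var_t = sum((t - mean_t) ** 2 for t in times)
    if var_t == 0:
        raise ValueError("time values must not all be equal")
    cov = sum((t - mean_t) * (v - mean_v) for t, v in zip(times, values))
    slope = cov / var_t
    return slope, mean_v - slope * mean_t


def hours_until_threshold(
    times: Sequence[float], values: Sequence[float], threshold: float
) -> Optional[float]:
    """Estimated hours from the last sample until the trend reaches threshold.

    Returns None when the signal is flat or improving, so no crossing is predicted.
    """
    slope, intercept = fit_line(times, values)
    if slope <= 0:
        return None
    crossing_time = (threshold - intercept) / slope
    return max(0.0, crossing_time - times[-1])


if __name__ == "__main__":
    hours = [0.0, 1.0, 2.0, 3.0]
    vibration_mm_s = [1.0, 2.0, 3.0, 4.0]   # made-up readings
    print(hours_until_threshold(hours, vibration_mm_s, threshold=10.0))
```

The returned estimate is what lets maintenance be scheduled just before the predicted crossing instead of at fixed intervals.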

Why AI is a Game-Changer in Predictive Maintenance

While it’s possible to do predictive maintenance without AI (through threshold monitoring, trend analysis, and statistical modeling), it is much less accurate and powerful. It’s also more labor-intensive, making it costly.

AI is able to take in and analyze massive amounts of data, uncovering patterns humans may miss. Here are a few things AI in manufacturing enables that are harder to achieve with human intervention alone.

- Anomaly Detection: AI identifies deviations from normal performance more quickly than humans can, which makes it more likely that teams can intervene before a problem occurs.

- Failure Prediction: Machine learning models forecast which parts are likely to fail and when. This allows manufacturers to fix only what’s needed, only when it’s needed.

- Root Cause Analysis: AI systems can pinpoint underlying factors, not just symptoms, meaning that analysis of the root cause can be done before failure, not just after.

- Prescriptive Insights: Advanced AI not only predicts failure but also suggests optimal maintenance actions.

With AI-powered prediction, manufacturers experience lower maintenance costs, increased uptime, and improvements in asset utilization and lifespan.
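To give a feel for anomaly detection, here is a small statistical baseline: a rolling z-score that flags readings far from the recent average. It is a stand-in for the idea rather than an AI model, and the window size, the limit of 3 standard deviations, and the sample temperatures are assumptions chosen for illustration.

```python
from statistics import mean, stdev
from typing import List, Sequence


def rolling_zscore_anomalies(
    values: Sequence[float], window: int = 5, z_limit: float = 3.0
) -> List[int]:
    """Return indices whose value deviates strongly from the preceding window."""
    if window < 2:
        raise ValueError("window must be at least 2")
    anomalies = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # Perfectly flat history: treat any change as a deviation.
            if values[i] != mu:
                anomalies.append(i)
            continue
        if abs(values[i] - mu) / sigma > z_limit:
            anomalies.append(i)
    return anomalies


if __name__ == "__main__":
    # Made-up bearing temperatures with one obvious spike.
    temperatures = [10.0, 10.1, 9.9, 10.0, 10.2, 10.1, 25.0, 10.0]
    print(rolling_zscore_anomalies(temperatures))
```

Learned models extend the same principle by modelling many sensors together and adapting to operating conditions, which is where AI adds accuracy over fixed statistical rules.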

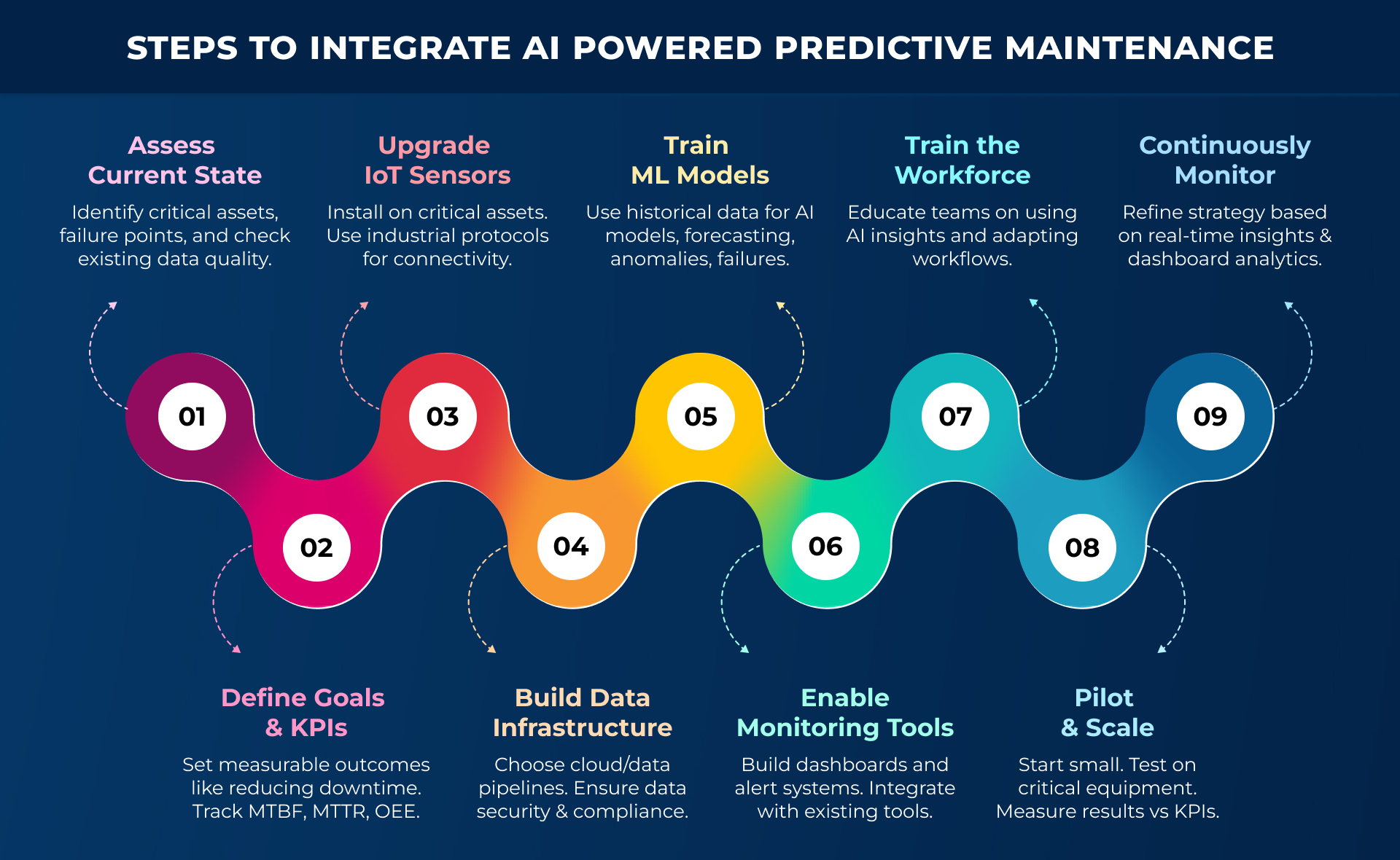

Steps to Integrate AI-Powered Predictive Maintenance in Manufacturing

The process of integrating AI-powered PdM into your manufacturing isn’t easy, but it can be done with some up-front planning and careful forethought. Here’s a brief but clear overview of the steps you’ll need to take to get AI-driven PdM into your manufacturing operations.

1. Assess current maintenance and data landscape

You’ll need a clear overview of your current machines and failure points. Identify critical assets that most impact production. Evaluate your current sensors and data quality.

2. Define objectives and KPIs

Set clear goals like “reduce downtime by X% in X timeframe.” Define KPIs such as Mean Time Between Failures (MTBF), Mean Time To Repair (MTTR), and overall equipment effectiveness (OEE).

3. Install or upgrade IoT sensors

If any new sensors are needed, install and configure them. Test to ensure proper functionality. Deploy on critical equipment first. Use industrial protocols like MQTT or OPC UA for seamless integration.

4. Set up data infrastructure

Decide on a storage solution and upgrade to cloud platforms if need be. Implement pipelines for ingestion, cleaning and normalization. Ensure security and regulatory compliance.

5. Develop or adopt ML models

Use your existing data to train predictive models. Historical failure data and sensor data will help you employ machine learning techniques: anomaly detection, regression, classification, and time-series forecasting.

6. Implement monitoring tools

Create dashboards for asset monitoring and crash visualization. Set thresholds and integrate with existing systems.

7. Train workforce

Workforce training is perhaps the most important step, because it fosters an environment of collaboration and excitement. Provide training sessions on interpreting AI insights and new maintenance workflows, and encourage collaboration.

8. Pilot and scale

Start with a small program on just your most critical machinery. Measure the impact against your KPIs and existing processes.

9. Monitor

Regularly monitor your dashboards and sensors for updates, changes, or issues. Use the insights you gather for strategic asset management or investment planning.
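The KPIs named in step 2 can be computed with a few standard formulas, sketched below. The definitions used are the commonly cited ones (MTBF as operating time per failure, MTTR as repair time per repair, OEE as availability times performance times quality); the function names and sample figures are illustrative assumptions.

```python
def mtbf(operating_hours: float, failure_count: int) -> float:
    """Mean Time Between Failures: operating time divided by number of failures."""
    if failure_count <= 0:
        raise ValueError("failure_count must be positive")
    return operating_hours / failure_count


def mttr(total_repair_hours: float, repair_count: int) -> float:
    """Mean Time To Repair: total repair time divided by number of repairs."""
    if repair_count <= 0:
        raise ValueError("repair_count must be positive")
    return total_repair_hours / repair_count


def oee(availability: float, performance: float, quality: float) -> float:
    """Overall Equipment Effectiveness as the product of three ratios in [0, 1]."""
    for name, ratio in (("availability", availability),
                        ("performance", performance),
                        ("quality", quality)):
        if not 0.0 <= ratio <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    return availability * performance * quality


if __name__ == "__main__":
    # Made-up month of data for one machine.
    print("MTBF (h):", mtbf(operating_hours=720.0, failure_count=4))
    print("MTTR (h):", mttr(total_repair_hours=10.0, repair_count=4))
    print(f"OEE: {oee(0.90, 0.95, 0.99):.1%}")
```

Computing the same figures before and after a pilot gives a like-for-like baseline for judging whether the program is meeting its goals.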

Core Technologies Behind AI-Driven Predictive Maintenance

AI-driven PdM relies on a few crucial components and technologies.

-

IoT Sensors & Edge Devices

Temperature, pressure, moisture, vibration, electric current and various other types of sensors collect data from machines as they run. GE’s Predix platform uses IoT sensors installed on turbines to continuously stream data, for example.

-

Cloud Computing / Data Storage

The data collected by IoT sensors and edge devices must be stored so they can be analyzed. Many companies rely on services like AWS to centralize manufacturing data.

-

Analytics

Big data analytics platforms like AWS and IBM Maximo perform efficient data analysis across massive fleets of equipment to detect anomalies.

- Machine Learning Algorithms

Predictive models forecast failures and also learn from past failures to create robust detection systems. SparkCognition, for example, uses deep learning to predict failures in oil & gas equipment with high accuracy.

- Automation & Orchestration Tools

Triggering workflows for alerts is a key step in the PdM process. Integration with something like ServiceNow or SAP helps automate work orders based on AI predictions.

Real-World Applications and Use Cases

Companies are already deploying AI-assisted predictive maintenance systems to help them detect anomalies and prevent failures. Here are a few examples of how BMW, Toyota, GE, and Shell are leveraging this powerful tool in manufacturing technology.

- BMW & Toyota: Preventing Robot Arm Failures in Automotive Engineering

These companies use IoT predictive analytics to monitor vibration and torque in robotic arms and detect early signs of mechanical degradation or motor overheating. This has led to reduced unplanned production line stoppages and improved robotic cell uptime.

- GE Aviation: Improving Jet Engine Health Monitoring

Jet engines are equipped with hundreds of sensors to monitor temperature, pressure, vibration, and fuel efficiency. In this way, GE uses AI to predict component wear, allowing airlines to schedule maintenance between flights and avoid failures mid-flight.

- Shell: Monitoring Pipelines and Compressors in Oil & Gas

Shell uses AI models to analyze acoustic and vibration data, allowing them to detect corrosion, pressure leaks, or compressor fatigue. This has prevented pipeline ruptures, reduced emergency interventions, and optimized maintenance cycles.

Conclusion

Using machine learning for equipment monitoring is a powerful way to leverage future-proof technology to improve your bottom line. But it can feel overwhelming to navigate the complexities of manufacturing software development. That’s why a trusted partner like Telliant is critical for your success. Our experienced teams can help you strategize and implement your shift to PdM smoothly.

Contact us today to learn more.

It’s easy to write off buzzwords like “Agile”, “Scrum”, and “continuous development” as just meaningless hype. But these methodologies are popular for a reason and are becoming the de facto way to write software in enterprise software modernization.

“Composable architecture” is one of those terms, and if you’ve only ever built monolithic software, you might be tempted to dismiss it. But composable architecture gives you greater agility, innovation, cost benefit, and efficiency, which is why many enterprises are shifting to it now.

What is Composable Architecture?

Composable architecture is a software development principle in which systems are built from “composable” (i.e. modular, interchangeable, discrete) parts. Each component is designed to be self-contained and independently deployable, and the approach rests on these core principles:

- Modularity: Each part encapsulates specific functionality.

- Reusability: Components can be reused across systems without being changed.

- Interoperability: Components communicate using standard APIs.

- Autonomy: Components are self-deploying and self-scaling.

- Flexibility: The system as a whole supports rapid iteration and experimentation.

Key components of Composable Architecture

The key components of composable architecture are Packaged Business Capabilities (PBCs), Application Programming Interfaces (APIs), microservices, and headless architecture.

- Packaged Business Capabilities

PBCs are the modular components that make up composable architecture. They are a key concept in MACH (Microservices, API-first, Cloud-native, Headless) architecture and in Gartner’s Composable Enterprise vision. Each PBC should map to a real business capability (like “consumer identity” or “catalogue”) and should be self-contained, exposing APIs to communicate with other PBCs.

- Application Programming Interfaces

APIs are the way PBCs communicate. An API simply outlines a standardized set of rules for communicating between two pieces of software. For example, a REST API exposes standard HTTP endpoints that accept requests such as GET and POST and typically respond with data encoded in JSON.

- Microservices

Microservices are similar to PBCs, but the concept operates at a different level of abstraction and the definition serves a different audience. Where a business exec might call a certain part of an application a “PBC”, a developer might refer to the underlying code as a “microservice.”

- Headless Architecture

In headless architecture, the frontend (“head”) is decoupled from the backend. In a traditional system like WordPress, the frontend and backend are glued together: one cannot operate without the other. In a headless system, a frontend might be built in React, Vue, Angular, or some other frontend framework, while a completely separate CMS manages content on the backend and exposes it via an API.
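To make the API and headless ideas above more tangible, here is a minimal sketch of a JSON-over-HTTP backend built only on Python’s standard library. The `/items` path, the in-memory `CATALOGUE` data, and the `CatalogueHandler` and `make_server` names are assumptions made up for this illustration; a production service would normally use a web framework and add authentication, validation, and persistence.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical in-memory data standing in for a "catalogue" capability.
CATALOGUE = {"1": {"name": "widget"}}

class CatalogueHandler(BaseHTTPRequestHandler):
    def _send_json(self, status, payload):
        body = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def do_GET(self):
        # GET /items returns the whole catalogue as JSON.
        if self.path == "/items":
            self._send_json(200, CATALOGUE)
        else:
            self._send_json(404, {"error": "not found"})

    def do_POST(self):
        # POST /items adds a new item from the JSON request body.
        if self.path != "/items":
            self._send_json(404, {"error": "not found"})
            return
        length = int(self.headers.get("Content-Length", 0))
        try:
            item = json.loads(self.rfile.read(length))
        except json.JSONDecodeError:
            self._send_json(400, {"error": "invalid JSON"})
            return
        item_id = str(len(CATALOGUE) + 1)
        CATALOGUE[item_id] = item
        self._send_json(201, {"id": item_id})

    def log_message(self, format, *args):
        pass  # keep the example quiet; real services should log requests

def make_server(port=0):
    """Create (but do not start) a server; port 0 lets the OS pick a free port."""
    return HTTPServer(("127.0.0.1", port), CatalogueHandler)
```

Calling `make_server(8000).serve_forever()` would start the backend locally. Any frontend, whatever it is built with, could then read from and write to it over HTTP, which is the decoupling that headless architecture relies on.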

Benefits of Composable Architecture

Composable architecture increases speed and agility, makes maintenance and updates easier, allows for more efficient scaling, and allows teams to operate autonomously.

- Speed & Agility

Teams can quickly build new features by combining existing parts, meaning a faster time to market. Independent components allow you to replace individual modules without ripping apart the whole system, allowing for easier iteration and improved flexibility.

- Maintenance & Updates

Rather than deploying an update to your entire system, you can update just a discrete module. You will need robust automated regression testing in place to ensure that updates to one part don’t break logic elsewhere. In addition, smaller, composable parts are easier to maintain, meaning less technical debt for your devs.

- Scaling

Services like AWS provide composable pieces of business logic that can be chained together and then managed independently through a simple UI. Each part of the system can be scaled independently and only as needed (and scaling is often handled automatically by AWS).

- Team Autonomy

Teams can be responsible for managing independent parts of the system, working in parallel to build and maintain their respective pieces, while staying in communication about the system as a whole. This means faster iteration, and reduces cross-team dependencies and deployment friction.

Key Drivers for Enterprise Adoption

As digital transformation and customer expectations accelerate, enterprise businesses are hurrying to adopt composable architecture practices. Many teams have discovered a need for greater agility and faster time to market, while others are driven by a desire for reusable components that can be deployed across their systems.

Adopting composable architecture also allows businesses to avoid vendor lock-in, and to set up data flow between services that will support easier implementation of analytics, AI, ML, and other tools becoming prevalent in the modern landscape.

Common Challenges in Composable Architecture and Strategic Solutions

Here are a few of the most frequently experienced pitfalls of implementing composable architecture, and how your business can prepare for and navigate them.

Challenge: Complex Integration

With many services to manage, integration becomes complex and difficult to manage.

Solution: Standardize communication between components (for example, using REST, GraphQL, or other standard APIs). Consider an API gateway like Amazon API Gateway or Kong to manage interoperability. Use event-driven architecture to loosely couple components.
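As a rough illustration of what “loosely coupled” means in an event-driven design, here is a minimal in-process publish/subscribe sketch. The `EventBus` class and the `order.placed` event name are hypothetical; real systems usually put a message broker or a managed event service in this role rather than an in-memory object.

```python
from collections import defaultdict

class EventBus:
    """A tiny in-memory publish/subscribe hub (illustrative only)."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, event_type, handler):
        self._subscribers[event_type].append(handler)

    def publish(self, event_type, payload):
        # The publisher does not know who is listening; it only names the event.
        handlers = self._subscribers.get(event_type, [])
        for handler in handlers:
            handler(payload)
        return len(handlers)

bus = EventBus()
log = []

# Two independent components react to the same event without knowing about each other.
bus.subscribe("order.placed", lambda e: log.append(("inventory", e["order_id"])))
bus.subscribe("order.placed", lambda e: log.append(("email", e["order_id"])))

notified = bus.publish("order.placed", {"order_id": 42})
```

Because the ordering component only publishes an event, a new consumer (analytics, for example) can be added later without changing the publisher at all.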

Challenge: Security

Many services mean many entry points into the system, which increases attack surface and exposes many opportunities for security breaches.

Solution: Implement centralized identity and access management using something like AWS IAM, OAuth, or Okta. Use zero-trust principles, enforce role-based access control, and audit your APIs regularly.
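The role-based access control idea can be sketched in a few lines. The role names, permission strings, and the `is_allowed` helper below are assumptions for illustration only; in practice these rules would live in your identity provider or policy engine rather than in application code.

```python
# Hypothetical mapping of roles to the permissions they grant.
ROLE_PERMISSIONS = {
    "viewer": {"catalogue:read"},
    "editor": {"catalogue:read", "catalogue:write"},
    "admin": {"catalogue:read", "catalogue:write", "users:manage"},
}

def is_allowed(roles, permission):
    """Deny by default: allow only if one of the caller's roles grants the permission."""
    return any(permission in ROLE_PERMISSIONS.get(role, set()) for role in roles)

can_view = is_allowed(["viewer"], "catalogue:read")        # True
can_edit = is_allowed(["viewer"], "catalogue:write")       # False
unknown_role = is_allowed(["intern"], "catalogue:read")    # False: unknown roles get nothing
```

Checking every request this way, instead of trusting anything that is already inside the network, is the core of the zero-trust approach mentioned above.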

Challenge: Resistance

Your teams may resist change, particularly if they are used to a monolithic or all-in-one platform.

Solution: Start small. Try out the change with a pilot team and then turn that team into evangelists for the new approach. Align the changes with the value they will bring. Explain clearly why this will benefit not just the business, but devs too.

Key Steps to Shift to Composable Architecture in Enterprise Software

Making the shift to composable architecture requires long-term strategy – it can’t be done all at once. Fortunately, with some up-front planning, you can execute the migration with minimal pain.

Conclusion

If your teams are feeling the need for composable architecture, but you’re unsure where or how to begin, then an experienced software product development partner can guide you through the process. At Telliant, we provide tailored software solutions that can help you not only strategize, design, and implement your shift to microservices, but also maintain, and scale your new system.

Contact us today to find out how our experienced team can help you.

Artificial Intelligence has moved from buzzword to business backbone. What began as a niche area for research and big tech is now powering services across every industry. From automating operations and unlocking insights from data to personalizing customer experiences, AI is changing how businesses think, build, and compete.

In this article, we’ll dig into the role AI plays in modern organizations: where it’s delivering value, what that means for your business, and how to start integrating it into your organization.

Identifying AI Opportunities

Before you can start integrating AI into your organizational systems, you need to figure out where it actually fits, and where it can deliver the most impact. Here are some common use cases for artificial intelligence, including predictive analytics, natural language processing (NLP) and automation.

- Predictive Analytics

Predictive analytics means using historical data to forecast future outcomes in sales, churn, demand, risk, and more. E-commerce companies can use it to determine how likely customers are to buy, and which ones might become repeat customers. In finance, it can be used to predict loan defaults or prevent fraud, and healthcare companies can use it to anticipate patient readmissions or disease progression.

- Natural Language Processing (NLP)

NLP is the process of teaching machines to understand and generate human language. It’s widely used in customer service to build things like AI chatbots and automated help systems, in HR for resume screening and automated candidate matching, and to make websites easier to query through search.

- Automation

Automation lets AI take over repetitive or rule-based tasks, often faster and with fewer errors than a human. It’s hugely useful in operations like invoice processing, report generation, and inventory updates. Marketing companies can automate personalized content generation and audience segmentation, and IT professionals can use it to scale infrastructure, detect anomalies, and handle incident response.
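As a small illustration of the predictive analytics use case above, the sketch below fits a straight line to historical data and uses it to forecast the next period. The monthly sales figures are made up, and the `fit_line` helper is a plain least-squares fit written for this example; real forecasting would use richer models and far more data.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Made-up history: units sold in months 1 through 6.
months = [1, 2, 3, 4, 5, 6]
units_sold = [100, 110, 120, 130, 140, 150]

slope, intercept = fit_line(months, units_sold)
forecast_month_7 = slope * 7 + intercept  # about 160 for this perfectly linear data
```

The same pattern (learn from history, then predict the next value) underlies churn, demand, and risk forecasting, just with more variables and more capable models.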

Technical Foundations to Support AI Initiatives

Once you’ve established fit and feasibility, it’s important to get familiar with the data, infrastructure and models that power AI. Even if you won’t be implementing it yourself, a broad understanding of these concepts will help you properly manage teams that deal with the technology.

Data Requirements and Engineering Considerations

Without the right data, your models won’t work, no matter how sophisticated they are. You need clean, well-labeled, representative data, especially for supervised learning. You also need a lot of it. Preparing your data is often the bulk of the work (a common rule of thumb puts it at around 80%), so don’t wait until the modeling phase to do it.

Engineering will need robust pipelines and data warehouses, plus effective monitoring and logging, and your company will need to comply strictly with privacy and security regulations, particularly if you deal with PII (Personally Identifiable Information).

Model Selection: Picking the Right Approach

Choosing a model requires matching the right technique to the problem. There are many techniques and ways to approach AI implementation, and without a basic understanding of the underlying principles, it’s easy to dismiss these terms as mere “buzzwords” or to be swayed into using the wrong approach simply because it sounds good.

Supervised vs Unsupervised Learning

- Supervised Learning:

You train the model on labeled data (input + correct answer).

- Use for: Fraud detection, customer churn prediction, sentiment analysis.

- Common algorithms: Linear regression, decision trees, random forests, neural nets.

- Unsupervised Learning:

You feed the model only input data, no labels, and it finds structure on its own.

- Use for: Customer segmentation, anomaly detection, topic modeling.

- Common algorithms: K-means, hierarchical clustering, PCA.
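To show what “finds structure on its own” looks like in the unsupervised case, here is a deliberately tiny one-dimensional k-means sketch. The `kmeans_1d` function, its deterministic initialization, and the monthly spend figures are assumptions for illustration; a library implementation would handle multiple dimensions, random restarts, and convergence checks.

```python
def kmeans_1d(values, k=2, iterations=10):
    """Cluster numbers into k groups by repeatedly assigning each to its nearest centroid."""
    if k < 2 or k > len(values):
        raise ValueError("k must be between 2 and the number of values")
    ordered = sorted(values)
    # Deterministic start: spread the initial centroids evenly across the sorted data.
    centroids = [ordered[i * (len(ordered) - 1) // (k - 1)] for i in range(k)]
    clusters = [[] for _ in range(k)]
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for v in values:
            nearest = min(range(k), key=lambda c: abs(v - centroids[c]))
            clusters[nearest].append(v)
        # Move each centroid to the mean of its cluster (keep it if the cluster is empty).
        centroids = [
            sum(c) / len(c) if c else centroids[i] for i, c in enumerate(clusters)
        ]
    return centroids, clusters

# Made-up monthly spend per customer; no labels are provided.
monthly_spend = [12, 15, 14, 90, 95, 100]
centroids, clusters = kmeans_1d(monthly_spend, k=2)
# clusters separates the low spenders [12, 15, 14] from the high spenders [90, 95, 100]
```

Nothing in the input says which customers are “low” or “high” spenders; the grouping emerges from the data alone, which is the key difference from supervised learning.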

Deep Learning vs Traditional ML (Machine Learning)

- Traditional ML: Easier to train, interpret, and deploy. Great for tabular data or small-to-mid-sized problems. scikit-learn models are a typical example.

- Deep Learning: Neural networks with multiple layers. Best for unstructured data like text, images, or audio, but needs massive amounts of data and compute power.

Challenges in Integrating AI in Business Processes

Integration is the hardest part of AI adoption. From incompatible systems to latency and performance issues, to scaling, data quality, and maintenance, getting an AI model to work in a real-world system can be tough.

- Data silos and quality

AI needs good, current data. But many companies still rely on legacy data systems that move slowly and leave models stale. If your model’s predictions aren’t keeping up with reality, it might be time to consider automating your pipelines and moving toward real-time or near-real-time data ingestion.

- Security and access control

AI often involves sensitive data (customer info, transactions, internal metrics), and every integration point is a potential security risk. To avoid accidentally exposing data or model endpoints, apply least-privilege access, encrypt everything, and monitor endpoints.

- Model interpretability

Your teams need to be able to understand your AI. If your model is so complex and sophisticated that you can’t explain why it made certain decisions (or at least ask the right questions to figure out why), then you have a problem.

- Interoperability and maintenance

AI needs to integrate with your existing system: CRMs, ERPs, databases, APIs, cloud services. But legacy infrastructure isn’t always ready to handle real-time predictions or continuous data streams. Use lightweight APIs, middleware, or batch processing where real-time isn’t realistic to connect modern ML outputs to old-school systems.

- Governance and Ethical AI

Integrating AI into your stack will not only impact your devs’ day-to-day and your management concerns. It will also affect your legal team. You need to ensure you’re compliant with government regulations, and also that you’re addressing any ethical concerns as they arise.

- Bias Mitigation

At its worst, AI is extremely good at reflecting and even amplifying existing bias in data, then making bad decisions based on that bias. If you discover that you’re deploying models affecting people’s access to jobs, loans, healthcare, or even just content, you need to actively reduce bias.

- Regulation

You also need to stay compliant with emerging AI regulations. The legal landscape around AI is evolving quickly, especially in places like the EU. If you’re building AI in healthcare, finance, hiring, or critical infrastructure, there are existing and proposed regulations you should be aware of.

- AI Act (EU)

The AI Act is EU legislation that regulates AI at the system level. It entered into force in August 2024, with its obligations phasing in over the following years. It categorizes AI systems into risk tiers and regulates them according to the risk each tier presents.

- GDPR

The GDPR is existing regulation that is quickly being adapted to accommodate challenges presented by AI. It includes provisions specific to automated decision-making, and requires that you provide the right to explanation, right to object, and data protection by design.
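Returning to the bias mitigation point above, one simple way to start measuring bias is to compare how often a model approves people in different groups. The sketch below computes per-group selection rates and their ratio. The group labels and decisions are made up, the function names are hypothetical, and the 0.8 threshold in the comment is only a common rule of thumb (the “four-fifths rule” from US employment guidance), not a legal test for your situation.

```python
from collections import defaultdict

def selection_rates(decisions):
    """decisions: iterable of (group, approved) pairs. Returns the approval rate per group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}

def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest; 1.0 means all groups are treated alike."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved in any group
    return min(rates.values()) / highest

# Made-up model decisions: group A approved 8 of 10 times, group B 4 of 10 times.
decisions = [("A", True)] * 8 + [("A", False)] * 2 + [("B", True)] * 4 + [("B", False)] * 6
rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)  # 0.5 here, well below the 0.8 rule of thumb
```

A low ratio does not prove the model is unfair, but it is a signal to investigate the training data and features before the model affects real decisions.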


Telliant’s Approach

By combining strategic insight with technical expertise, Telliant Systems provides a framework for businesses looking to develop AI-enabled software solutions. Our approach emphasizes strategy, design, and maintenance, and has been implemented across various industries, including healthcare, fintech, education, and supply chain management.

We collaborate with you on strategic planning, custom software development, designing the user experience, maintenance, data architecture, integration, and QA to build a comprehensive solution tailored to meet your specific business needs.

Conclusion

Building AI that works in the real world takes more than a model and some data. It takes the right foundation, the right integration, and the right partner. From aligning on process fit and feasibility, to navigating compliance and bias, to designing systems that are explainable and scalable, success with AI requires strategy. Telliant’s framework can help you build sustainable, effective AI solutions that will solve real problems and supercharge your business decisions.

Businesses often don’t think about software scalability until it’s too late. It’s challenging to predict your scaling needs upfront, especially for small startups and independent businesses. Unfortunately, poorly scaled applications can create negative user experiences and ultimately cost a business dearly.

In this article, we’ll provide actionable tips for scaling your software and highlight the ways these solutions can be applied to your business.

Key Strategies for Improving Software Scalability

1. Adopt a Microservices Architecture

Microservices architecture is a method of designing a software application using a series of independent services that communicate with each other via APIs. Each microservice is responsible for a different facet of the application’s functionality, such as payments, authentication, or fraud detection.

Adopting microservices architecture allows a company to distribute workloads and integrate continuous development techniques. It makes an application easier to scale, as each microservice can be scaled independently as needed.

Security becomes a concern in microservice architecture as more potential weaknesses are introduced. The increased attack surface can be mitigated by implementing systems like multi-factor authentication and zero-trust architecture to authenticate every request.

2. Implement Horizontal Scaling & Load Balancing

There are three primary types of scaling: horizontal, vertical, and database.

- Horizontal (scaling out): Expanding capacity by adding more servers or virtual machines to balance the load. Services like AWS and Google Cloud enable automated horizontal scaling based on the required resources.

- Database (optimizing storage): Improving database performance through sharding, replication, or caching. This reduces response time, but it can increase the risk of data conflicts if not properly managed.

- Vertical (scaling up): Upgrading the capacity of an existing server by adding more CPU, RAM, or storage. This improves processing power and is easier to implement than horizontal scaling because it requires no increase in network complexity.

These days, horizontal scaling is easily implemented through services like AWS and Google Cloud. With just a few clicks, you can set up these platforms to handle load balancing, session data, and containerization for you. If you prefer self-managed solutions, NGINX (for load balancing) and Kubernetes (for container orchestration) are popular choices.

Automating horizontal scaling allows your business to scale without concern. Instances can be auto-scaled across availability zones and load-balanced globally, allowing network traffic to be routed for high availability and near 100% uptime, improving software scalability.
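To illustrate how horizontal scaling and load balancing fit together, here is a minimal round-robin balancer sketch. The `RoundRobinBalancer` class and the server names are hypothetical; managed load balancers and tools like NGINX add health checks, weighting, and session handling on top of this basic idea.

```python
class RoundRobinBalancer:
    """Hands out servers in rotation so that requests are spread evenly."""

    def __init__(self, servers):
        self._servers = list(servers)
        self._next = 0

    def add_server(self, server):
        # Scaling out: a new instance simply joins the rotation.
        self._servers.append(server)

    def route(self):
        if not self._servers:
            raise RuntimeError("no servers available")
        server = self._servers[self._next % len(self._servers)]
        self._next += 1
        return server

balancer = RoundRobinBalancer(["app-1", "app-2"])
first_four = [balancer.route() for _ in range(4)]  # alternates between app-1 and app-2

balancer.add_server("app-3")  # e.g. triggered by an auto-scaling rule
next_three = [balancer.route() for _ in range(3)]  # now includes app-3
```

The application code never changes when capacity is added; only the pool behind the balancer grows, which is what makes horizontal scaling easy to automate.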

3. Optimize Database Performance

There are many ways to optimize database performance. Utilize database sharding and partitioning to distribute data efficiently and implement indexing and query optimization to minimize bottlenecks. Businesses can also utilize NoSQL databases, such as MongoDB and Cassandra, for high-volume, distributed workloads or implement caching strategies like Redis.

- Indexing: Indexing allows a query to locate a record without having to scan the entire table. Be sure to use the correct index types for your queries, and avoid over-indexing, as this can slow down write operations (inserts, updates, and deletes). Additionally, consider setting up indexing analytics to track how your indexes are being utilized and the impact they have on your query speed.

- Sharding and Partitioning: Sharding involves splitting data across multiple server instances and routing data to those instances based on a predefined set of rules. For example, users 1-1000 might go to shard 1, or EU users go to one shard while US users go to another. This improves read and write scalability. Partitioning is the process of creating subdivisions within tables within a single database instance and then updating or requesting data from a specific partition of the table. This enables faster queries on large datasets. Typically, a combination of sharding and partitioning is necessary for optimal performance.
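The routing rules described above can be sketched as small functions. The function names, the shard size, the shard count, and the region-to-shard mapping are all assumptions for illustration; real deployments usually rely on the database’s own sharding support or a routing layer.

```python
import hashlib

def range_shard(user_id, shard_size=1000):
    """Range-based routing: users 1-1000 go to shard 1, 1001-2000 to shard 2, and so on."""
    return (user_id - 1) // shard_size + 1

# Hypothetical region-based routing table.
REGION_SHARDS = {"EU": "shard-eu", "US": "shard-us"}

def region_shard(region):
    return REGION_SHARDS[region]

def hash_shard(key, num_shards=4):
    """Hash-based routing: spreads keys evenly when there is no natural range or region."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards

shard_for_user_1000 = range_shard(1000)  # 1
shard_for_user_1001 = range_shard(1001)  # 2
shard_for_eu_user = region_shard("EU")   # "shard-eu"
```

Range and region rules are easy to reason about but can create hot spots; hash-based routing spreads load more evenly at the cost of making range queries harder.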

4. Automate Performance Testing

Performance testing is crucial for organizations seeking to maintain a consistently high standard of user experience in high-traffic situations. Load testing, stress testing, spike testing, and endurance testing are all necessary components of a comprehensive testing plan.

Applications like JMeter and Gatling can simulate high-traffic scenarios, and k6 is a popular framework for testing sudden traffic spikes. Locust and Tsung are good choices for endurance and scalability testing. Ideally, these should be adopted as part of a continuous integration (CI) and continuous deployment (CD) cycle to ensure that new updates don’t degrade performance.
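Dedicated tools like the ones above are the right choice for real load tests, but the underlying idea is simple enough to sketch: send many concurrent requests, record how long each takes, and summarize the latencies. In the sketch below, `fake_endpoint` is a stand-in that just sleeps, and the function names and request counts are assumptions for illustration.

```python
import math
import time
from concurrent.futures import ThreadPoolExecutor

def timed_call(fn):
    """Run fn once and return how long it took, in seconds."""
    start = time.perf_counter()
    fn()
    return time.perf_counter() - start

def run_load_test(fn, total_requests=50, concurrency=10):
    """Call fn total_requests times across a pool of worker threads and summarize latency."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        latencies = sorted(pool.map(lambda _: timed_call(fn), range(total_requests)))
    n = len(latencies)
    p95_index = math.ceil(0.95 * n) - 1  # nearest-rank 95th percentile
    return {
        "requests": n,
        "median_s": latencies[n // 2],
        "p95_s": latencies[p95_index],
    }

def fake_endpoint():
    # Stand-in for a real HTTP call; pretends every request takes about 10 ms.
    time.sleep(0.01)

summary = run_load_test(fake_endpoint, total_requests=20, concurrency=5)
```

In a CI/CD pipeline, a check such as failing the build when `summary["p95_s"]` exceeds an agreed budget is one way to catch performance regressions before they reach users.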

5. Leverage Cloud & Serverless Architectures

Cloud-based services, such as AWS Lambda and Azure Functions, enable you to leverage powerful auto-scaling capabilities. Within these platforms, you can improve your ability to scale quickly by implementing containerization through things like Docker or Kubernetes. Containerization enables you to isolate applications and packages, along with their dependencies, so they can be deployed consistently across multiple instances.

Choosing the right cloud model (public, private, or hybrid) is important if you want to leverage these types of services effectively. A public cloud, such as Azure or AWS, offers good general software scalability and cost-effectiveness, while a private cloud (i.e., hosted on your own servers) provides greater control and security. Many businesses today opt for a hybrid cloud model, which combines both public and private clouds for increased flexibility.

Key Takeaways on Software Scalability

If your user base is growing and user demands are pushing your current application’s capacity to its limit, it’s time to think about scaling. It was probably time to think about scaling two years ago, when you launched the company!

Designing with scalability in mind from the start, leveraging cloud and serverless solutions, automating testing, and optimizing database performance will help you scale to meet those growing user demands while maintaining consistent user experience and building trust in your brand.

Good data is crucial to healthcare: it forms the backbone of decision-making, treatment plans, diagnoses, and system optimization. Without access to solid patient data, clinicians would be unable to tailor treatment to patient histories or provide proper care.

These days, real-time data from various sources (EHRs, wearables, etc.) enables faster diagnosis and interventions. Remote Patient Monitoring (RPM) allows for tracking of patients outside traditional clinical settings, increasing the number of data points about a patient, and bypassing potentially faulty data collection methods like self-reporting.

Patients as well as doctors report that remote monitoring systems make them feel more comfortable managing care outside clinical settings.

The Transformative Benefits of Remote Patient Monitoring

When it comes to treating most patient concerns, early detection and timely intervention are crucial. The benefits of chronic disease monitoring cannot be overstated, for both patients and their care providers.

In addition to improving early detection and intervention, remote patient monitoring also improves engagement and compliance with treatment plans. Patients find it easier to adhere to plans when they have the support of a remote monitoring system, and doctors feel more confident that they will be able to step in should a patient fail to stick to an agreed-upon strategy.

Finally, RPM allows for broadened access to care, increasing the number of touchpoints a patient has with the system and their care provider. Checkups are easier to facilitate, especially via telemedicine platforms, and issues and emergencies are easier to detect.

Regulatory and Technical Requirements for RPM Software

RPM software must adhere to strict regulatory requirements that uphold industry standards, legal compliance, and data privacy protections, and that ensure the overall security of healthcare systems.

Regulatory Requirements

Any software that tracks or accesses patient data must comply with HIPAA standards to protect patient privacy and confidentiality. Data must be encrypted, measures must be taken to ensure no unauthorized person gains access to data, and patients must consent to their data being collected, stored, or transferred.

Next, software or devices intended to track patient health may require FDA clearance or approval, depending on their intended use and risk classification. RPM software must also comply with the 21st Century Cures Act, whose information blocking provisions ensure that data is not overly restricted and can be shared across systems.

If RPM integrates with telemedicine systems, it must also comply with telehealth regulations at both the state and federal level. It must also align with reimbursement policies, such as those used by Medicare and Medicaid.

Finally, for software operating within the EU, GDPR applies to protect personal data.

Technical Requirements

RPM must meet the interoperability standards for data exchange defined by HL7. Ideally, it should use modern standards like FHIR to facilitate the seamless exchange of data between systems. RPM software should also support LOINC codes for lab and clinical measurements.

Next, RPM must utilize modern data security practices, including data encryption, secure APIs, and multi-factor authentication (MFA).
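To give a sense of what a FHIR payload with a LOINC code looks like, here is a minimal sketch that builds a heart rate reading as a FHIR-style Observation in JSON. The `heart_rate_observation` helper, the patient ID, and the reading itself are made up for illustration, and the result has not been validated against a FHIR server or profile. LOINC 8867-4 is the code for heart rate.

```python
import json

def heart_rate_observation(patient_id, bpm, measured_at):
    """Build a FHIR-style Observation dict for a single heart rate reading."""
    return {
        "resourceType": "Observation",
        "status": "final",
        "code": {
            "coding": [
                {
                    "system": "http://loinc.org",
                    "code": "8867-4",
                    "display": "Heart rate",
                }
            ]
        },
        "subject": {"reference": f"Patient/{patient_id}"},
        "effectiveDateTime": measured_at,
        "valueQuantity": {
            "value": bpm,
            "unit": "beats/minute",
            "system": "http://unitsofmeasure.org",
            "code": "/min",
        },
    }

# Hypothetical reading from a wearable device.
observation = heart_rate_observation("example-123", 72, "2024-01-15T08:30:00Z")
payload = json.dumps(observation, indent=2)
```

Because the measurement is identified by a standard code rather than a free-text label, any system that understands FHIR and LOINC can interpret the reading without custom mapping.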

The user experience of an RPM is paramount to its success. A user-centric design that is intuitive for both patients and care providers is critical. So too is compatibility with various devices, including smartphones, tablets, and computers.

To allow for scalability, RPMs should be built on cloud storage, with a robust system for redundancy and backup. Data analytics and monitoring should be deployed across the whole network to monitor for outages and potential attacks.

The Future of Remote Patient Monitoring: Beyond Integration

RPM is poised for tremendous growth in the coming decade. Technological advances, particularly in the fields of artificial intelligence and IoT, will continue to shift care toward more patient-centric and digital solutions.

- Increased integration with AI and ML (Machine Learning). By leveraging AI and machine learning to improve analytics, RPM systems will use AI-driven insights to deliver tailored plans based on patient health patterns.

- Expanded use of wearables. Remote monitoring will improve healthcare outcomes for rural and underserved populations who don’t have access to traditional clinical facilities.

- Better chronic disease management. Early detection, continuous real-time monitoring, and improved biometrics will play a pivotal role in managing things like asthma, hypertension, diabetes, COPD, and heart disease.

- Advancements in mental health treatment. Remote monitoring improves outcomes not just for physical concerns: wearables will be able to track symptoms of mental health conditions, and integrations with telehealth systems will improve access to mental health services.

Why Choosing the Right Partner is Crucial for Your RPM Integrations

The success of your remote patient monitoring system depends not only on the technology but also on your organization’s ability to integrate it smoothly with existing healthcare infrastructures. You must also ensure compliance with regulatory and technical standards and meet both patient and provider needs.

Schedule a meeting to explore how the right partner can support your healthcare software development journey.

Robotic Process Automation (RPA) is the process by which bots are used to automate routine tasks that a human would normally do. In the healthcare space, RPA can be hugely beneficial: streamlining administrative processes, improving efficiency, reducing inaccuracy, and freeing up staff to focus on more important tasks.

Ultimately, RPA leads to enhanced quality of patient care and operational performance as humans are free to spend more time with patients and take more care with their records.

The Problem: Manual Processes Stall Healthcare Operations

We call it “human error” for a reason. There’s nothing wrong with human error in itself; it’s an unavoidable part of human life. Unfortunately, it can negatively impact healthcare workflows and patient care, sometimes resulting in disastrous outcomes.

Patient registration, insurance verification, claims processing, and data reconciliation are all areas where operational inefficiencies or mistakes can lead to catastrophe. Key areas affected by human error in healthcare operations include:

- Prescription mistakes

- Drug interaction issues

- Diagnostic errors

- Data entry and documentation errors

- Communication breakdowns

- Patient identification issues

- Admin mistakes

What an RPA Solution Looks Like in a Healthcare Setting

Deploying RPA to handle some of the more repetitive or routine tasks, where humans sometimes make mistakes, can improve patient outcomes for very little overhead. Automating administrative tasks like billing, insurance claims, and appointment scheduling can save healthcare providers significant time and cost, letting them focus on patient care.

Key Processes That Can Be Automated Using RPA

Let’s look at some of the more common use cases for RPA in healthcare.

- Patient Registration and Intake: Intake can be automated both in person and remotely, by collecting information from email correspondence, phone records, online forms, and other sources. This greatly increases the efficiency and accuracy with which patients are admitted.

- Appointment Scheduling: Scheduling appointments and reminders for patients is critical to maintaining adherence to care plans. It also reduces no-show rates, ultimately saving patients and clinics money.

- Claims Processing: The entire claims process can be automated by extracting relevant information from patient records, entering it into billing systems, and submitting claims to insurance companies. This not only reduces human error in medical coding, but also accelerates billing cycles, minimizes claim rejections, and ensures accurate reimbursements.

- Lab and Test Result Management: Bots can automatically collect and sort lab results from various sources (e.g., lab systems, physician offices.) They also update patient records and alert providers when results are available or when anomalies are found. This means faster results, reduced manual effort, and quicker response times.

- Medication Inventory and Administration: Tracking patient medication is a complex and delicate task, and keeping clinics well stocked is important. Robotic process automation can greatly improve this aspect of the healthcare system by strengthening supply-chain management and the accuracy of dispensation.

- Record Management: The most obvious and common use cases for RPA in healthcare are record and patient data management. Bots can retrieve and automatically organize patient records, lab results, medical imaging reports, or discharge summaries.
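As a rough illustration of the lab result use case above, the sketch below groups incoming results by patient and flags values outside a reference range. It is a minimal sketch under stated assumptions: the `LabResult` fields, the `triage` helper, and the reference ranges are hypothetical placeholders, not clinical guidance or any vendor's API.

```python
from dataclasses import dataclass

# Hypothetical reference ranges for illustration only; real ranges come from
# the lab and vary by assay, age, and patient context.
REFERENCE_RANGES = {
    "glucose_mg_dl": (70.0, 140.0),
    "potassium_mmol_l": (3.5, 5.0),
}


@dataclass
class LabResult:
    patient_id: str
    test: str
    value: float
    source: str  # e.g. "lab_system" or "physician_office"


def triage(results):
    """Group results by patient and collect those outside the reference range."""
    by_patient = {}
    flagged = []
    for r in results:
        by_patient.setdefault(r.patient_id, []).append(r)
        # Tests without a known range are never flagged in this sketch.
        low, high = REFERENCE_RANGES.get(r.test, (float("-inf"), float("inf")))
        if not (low <= r.value <= high):
            flagged.append(r)
    return by_patient, flagged


results = [
    LabResult("p-001", "glucose_mg_dl", 95.0, "lab_system"),
    LabResult("p-002", "potassium_mmol_l", 6.1, "physician_office"),
    LabResult("p-001", "potassium_mmol_l", 4.2, "lab_system"),
]
by_patient, flagged = triage(results)
for r in flagged:
    print(f"ALERT {r.patient_id}: {r.test}={r.value} (from {r.source})")
```

In a real deployment the flagged list would feed a provider notification channel and the grouped results would be written back to the patient record; both steps are left out here because they depend on the systems involved.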

These are just a handful of the ways that RPA can improve patient outcomes and healthcare employee workflows. It can also be used in HR and payroll management, Revenue Cycle Management (RCM), regulatory compliance monitoring, and many other areas.

- Building Cyber Resilience Through Technology Integration: Beyond patient- and employee-centered applications, RPA can bolster a system’s cybersecurity. Software can automate security monitoring and threat detection, improve compliance with security standards, back up and recover data automatically, and automate the patching of bugs and recovery from system crashes.

Automatic deployment and monitoring are considered a standard part of the software product development lifecycle. However, many healthcare organizations still rely on outdated legacy systems that don’t adhere to these standards, leaving them vulnerable to attacks by bad actors.

Knowing the right software solutions to integrate into a custom healthcare software solution can be tricky. Working with the right partner is important to ensure regulatory compliance and technical robustness.

What are the Broader Implications of Healthcare RPA Integration?

Beyond the immediate benefits to patients and employees, healthcare RPA integration can move the entire healthcare industry forward in three key areas.

Scalability

RPA allows an organization to scale without increasing the workforce. Patient volumes and operational demands are growing every day—scaling to meet these demands without RPA may not be logistically feasible for some institutions.

Compliance Support

Keeping up with the rapidly changing landscape of health regulations, privacy regulations, and regulations relating to AI and consumer data is challenging. RPA supports adherence to regulatory requirements like GDPR and HIPAA by reducing the risk of human error in routine, rule-based tasks. This leads to fewer compliance breaches and helps avoid costly penalties.

Data-Driven Decision Making

RPA can automate the process of collecting and analyzing data from a wide range of sources. This allows for real-time insights into patient health and leads to better decision-making. When combined with generative AI, the possibilities for enhanced decision-making through data explode.

Turning Inefficiency into Opportunity

Robotic Process Automation offers benefits that extend far beyond just streamlining admin. It enables healthcare organizations to scale efficiently and cut costs, while simultaneously enhancing patient care and improving employees’ work experiences.

RPA will no doubt play a critical role in creating more efficient and responsive healthcare in the coming decades.

Telemedicine is no longer optional; it has become a necessity. It is quickly becoming the foundation of modern healthcare: as healthcare continues to become more digital, telemedicine platforms are advancing quickly to better address the needs of patients and providers. Indeed, telemedicine has revolutionized how patient care is delivered.

According to Forbes, changes in healthcare legislation have largely been responsible for driving advancements in telemedicine, by expanding the scope of services that can be offered (such as in NY and CA), requiring insurance companies to reimburse telehealth services the same way they do in-person visits, and by introducing measures like the Interstate Medical Licensure Compact.

It is no wonder that telemedicine has become a crucial part of modern medicine and is now included in patient care programs.

Key Strategies for Enhancing Your Telemedicine Platform

If your organization offers a limited selection of telemedicine services, or if your offerings are outdated (or even non-existent), you may be looking for ways to upgrade your telemedicine platform. Here are some key strategies to keep in mind as you embark on this mission.

1. Building a Dedicated Development Team is Important

Don’t try to overhaul your existing system or build one from scratch without a dedicated software development team. A dedicated team can focus exclusively on the needs of your platform and ensure that it’s tailored to meet the demands of telemedicine.

A committed dev team will be able to iterate faster and adapt more quickly to the changing healthcare landscape. In today’s software world, being able to quickly pivot and include new features, like AI-driven diagnostics, enhanced data security, or EHR/EMR integration, is not just important: it’s necessary.

A specialized team will have more motivation to focus on long-term vision and strategy, rather than simply building for now. Building flexible, scalable solutions is non-negotiable for long-term success, and a team that knows they’ll be the ones working on this codebase ten years down the road is much more likely to build it better now.

2. Agile Development is Crucial

Similarly, adopting an Agile approach to development will allow you to iterate more quickly and adapt to changing patient and clinic needs. Continuous feedback and analysis of patient data will allow you to build needed features and make useful improvements. Risk can be better managed when automated monitoring and security systems are deployed. Finally, cross-functional collaboration will make integrating with other teams and organizations more seamless.

3. The Role of a Robust Reporting Architecture

Your reporting architecture captures, analyzes, and presents data so that data may be used to drive decisions, build new features, and optimize patient care. Not only that, it improves the overall security of your platform by detecting threats and alerting you to them.

4. Scalability and Performance Enhancements

Consider how this platform will be used in decades to come. What types of integrations should you be prepared to adopt? How can you build for maximum flexibility and scalability now, without sacrificing user-friendliness and patient-centered care?

These are questions better answered by a dedicated dev team, or software partner with years of experience in the telemedicine sector, than by contractors and external employees.

Enhancing Telemedicine Platforms with Remote Patient Monitoring

Remote Patient Monitoring (RPM) continues to advance in 2025. As medical wearables become more prevalent and AI allows for better data aggregation and analysis, RPM lets care providers track patient health in real time and respond as issues arise.

Integrating your telemedicine platform with an RPM solution allows you to reap the benefits of real-time data collection and make those benefits easily accessible to your patients. Some of those benefits include:

- Immediate Alerts

- Patient dashboards for improved patient engagement

- AI-fueled diagnostics

- Better adherence to care plans

- Seamless sharing of data between care providers

- Reduced hospital readmissions

- Personalized treatment plans

- Enhanced chronic disease management

- Support for aging populations

- Support for underserved and remote communities

- Cost savings to both patients and clinics
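To make the "immediate alerts" benefit more concrete, here is a minimal sketch of one way an RPM integration might decide when to notify a care team: it fires only after several consecutive out-of-range readings, which helps avoid alerting on a single noisy measurement. The `ReadingMonitor` class, the thresholds, and the sample readings are all hypothetical and chosen purely for illustration; real alerting rules would be set by clinicians.

```python
from collections import deque


class ReadingMonitor:
    """Signal an alert when several consecutive readings fall outside a range."""

    def __init__(self, low, high, consecutive=3):
        self.low = low
        self.high = high
        # Keep only the most recent `consecutive` in/out-of-range flags.
        self.recent = deque(maxlen=consecutive)

    def add(self, value):
        """Record a reading; return True if an alert should fire."""
        self.recent.append(not (self.low <= value <= self.high))
        return len(self.recent) == self.recent.maxlen and all(self.recent)


# Hypothetical glucose readings in mg/dL; the thresholds are placeholders.
monitor = ReadingMonitor(low=70, high=180, consecutive=3)
readings = [110, 190, 200, 150, 185, 195, 210]
alerts = [monitor.add(v) for v in readings]
print(alerts)  # only the final reading triggers an alert
```

Requiring consecutive out-of-range readings is a design choice that trades a short delay for fewer false alarms; a platform could just as reasonably alert on a single critical value.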

Meeting the Requirements for Telemedicine in 2025

Before your organization starts planning the development of a telemedicine platform, you should spend some time familiarizing yourself and your stakeholders with the regulatory and technical requirements for telemedicine.

Regulatory Compliance

Regulatory compliance will become more critical throughout 2025 as telemedicine solutions become more prevalent. You must ensure your platform meets all the licensing requirements, reimbursement policies, clinical guidelines, and any telehealth-specific regulations laid out at both the state and federal level.

Data Privacy and Security

As consumers become more data-savvy, privacy and safety concerns continue to be of national importance. Compliance with HIPAA and GDPR is just the beginning: you should also ensure your platform meets technical specifications that address common security issues. Multi-factor authentication, regular security audits, and vulnerability testing are the first steps in securing your patients’ highly sensitive data.
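One common second factor is a time-based one-time password (TOTP), as specified in RFC 6238. The sketch below shows the core computation using only the Python standard library. It is a simplified illustration, not a production implementation: the `totp` and `verify` helper names are our own, and a real platform would typically rely on a vetted authentication library and add rate limiting and secure secret storage.

```python
import hashlib
import hmac
import struct
import time


def totp(secret: bytes, at=None, step=30, digits=6):
    """Compute an RFC 6238 time-based one-time password using HMAC-SHA1."""
    if at is None:
        at = time.time()
    counter = int(at) // step
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation, as in RFC 4226
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def verify(secret: bytes, submitted: str, at=None, step=30, window=1):
    """Accept codes from adjacent time steps to tolerate small clock drift."""
    if at is None:
        at = time.time()
    return any(
        hmac.compare_digest(totp(secret, at + i * step, step), submitted)
        for i in range(-window, window + 1)
    )


# RFC 6238 test vector: ASCII secret "12345678901234567890" at time 59.
secret = b"12345678901234567890"
print(totp(secret, at=59, digits=8))  # 94287082
print(verify(secret, "287082", at=59))  # True
```

The `window` parameter accepts codes from the neighboring 30-second steps, a usual allowance for clock drift between the server and the patient’s device.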

User Experience and Accessibility

On the other side of the technical compliance coin is the user experience. Not only should your app be user-friendly and intuitive for both clients and providers, it must also meet accessibility specifications for individuals with disabilities.

Why Choosing the Right Partner is Crucial for Your Digital Transformation

Partnering with the right software provider is a forward-thinking strategy. You’ll reap the benefits of building a strong, collaborative partnership with an experienced telemedicine software specialist.

The right provider can give you access to cutting-edge technology, top-tier talent, and confidence in your compliance with regulatory requirements. They can help you more easily integrate with existing healthcare systems, and build robust, future-proof systems that are flexible and scalable.

Schedule a meeting to explore how the right partner can support your digital transformation journey.